As machine learning models and datasets grow, single-machine training often becomes too slow, too memory-constrained, or too operationally fragile for practical use. Scalable training addresses this by distributing computation, data, model state, or orchestration across multiple CPUs, GPUs, or nodes. This whitepaper explains the foundations of distributed training and focuses on two influential practical ecosystems: Horovod and Ray.

Abstract

Modern machine learning systems increasingly require large-scale training for deep neural networks, gradient-boosted ensembles, hyperparameter search, reinforcement learning, and large data pipelines. Distributed computing enables these workloads to scale by parallelizing data processing, gradient computation, parameter synchronization, or task execution. This paper explains why scalable training is needed, how distributed training differs from simple multi-threading, and what trade-offs arise in synchronization, communication, fault tolerance, and convergence. It covers data parallelism, model parallelism, pipeline parallelism, synchronous and asynchronous optimization, communication collectives, all-reduce strategies, speedup and efficiency, and the operational roles of Horovod and Ray.

1. Introduction

Suppose a training objective is:

$$\theta^* = \arg\min_{\theta} \frac{1}{N} \sum_{i=1}^{N} L(f(x_i; \theta), y_i),$$

where:

- $\theta$ are the model parameters

- $N$ is the number of training samples

- $L$ is the loss function

On a single machine, training iteratively computes gradients and updates parameters. When $N$ is large, $\theta$ is large, or compute demand is high, a single device may no longer be sufficient.

2. Why Scalable Training Is Needed

Scalable training becomes necessary for several reasons:

- datasets are too large to process efficiently on one machine

- models are too large to fit in a single device's memory

- training is too slow to sustain the desired iteration velocity

- hyperparameter search requires many parallel trials

- production retraining must meet time or cost constraints

In large-scale ML, training system design becomes as important as model design.

3. Forms of Parallelism

Distributed training usually relies on one or more forms of parallelism:

- data parallelism: replicate the model, shard the data

- model parallelism: shard the model across devices

- pipeline parallelism: partition layers or stages across devices

- task parallelism: execute independent training jobs or sub-workflows concurrently

4. Data Parallelism

In data parallelism, each worker holds a copy of the model parameters $\theta$ and processes a different minibatch shard. If there are $W$ workers, worker $w$ computes a gradient $g_w$ on its local minibatch.

The aggregated gradient is often the mean of the worker gradients:

$$g = \frac{1}{W} \sum_{w=1}^{W} g_w.$$

The parameter update then becomes:

$$\theta := \theta - \eta g,$$

where $\eta$ is the learning rate.
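As a minimal sketch of this update, the following pure-Python simulation shards a toy dataset across four simulated workers, computes one local gradient per worker, and applies the averaged gradient. The one-parameter linear model, the data, and every function name here are illustrative assumptions; no real devices or frameworks are involved.

```python
# Toy simulation of synchronous data parallelism (no real devices involved).
# Model: y ≈ theta * x with squared-error loss; all names are illustrative.

def local_gradient(theta, shard):
    """Mean gradient of (theta * x - y)^2 over one worker's shard."""
    return sum(2.0 * (theta * x - y) * x for x, y in shard) / len(shard)

def data_parallel_step(theta, shards, lr):
    """One synchronous step: each worker computes g_w, then g = mean(g_w)."""
    grads = [local_gradient(theta, shard) for shard in shards]  # per-worker g_w
    g = sum(grads) / len(grads)                                 # averaged gradient
    return theta - lr * g                                       # theta := theta - eta * g

# Eight samples drawn from y = 3x, split evenly across W = 4 workers.
data = [(float(x), 3.0 * x) for x in range(1, 9)]
W = 4
shards = [data[w::W] for w in range(W)]

theta = 0.0
for _ in range(200):
    theta = data_parallel_step(theta, shards, lr=0.01)
print("estimated theta:", theta)
```

Because the shards in this sketch have equal size, the mean of the per-worker gradients equals the gradient over the full batch, which is why the averaged update behaves like single-worker training on the combined minibatch.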

5. Model Parallelism

In model parallelism, the model itself is partitioned across devices because it cannot fit into one device’s memory or because compute must be distributed across different model components.

If the parameter vector is decomposed as:

$$\theta = [\theta_1, \theta_2, \ldots, \theta_K],$$

then different parameter blocks may live on different devices. Forward and backward passes must then coordinate data transfer across those partitions.

6. Pipeline Parallelism

Pipeline parallelism splits the model into sequential stages and assigns each stage to a different device. Different minibatches can be in flight simultaneously through different stages.

If the model is decomposed as

$$f = f_m \circ \cdots \circ f_2 \circ f_1,$$

then each $f_k$ may run on a different device. This reduces memory pressure but introduces scheduling complexity and possible pipeline bubbles.
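To make the idea of a pipeline bubble concrete, the sketch below builds an idealized forward-only schedule in which stage s processes micro-batch j at tick s + j. It assumes every stage takes exactly one tick per micro-batch, which real pipelines rarely achieve; the function names and the choice of four stages and eight micro-batches are hypothetical.

```python
# Idealized forward-only pipeline schedule.
# Assumption: every stage takes exactly one tick per micro-batch.

def pipeline_schedule(num_stages, num_microbatches):
    """Return grid[tick][stage] holding a micro-batch index, or None if idle."""
    total_ticks = num_stages + num_microbatches - 1
    grid = [[None] * num_stages for _ in range(total_ticks)]
    for j in range(num_microbatches):
        for s in range(num_stages):
            grid[s + j][s] = j  # stage s sees micro-batch j at tick s + j
    return grid

def bubble_fraction(grid):
    """Fraction of (tick, stage) slots in which a stage sits idle."""
    slots = [cell for row in grid for cell in row]
    return slots.count(None) / len(slots)

grid = pipeline_schedule(num_stages=4, num_microbatches=8)
for tick, row in enumerate(grid):
    print(tick, ["." if cell is None else cell for cell in row])
print("bubble fraction:", bubble_fraction(grid))
```

Under these assumptions, with m stages and n micro-batches the idle fraction works out to (m - 1) / (m + n - 1), so feeding more micro-batches through the pipeline shrinks the bubble.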

7. Synchronous vs Asynchronous Training

7.1 Synchronous Training

In synchronous distributed training, all workers compute their local gradients and then synchronize before updating parameters. This means every worker uses the same parameter version at each step.

7.2 Asynchronous Training

In asynchronous training, workers may update parameters independently or communicate with some delay. This improves utilization in some settings but introduces staleness, because some gradients may be computed on older parameter versions.

8. Gradient Aggregation

A central operation in distributed training is gradient aggregation. If worker gradients are

$$g_1, g_2, \ldots, g_W,$$

then a common synchronized update uses the mean:

$$g = \frac{1}{W} \sum_{w=1}^{W} g_w.$$

The mean preserves learning-rate semantics similar to single-worker minibatch training, though optimization behavior still changes as global batch size increases.

9. Effective Batch Size

If each worker processes a local batch of size $b$ and there are $W$ workers, the global batch size is:

$$B = Wb.$$

This affects optimization dynamics. A very large $B$ can reduce gradient noise and improve throughput, but may hurt generalization or require learning-rate scaling strategies.

10. Learning Rate Scaling

In data-parallel training, practitioners often increase the learning rate when increasing the global batch size. A common heuristic is linear scaling:

$$\eta' = W\eta,$$

where $\eta$ is the original learning rate and $W$ is the worker count.

This is not universally optimal, but it is a common practical starting point, especially with warmup schedules.
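The linear scaling heuristic and a warmup schedule can be sketched together as follows. The linear warmup shape, the 500-step warmup length, the base learning rate of 0.1, and the function name are assumptions chosen for illustration, not recommended values.

```python
def scaled_learning_rate(base_lr, num_workers, step, warmup_steps):
    """Linear scaling (eta' = W * eta) with a linear warmup from eta to eta'."""
    target_lr = base_lr * num_workers
    if warmup_steps <= 0 or step >= warmup_steps:
        return target_lr
    progress = step / warmup_steps
    return base_lr + progress * (target_lr - base_lr)

local_batch = 32                           # b, hypothetical
num_workers = 8                            # W, hypothetical
global_batch = num_workers * local_batch   # B = W * b

for step in (0, 250, 500, 1000):
    lr = scaled_learning_rate(0.1, num_workers, step, warmup_steps=500)
    print(f"step={step:4d} global_batch={global_batch} lr={lr:.3f}")
```

Starting at the single-worker rate and ramping up gradually is one way to avoid instability in early steps, when a large learning rate is most likely to cause divergence.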

11. Communication Cost

Distributed training performance depends not only on compute time but also on communication time. If one iteration takes compute time $T_{\text{comp}}$ and communication time $T_{\text{comm}}$, then step time is approximately:

$$T_{\text{step}} = T_{\text{comp}} + T_{\text{comm}}.$$

Scaling is good only if adding workers reduces compute cost faster than communication overhead grows.

12. Speedup and Parallel Efficiency

If single-worker training time is $T_1$ and $W$-worker training time is $T_W$, then speedup is:

$$S(W) = \frac{T_1}{T_W}.$$

Parallel efficiency is:

$$E(W) = \frac{S(W)}{W}.$$

Ideal scaling would give $S(W) = W$ and $E(W) = 1$, but in practice communication and synchronization reduce efficiency.
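The following sketch computes speedup and efficiency under a deliberately simple cost model: compute time divides evenly across workers, while communication time grows linearly with the number of peers. That cost model, the 100-unit single-worker time, and the 0.5-unit per-peer cost are all hypothetical; measured values from a real cluster should replace them.

```python
def step_time(t1, workers, comm_per_peer):
    """Assumed model: compute splits evenly, communication grows with peer count."""
    return t1 / workers + comm_per_peer * (workers - 1)

def speedup(t1, tw):
    """S(W) = T_1 / T_W."""
    return t1 / tw

def efficiency(t1, tw, workers):
    """E(W) = S(W) / W."""
    return speedup(t1, tw) / workers

T1 = 100.0  # hypothetical single-worker time
for W in (1, 2, 4, 8, 16, 32):
    TW = step_time(T1, W, comm_per_peer=0.5)
    print(f"W={W:2d} T_W={TW:6.2f} S={speedup(T1, TW):5.2f} E={efficiency(T1, TW, W):4.2f}")
```

Even with this mild communication term, efficiency falls steadily as workers are added, and beyond some worker count the total time starts to rise again.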

13. Amdahl-Style Perspective

If a fraction $p$ of a workload is perfectly parallelizable and the rest is not, then speedup is bounded. A classical Amdahl-style approximation is:

$$S(W) = \frac{1}{(1 - p) + p/W}.$$

While ML systems do not always match this exactly, the intuition remains important: bottlenecks outside the parallelized section limit total gain.
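A short numerical illustration of this bound, assuming a hypothetical workload in which 95% of the time is parallelizable:

```python
def amdahl_speedup(p, workers):
    """Amdahl-style speedup for parallel fraction p on the given worker count."""
    return 1.0 / ((1.0 - p) + p / workers)

p = 0.95  # hypothetical parallel fraction
for W in (1, 8, 64, 1024):
    print(f"W={W:4d} speedup={amdahl_speedup(p, W):.2f}")
print(f"limit as W grows: {1.0 / (1.0 - p):.2f}")
```

The serial 5% caps the achievable speedup at about 20 regardless of worker count, which is why input pipelines, checkpointing, and other non-parallel stages deserve attention before more hardware is added.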

14. Parameter Server vs All-Reduce

Two major synchronization patterns are common in distributed training.

14.1 Parameter Server

In parameter-server architectures, dedicated nodes store parameters and workers push gradients or pull updated weights. This can be flexible, especially for asynchronous designs, but the server can become a communication bottleneck.

14.2 All-Reduce

In all-reduce architectures, workers directly participate in collective communication to aggregate gradients. Each worker ends with the same reduced gradient without relying on a central parameter server. This is especially common in synchronous GPU training.

15. Ring All-Reduce

A common collective strategy is ring all-reduce, where workers are arranged logically in a ring and exchange gradient chunks in phases. This can improve bandwidth utilization and avoid central bottlenecks.
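The ring exchange can be simulated in plain Python to show its two phases: a reduce-scatter, after which each worker owns one fully summed chunk, and an all-gather, which circulates the finished chunks. This is a single-process sketch with hypothetical names; it assumes the vector length is divisible by the worker count and does not reflect how any particular library implements the collective.

```python
def ring_allreduce(worker_vectors):
    """Simulate ring all-reduce (sum) over equal-length vectors, one per worker."""
    W = len(worker_vectors)
    n = len(worker_vectors[0])
    assert n % W == 0, "sketch assumes vector length divisible by worker count"
    size = n // W
    # chunks[r][c] is worker r's current copy of chunk c.
    chunks = [[list(v[c * size:(c + 1) * size]) for c in range(W)]
              for v in worker_vectors]

    # Phase 1 (reduce-scatter): at step t, worker r sends chunk (r - t) mod W
    # to its right neighbor, which adds it to its own copy.
    for t in range(W - 1):
        sends = [((r - t) % W, list(chunks[r][(r - t) % W])) for r in range(W)]
        for r, (c, payload) in enumerate(sends):
            dst = (r + 1) % W
            chunks[dst][c] = [a + b for a, b in zip(chunks[dst][c], payload)]

    # Phase 2 (all-gather): at step t, worker r forwards the finished chunk
    # (r + 1 - t) mod W to its right neighbor, which overwrites its copy.
    for t in range(W - 1):
        sends = [((r + 1 - t) % W, list(chunks[r][(r + 1 - t) % W])) for r in range(W)]
        for r, (c, payload) in enumerate(sends):
            chunks[(r + 1) % W][c] = payload

    return [[x for chunk in worker for x in chunk] for worker in chunks]

# Four simulated workers, each holding an 8-element "gradient".
gradients = [[float(10 * r + i) for i in range(8)] for r in range(4)]
summed = ring_allreduce(gradients)
averaged = [[x / len(gradients) for x in vec] for vec in summed]
print(averaged[0])
```

In this scheme each worker sends 2(W - 1) chunks of size n/W, so the data volume per worker stays close to 2n regardless of worker count, which is the source of the bandwidth advantage over a central aggregator.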

Horovod is widely associated with efficient all-reduce-based distributed deep learning.

16. Fault Tolerance

Distributed training increases failure surface area. Worker nodes may fail, network links may degrade, or long jobs may be interrupted. Scalable training systems therefore need:

- checkpointing

- restart logic

- elastic membership or retry strategies

- state recovery for optimizers and schedulers

If checkpoints are too infrequent, much computation may be lost on failure. If they are too frequent, checkpointing overhead becomes high.
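This trade-off can be estimated with a first-order model in which the wasted fraction of time is the checkpoint cost divided by the interval, plus the expected lost work (about half an interval per failure) divided by the mean time between failures. Minimizing that expression gives the well-known Young approximation, interval ≈ sqrt(2 × checkpoint cost × MTBF). The model ignores restart time and assumes failures are rare relative to the interval; the 60-second checkpoint cost and one-day MTBF are hypothetical.

```python
import math

def expected_overhead(interval, checkpoint_cost, mtbf):
    """Approximate wasted fraction: checkpoint time plus expected lost work."""
    return checkpoint_cost / interval + interval / (2.0 * mtbf)

def young_interval(checkpoint_cost, mtbf):
    """First-order optimal checkpoint interval under the model above."""
    return math.sqrt(2.0 * checkpoint_cost * mtbf)

C = 60.0          # hypothetical checkpoint cost in seconds
M = 24 * 3600.0   # hypothetical mean time between failures in seconds
best = young_interval(C, M)

for interval in (300.0, best, 4 * 3600.0):
    overhead = expected_overhead(interval, C, M)
    print(f"interval={interval:8.0f}s overhead={overhead:.3f}")
```

Checkpointing every five minutes in this scenario spends far more time writing state than it saves, while a four-hour interval loses too much work per failure; the estimate lands between the two.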

17. Horovod Overview

Horovod is a distributed deep learning framework designed to simplify and accelerate synchronous data-parallel training across multiple GPUs and nodes. It integrates with major frameworks such as TensorFlow, Keras, and PyTorch.

Horovod’s core strength is its efficient use of collective communication, especially all-reduce, to average gradients across workers.

18. Horovod Programming Model

Horovod allows a training script to remain close to standard single-worker logic, while adding distributed behavior through a few key changes:

- initialize distributed context

- pin workers to devices

- wrap the optimizer with distributed logic

- broadcast initial parameters

- scale learning rate appropriately when needed

This makes Horovod attractive for teams that want to scale existing framework-native training code with minimal changes.
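The five changes listed above might look as follows with Horovod's PyTorch bindings. This is a sketch under stated assumptions rather than a reference implementation: the linear model, synthetic data, and hyperparameters are placeholders, and the function names `scaled_lr` and `train` are hypothetical. Imports are placed inside `train` so the file can be read on a machine without Horovod or PyTorch installed.

```python
def scaled_lr(base_lr, world_size):
    """Linear learning-rate scaling heuristic (a starting point, not a rule)."""
    return base_lr * world_size

def train(num_steps=10, base_lr=0.01):
    # Lazy imports: running this function requires PyTorch and Horovod.
    import torch
    import horovod.torch as hvd

    hvd.init()                                   # 1. initialize distributed context
    use_cuda = torch.cuda.is_available()
    if use_cuda:
        torch.cuda.set_device(hvd.local_rank())  # 2. pin this process to one GPU
    device = torch.device("cuda" if use_cuda else "cpu")

    model = torch.nn.Linear(10, 1).to(device)    # placeholder model
    optimizer = torch.optim.SGD(
        model.parameters(),
        lr=scaled_lr(base_lr, hvd.size()),       # 5. scale learning rate by worker count
    )

    # 3. wrap the optimizer so gradients are averaged with all-reduce
    optimizer = hvd.DistributedOptimizer(
        optimizer, named_parameters=model.named_parameters()
    )

    # 4. broadcast initial state from rank 0 so all workers start identically
    hvd.broadcast_parameters(model.state_dict(), root_rank=0)
    hvd.broadcast_optimizer_state(optimizer, root_rank=0)

    loss_fn = torch.nn.MSELoss()
    for _ in range(num_steps):
        # Synthetic batch; a real job would give each rank its own data shard.
        x = torch.randn(32, 10, device=device)
        y = torch.randn(32, 1, device=device)
        optimizer.zero_grad()
        loss = loss_fn(model(x), y)
        loss.backward()
        optimizer.step()

    if hvd.rank() == 0:
        print("final loss:", loss.item())
```

A script like this, with a call to `train()` added at the bottom, would typically be launched with something like `horovodrun -np 4 python train.py`, which starts one process per worker.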

19. Horovod and Gradient Averaging

In Horovod-style synchronous training, each worker computes a local gradient $g_w$, and all-reduce computes:

$$g = \frac{1}{W} \sum_{w=1}^{W} g_w.$$

Every worker then applies the same update and keeps parameters synchronized.

20. Horovod Strengths

- efficient all-reduce communication

- framework integrations with common DL stacks

- minimal modification path from single-worker code

- strong support for synchronous multi-GPU and multi-node training

21. Horovod Limitations

- primarily focused on distributed model training rather than general-purpose distributed application orchestration

- less broad than a full distributed computing platform for heterogeneous workflows

- best aligned with data-parallel deep learning rather than all distributed ML patterns

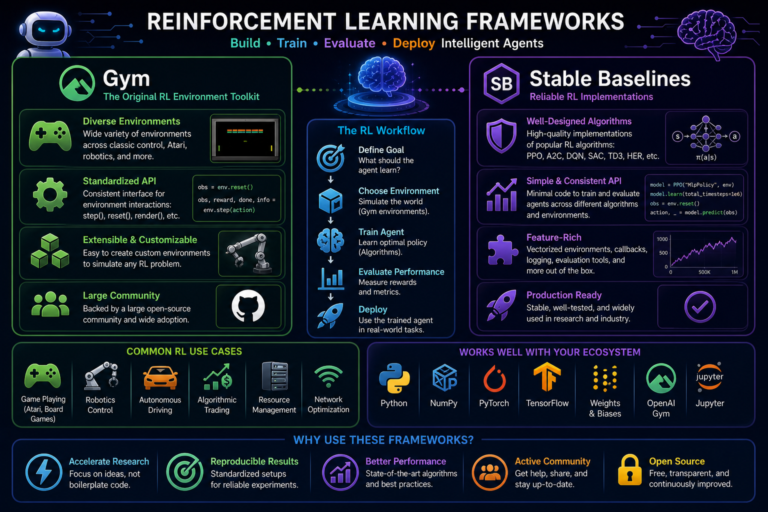

22. Ray Overview

Ray is a distributed computing framework for Python-oriented workloads that provides flexible primitives for distributed task execution, stateful actors, ML training, hyperparameter tuning, reinforcement learning, and serving.

Unlike Horovod, which is strongly centered on distributed deep learning synchronization, Ray is broader: it aims to provide a general distributed execution substrate for machine learning and AI systems.

23. Ray Task and Actor Model

Ray provides two central abstractions:

- tasks: stateless remote function execution

- actors: stateful remote workers with persistent state

This allows ML workflows to distribute not only training steps, but also data preparation, evaluation, search, orchestration, and serving pipelines.
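A minimal sketch of both abstractions, assuming Ray is installed: a stateless task scores data shards in parallel, and a stateful actor accumulates the results. The names `evaluate_shard`, `MetricTracker`, and `run_ray_demo` are hypothetical, and the Ray import is kept inside the function so the file can be loaded without Ray present.

```python
def run_ray_demo():
    import ray  # lazy import; running this function requires Ray

    ray.init(ignore_reinit_error=True)

    @ray.remote
    def evaluate_shard(shard):
        """Stateless task: runs on any available worker and keeps no state."""
        return sum(x * x for x in shard)

    @ray.remote
    class MetricTracker:
        """Stateful actor: one long-lived process holding a running total."""

        def __init__(self):
            self.total = 0

        def add(self, value):
            self.total += value
            return self.total

        def get(self):
            return self.total

    shards = [[1, 2], [3, 4], [5, 6]]
    futures = [evaluate_shard.remote(s) for s in shards]  # launched concurrently
    tracker = MetricTracker.remote()

    for partial in ray.get(futures):       # block until every task finishes
        ray.get(tracker.add.remote(partial))

    total = ray.get(tracker.get.remote())
    ray.shutdown()
    return total
```

Calling `.remote()` returns a future immediately, so the three shard evaluations can run in parallel, while the actor serializes updates to its own state.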

24. Ray for Distributed Training

Ray can coordinate distributed training by launching workers, managing resources, handling communication patterns, and integrating with framework-level training logic. It is often used in larger end-to-end ML systems where training is only one part of a broader distributed workflow.

25. Ray Train, Tune, and Serve Perspective

Ray’s broader ecosystem supports:

- distributed training

- hyperparameter tuning

- distributed inference and serving

- reinforcement learning workloads

This makes it attractive for organizations that want a unified platform for many ML runtime patterns rather than only gradient synchronization.

26. Hyperparameter Search at Scale

Distributed computing is not only about speeding up one training run. It is also about running many experiments in parallel. If there are candidate configurations

$$\lambda_1, \lambda_2, \ldots, \lambda_K,$$

hyperparameter tuning seeks:

$$\lambda^* = \arg\max_{\lambda} \mathrm{Score}(\mathrm{Train}(\lambda)).$$

Ray is especially strong for this kind of task-parallel orchestration.
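The task-parallel structure of this search can be sketched with Python's standard library, using a thread pool as a stand-in for cluster workers. The scoring function is a hypothetical placeholder for Score(Train(λ)), and the grid values are arbitrary; in a Ray-based system the pool would be replaced by distributed trials.

```python
from concurrent.futures import ThreadPoolExecutor

def train_and_score(config):
    """Hypothetical stand-in for Score(Train(lambda)); peaks at lr=0.1, depth=4."""
    lr, depth = config["lr"], config["depth"]
    return -((lr - 0.1) ** 2) - 0.01 * (depth - 4) ** 2

def parallel_search(configs, max_workers=4):
    """Evaluate every configuration concurrently and return the best one."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        scores = list(pool.map(train_and_score, configs))
    best_index = max(range(len(configs)), key=scores.__getitem__)
    return configs[best_index], scores[best_index]

configs = [{"lr": lr, "depth": d} for lr in (0.01, 0.1, 1.0) for d in (2, 4, 8)]
best_config, best_score = parallel_search(configs)
print("best configuration:", best_config)
```

Because the trials are independent, this pattern scales with almost no communication, in contrast to the gradient synchronization required inside a single training run.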

27. Distributed Data Processing

Scalable training also depends on scalable data input pipelines. If compute scales but data loading does not, GPUs may sit idle. Distributed systems must therefore coordinate:

- data sharding

- prefetching

- parallel decoding

- caching

- storage bandwidth

Effective training throughput is limited by the slowest major subsystem, including input pipelines.

28. Stragglers and Load Imbalance

In synchronous training, total step time is often determined by the slowest worker. If worker times are $T_1, T_2, \ldots, T_W$, then synchronous step time is close to:

$$T_{\text{sync}} \approx \max(T_1, \ldots, T_W).$$

Slow workers, known as stragglers, can severely reduce scaling efficiency.
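A small simulation illustrates why stragglers matter more as worker count grows. It assumes each worker normally takes one time unit per step and, independently with 2% probability, takes three units instead; these numbers and the function names are hypothetical.

```python
import random

def sync_step_time(worker_times):
    """A synchronous step finishes only when the slowest worker finishes."""
    return max(worker_times)

def simulate(num_workers, steps, straggler_prob, seed=0):
    """Average synchronous step time when workers occasionally run slow."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(steps):
        times = [1.0 + (2.0 if rng.random() < straggler_prob else 0.0)
                 for _ in range(num_workers)]
        total += sync_step_time(times)
    return total / steps

for W in (1, 8, 64):
    print(f"W={W:2d} mean step time={simulate(W, 1000, 0.02):.3f}")
```

Each worker is rarely slow on its own, but the chance that at least one of W workers is slow in a given step rises quickly with W, so the average step time drifts toward the straggler's time.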

29. Communication Compression and Optimization

Communication can be reduced through:

- gradient compression

- mixed precision

- sparser synchronization

- overlap of communication and computation

These techniques aim to reduce $T_{\text{comm}}$ without materially harming convergence.

30. Mixed Precision and Scaling

Mixed-precision training can accelerate distributed workloads by reducing memory and communication volume. If tensors are moved in lower precision while maintaining stable optimization through loss scaling or master weights, effective throughput can improve substantially on modern hardware.
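The role of loss scaling can be shown with Python's standard `struct` module, which can round a value to IEEE 754 half precision. In this sketch a small gradient underflows to zero when stored directly in half precision but survives when multiplied by a scale factor first and divided back out in higher precision. The gradient value and the static scale of 65536 are hypothetical; production systems usually adjust the scale dynamically.

```python
import struct

def to_fp16(value):
    """Round a Python float to IEEE 754 half precision and back."""
    return struct.unpack("<e", struct.pack("<e", value))[0]

small_grad = 1e-8       # below the smallest positive half-precision value
loss_scale = 65536.0    # hypothetical static loss scale (2**16)

naive = to_fp16(small_grad)                  # stored directly in half precision
scaled = to_fp16(small_grad * loss_scale)    # scaled before the cast
recovered = scaled / loss_scale              # unscaled in higher precision

print("naive fp16 gradient:    ", naive)
print("recovered after scaling:", recovered)
```

The unscaling step here runs in Python's double precision, standing in for the higher-precision master copy that mixed-precision training keeps for the optimizer update.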

31. Convergence Considerations

Faster wall-clock training does not guarantee identical optimization behavior. Changing worker count, batch size, and synchronization frequency can alter convergence. A scalable system should therefore monitor not only throughput but also:

- training loss stability

- validation metrics

- generalization gap

- gradient norm behavior

32. Horovod vs Ray

32.1 Horovod

Horovod is best understood as a specialized distributed deep learning tool emphasizing efficient gradient synchronization and framework-native scaling.

32.2 Ray

Ray is best understood as a broader distributed computing platform that can support distributed training, orchestration, tuning, serving, and other ML tasks.

32.3 Practical Distinction

If the main requirement is scaling synchronous deep learning with minimal code changes, Horovod is a natural fit. If the requirement includes distributed workflows across training, search, data handling, serving, and orchestration, Ray offers a broader platform approach.

33. Common Use Cases

Scalable distributed training is used for:

- large vision and NLP models

- foundation model pretraining and fine-tuning

- distributed reinforcement learning

- large-scale hyperparameter optimization

- multi-node feature and data processing

- time-constrained production retraining

34. Operational Considerations

Production-scale training systems must consider:

- cluster scheduling

- GPU availability

- cost per run

- checkpoint frequency

- artifact storage

- experiment lineage

- failure recovery

Scalable training is therefore both a systems problem and an ML optimization problem.

35. Strengths of Scalable Distributed Training

- reduces wall-clock training time

- enables training of larger models and datasets

- supports faster iteration and experimentation

- enables parallel hyperparameter search and workflow execution

- improves practical feasibility of industrial-scale ML

36. Limitations and Trade-Offs

- communication overhead can limit scaling

- larger batch sizes may alter optimization behavior

- infrastructure complexity increases significantly

- debugging distributed failures is harder than single-node debugging

- cost efficiency may degrade beyond certain scaling points

37. Best Practices

- Start by profiling the single-node bottleneck before distributing blindly.

- Use data parallelism first when the model fits comfortably on each device.

- Measure both speedup and convergence quality, not speed alone.

- Watch communication overhead and straggler effects as worker count grows.

- Use Horovod when efficient synchronous deep learning scaling is the main goal.

- Use Ray when the broader need includes training, tuning, orchestration, and distributed ML workflows.

- Checkpoint regularly and design for failure recovery from the start.

38. Conclusion

Scalable training is a core requirement for modern machine learning because single-device execution often cannot meet the needs of large datasets, large models, or rapid experimentation cycles. Distributed computing addresses this by decomposing training into parallelizable components such as data processing, gradient computation, parameter synchronization, and orchestration.

Horovod and Ray represent two important approaches within this space. Horovod focuses on efficient synchronous distributed deep learning, especially through all-reduce-based data parallelism. Ray offers a broader distributed computing framework for training, tuning, serving, and workflow coordination. Understanding scalable training therefore requires understanding not just ML algorithms, but also communication patterns, systems bottlenecks, synchronization trade-offs, and operational design at cluster scale.