Serverless machine learning refers to managed execution patterns in which cloud platforms abstract away much of the infrastructure required to train, deploy, scale, and operate machine learning workloads. Instead of directly provisioning and managing servers, clusters, autoscaling rules, and patching lifecycles, practitioners define workloads, artifacts, and configurations while the platform manages the underlying compute orchestration. This whitepaper explains the technical foundations, trade-offs, and operational implications of serverless ML, with a focus on AWS SageMaker and Google AI Platform-style managed services.

Abstract

Machine learning systems often require significant infrastructure management across experimentation, data processing, training, tuning, model packaging, endpoint deployment, monitoring, and retraining. Serverless ML platforms aim to reduce this burden by offering managed capabilities such as notebook environments, training jobs, hyperparameter tuning, model registries, batch predictions, real-time endpoints, autoscaling, pipelines, and monitoring without requiring teams to directly manage most of the servers involved. This paper explains what “serverless” means in the ML context, where it differs from traditional serverless compute, and how managed ML platforms support scalable model development and deployment. It covers workload abstraction, event-driven and managed-job execution, endpoint lifecycle, autoscaling, pricing considerations, cold starts, observability, vendor-managed training and inference, and practical decision criteria.

1. Introduction

Let a machine learning workflow be represented as:

W = (data, features, training, evaluation, artifact, deployment, monitoring).

In a self-managed environment, each component of W may require user-managed infrastructure such as:

- VM provisioning

- container orchestration

- network configuration

- autoscaling policies

- storage setup

- identity and access controls

- logging and monitoring plumbing

Serverless ML aims to abstract much of this infrastructure burden so that users interact more directly with ML primitives instead of raw infrastructure primitives.

2. What “Serverless” Means in ML

In the strictest cloud-computing sense, serverless means the user does not manage servers directly and pays mainly for workload execution or provisioned capability rather than owning long-lived infrastructure.

In ML, serverless is often broader and usually means:

- managed training jobs rather than manually managed clusters

- managed online endpoints with automatic scaling

- managed batch prediction jobs

- integrated experiment, registry, and deployment workflows

- reduced operational responsibility for patching and infrastructure lifecycle

It does not necessarily mean “no compute cost” or “instant scaling without trade-offs.” The platform still runs on servers; it simply manages them on the user’s behalf.

3. Why Serverless ML Is Attractive

Serverless ML is attractive because many teams want ML capability without becoming full-time infrastructure operators. Benefits often include:

- faster setup

- reduced DevOps burden

- integrated ML tooling

- managed autoscaling

- shorter path from experimentation to deployment

- better alignment with small or medium-sized platform teams

This is especially valuable when time-to-value matters more than custom infrastructure control.

4. ML Lifecycle Components Often Managed by Serverless Platforms

A mature managed ML platform may offer services for:

- data preparation jobs

- notebook environments

- training jobs

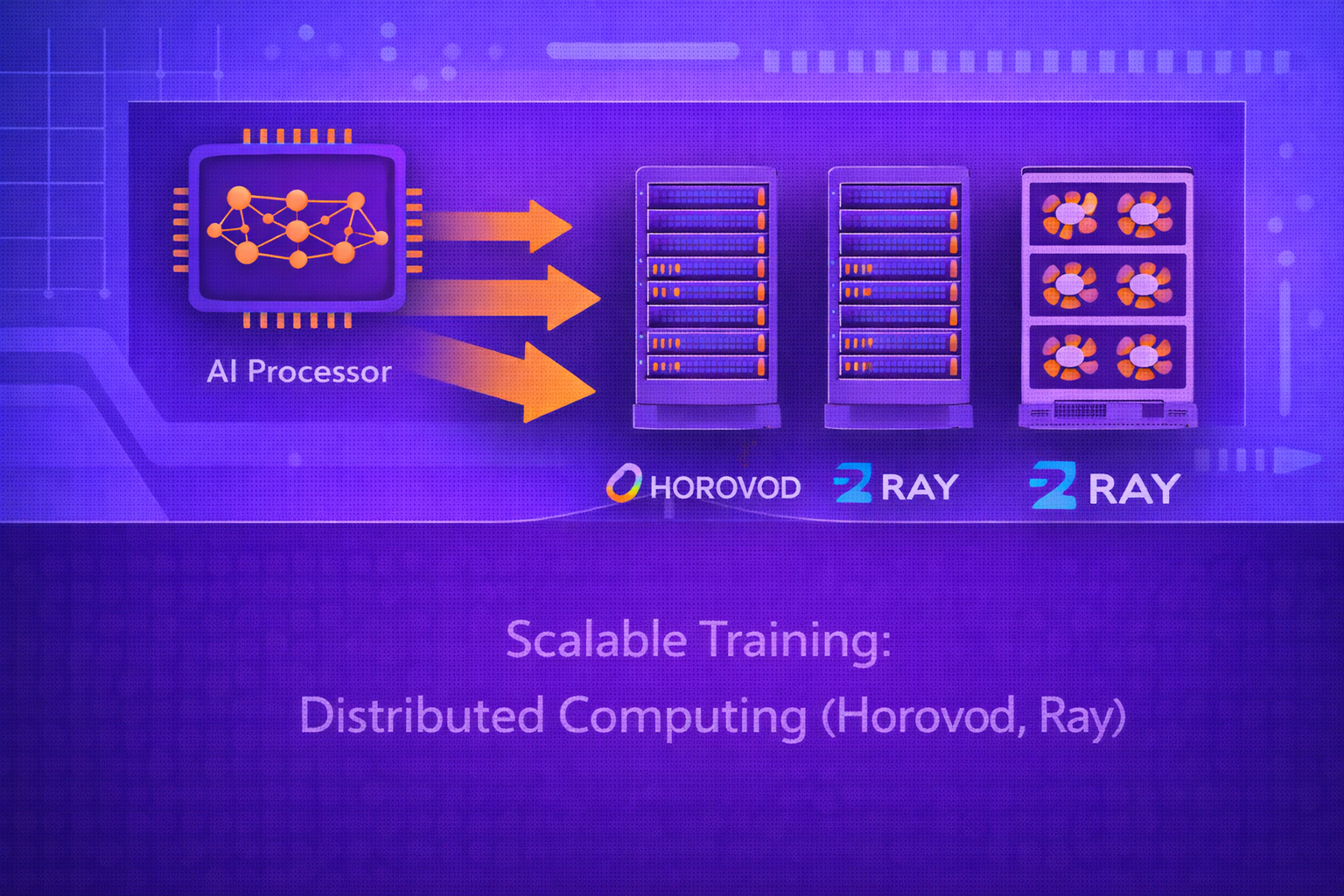

- distributed training

- hyperparameter tuning

- model packaging and registry

- real-time inference endpoints

- batch prediction

- pipelines and orchestration

- monitoring and drift detection

5. Managed Training Jobs

One of the most important serverless ML abstractions is the managed training job. Instead of provisioning compute nodes and launching containers manually, the user submits:

- training code or container

- input data locations

- hyperparameters

- resource requirements

- output artifact destinations

Conceptually, the platform executes:

M = Train(D, φ, λ, E)

while managing the lifecycle of the underlying infrastructure automatically.
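The items a user submits for a managed training job can be pictured as a plain data structure. The sketch below is illustrative only: `TrainingJobSpec`, its field names, and the `validate` helper are hypothetical and do not correspond to any particular provider's API.

```python
from dataclasses import dataclass, field


@dataclass
class TrainingJobSpec:
    """Hypothetical description of a managed training job (not a real provider API)."""

    container_image: str                 # training code or container
    input_data: dict[str, str]           # channel name -> input data location
    output_location: str                 # output artifact destination
    instance_type: str                   # resource requirement
    instance_count: int = 1
    hyperparameters: dict[str, str] = field(default_factory=dict)

    def validate(self) -> list[str]:
        """Return a list of problems; an empty list means the spec looks submittable."""
        problems = []
        if not self.container_image:
            problems.append("container_image is required")
        if not self.input_data:
            problems.append("at least one input channel is required")
        if not self.output_location:
            problems.append("output_location is required")
        if self.instance_count < 1:
            problems.append("instance_count must be >= 1")
        return problems
```

Everything outside this description, such as provisioning, log collection, and teardown, is what the platform takes over once the job is submitted.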

6. Managed Inference Endpoints

Serverless ML platforms often provide managed online serving endpoints. The user registers a model artifact and deployment configuration, and the platform handles:

- endpoint provisioning

- container startup

- request routing

- autoscaling

- health checks

- metrics and logs

The serving interface typically computes:

ŷ = f(x; θ)

for incoming requests x.

7. Batch Prediction as a Managed Primitive

Not all inference is online. Many business workflows rely on batch scoring. In serverless ML, batch prediction can be expressed as a managed job over dataset D:

$$\hat{Y} = \{ f(x_i; \theta) \}_{i=1}^{N}.$$

The platform orchestrates resource allocation, parallel execution, output storage, retries, and cleanup.
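As a rough local analogue of such a job, the sketch below scores a dataset in fixed-size chunks. The names `batch_predict` and `chunked` are hypothetical, and `model` stands in for any callable that scores one record; a managed service would distribute the chunks across workers and handle retries and output storage, which this sketch does not attempt.

```python
from typing import Callable, Iterator, Sequence


def chunked(records: Sequence, size: int) -> Iterator[Sequence]:
    """Yield consecutive slices of `records` with at most `size` items each."""
    if size < 1:
        raise ValueError("size must be >= 1")
    for start in range(0, len(records), size):
        yield records[start:start + size]


def batch_predict(model: Callable, records: Sequence, chunk_size: int = 1000) -> list:
    """Apply `model` to every record, chunk by chunk, preserving input order."""
    predictions = []
    for chunk in chunked(records, chunk_size):
        # On a managed platform each chunk could run on a separate worker.
        predictions.extend(model(x) for x in chunk)
    return predictions
```

For example, `batch_predict(lambda x: 2 * x, [1, 2, 3], chunk_size=2)` scores the three records in two chunks and returns the predictions in input order.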

8. Autoscaling in Serverless ML

A major value proposition of serverless ML is managed scaling. If the request load at time $t$ is $\lambda_t$, then the platform attempts to adjust active serving capacity $c_t$ so that:

$$c_{t+1} = g(\lambda_t, \text{latency target}, \text{policy}).$$

This reduces the need for manual capacity planning, though scaling behavior still depends on platform limits, provisioning delays, and traffic patterns.
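One simple form the scaling function could take is target tracking: choose enough instances that each handles no more than a target request rate, within policy bounds. The sketch below is an assumption for illustration, not any provider's actual algorithm. It substitutes a per-instance throughput target for the latency target, and the name `next_capacity` and its parameters are hypothetical.

```python
import math


def next_capacity(
    request_rate: float,
    target_rate_per_instance: float,
    min_instances: int = 1,
    max_instances: int = 10,
) -> int:
    """Return the instance count for the next interval under a target-tracking policy."""
    if target_rate_per_instance <= 0:
        raise ValueError("target_rate_per_instance must be positive")
    # Enough instances that none exceeds the per-instance target.
    desired = math.ceil(request_rate / target_rate_per_instance)
    # Clamp to the policy bounds (the "policy" input of the scaling function).
    return max(min_instances, min(max_instances, desired))
```

The upper bound is where platform limits show up in practice: once `max_instances` is reached, further load raises latency instead of capacity.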

9. Cold Starts

One trade-off in many serverless systems is cold-start latency. If no active compute instance is currently serving a particular workload, the platform may need to initialize containers or runtimes before responding.

If warm request latency is $L_{\text{warm}}$ and cold-start overhead is $\Delta_{\text{cold}}$, then a cold invocation may experience:

$$L_{\text{cold}} = L_{\text{warm}} + \Delta_{\text{cold}}.$$

This is a key consideration for latency-sensitive ML inference.
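A small worked example shows why this matters. If a fraction p of requests hit a cold instance, the mean latency is the warm latency plus p times the cold-start overhead, while the affected requests still pay the full cold latency. The function names and the numbers in the usage note are illustrative assumptions, not measurements.

```python
def cold_latency_ms(warm_ms: float, cold_overhead_ms: float) -> float:
    """Latency of a single cold invocation: warm latency plus cold-start overhead."""
    return warm_ms + cold_overhead_ms


def expected_latency_ms(warm_ms: float, cold_overhead_ms: float, cold_fraction: float) -> float:
    """Mean latency when `cold_fraction` of requests incur the cold-start overhead."""
    if not 0.0 <= cold_fraction <= 1.0:
        raise ValueError("cold_fraction must be between 0 and 1")
    # (1 - p) * warm + p * (warm + overhead) simplifies to warm + p * overhead.
    return warm_ms + cold_fraction * cold_overhead_ms
```

With an assumed 50 ms warm latency, 2000 ms overhead, and 1% cold requests, the mean rises only from 50 ms to about 70 ms, yet one request in a hundred takes 2050 ms. Tail percentiles, not the mean, are therefore the better metric for evaluating cold-start impact.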

10. Cost Model

Serverless ML pricing typically depends on one or more of:

- training job duration

- instance class or accelerator type

- endpoint uptime or provisioned concurrency

- request count

- memory or compute allocation

- data storage and transfer

A simplified cost form for inference might be:

$$\text{Cost} = C_{\text{base}} + C_{\text{requests}} + C_{\text{compute-time}} + C_{\text{storage}}.$$

Serverless can lower cost for bursty workloads but may be less economical for steady, heavy, predictable workloads.
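The trade-off between bursty and steady workloads can be made concrete with the simplified cost form. In the sketch below all prices are made-up placeholders, and the two helper functions model hypothetical pricing modes: pay-per-use with no base charge, and an always-on endpoint billed by the hour.

```python
def inference_cost(
    base: float = 0.0,
    requests: int = 0,
    price_per_request: float = 0.0,
    compute_seconds: float = 0.0,
    price_per_compute_second: float = 0.0,
    storage: float = 0.0,
) -> float:
    """Simplified cost: base + request charges + compute-time charges + storage."""
    return (
        base
        + requests * price_per_request
        + compute_seconds * price_per_compute_second
        + storage
    )


def pay_per_use_cost(requests: int, seconds_per_request: float = 0.1,
                     price_per_compute_second: float = 0.0001) -> float:
    """Hypothetical pay-per-use mode: no base charge, billed on compute time only."""
    return inference_cost(
        compute_seconds=requests * seconds_per_request,
        price_per_compute_second=price_per_compute_second,
    )


def always_on_cost(hours: float = 720, price_per_hour: float = 0.10) -> float:
    """Hypothetical always-on mode: a fixed base charge regardless of traffic."""
    return inference_cost(base=hours * price_per_hour)
```

Under these placeholder prices, one million requests a month cost roughly 10 in pay-per-use mode against 72 for the always-on endpoint, while ten million requests cost roughly 100 and the always-on endpoint becomes cheaper. The crossover point depends entirely on real prices and request durations, so it should be computed from the provider's current pricing.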

11. AWS SageMaker as a Managed ML Platform

AWS SageMaker is a managed machine learning platform that supports notebook environments, training jobs, hyperparameter tuning, model hosting, pipelines, monitoring, and registry-style lifecycle workflows. It is designed to reduce the infrastructure burden associated with building and deploying ML models on AWS.

11.1 Training in SageMaker

A SageMaker-style training workflow typically specifies:

- training container or built-in algorithm

- data input channels

- instance type and count

- hyperparameters

- output model location

The service then provisions, runs, logs, stores, and tears down the underlying training infrastructure.

11.2 Hosting in SageMaker

SageMaker supports managed model hosting for real-time inference, batch transform workflows, and other serving patterns. Operationally, this means users can deploy model artifacts as endpoints without building a serving platform themselves.

11.3 Pipelines and Registry-Like Workflows

SageMaker also supports orchestration patterns for model training, evaluation, registration, and approval. This is important because serverless ML is most useful when deployment is integrated into broader MLOps workflows rather than being only a one-off endpoint creation mechanism.

12. Google AI Platform / Vertex-Style Managed ML

Google AI Platform, and its successor Vertex AI, provide analogous abstractions for model training, tuning, deployment, pipelines, and managed prediction on Google Cloud infrastructure.

The core idea is similar: users define ML workloads while the platform manages much of the underlying infrastructure, resource provisioning, orchestration, and service integration.

12.1 Managed Training

Managed training services let users submit training jobs with data references, code or container definitions, and hardware configurations such as CPU, GPU, or accelerator types.

12.2 Managed Prediction

Managed prediction services provide online or batch inference, autoscaling, traffic splitting, and monitoring integrations. This supports serverless or semi-serverless deployment workflows for models already packaged and validated.
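Traffic splitting is typically used to send a small share of requests to a new model version before full rollout. The sketch below shows one way such routing could work, using a hash of the request identifier so that the same request always maps to the same version. The function `choose_version` and the version names are hypothetical; managed platforms implement this internally and expose only the percentages.

```python
import hashlib


def choose_version(request_id: str, split: dict[str, int]) -> str:
    """Pick a model version for a request given percentage weights that sum to 100."""
    if any(percent < 0 for percent in split.values()) or sum(split.values()) != 100:
        raise ValueError("split percentages must be non-negative and sum to 100")
    # Map the request id to a stable bucket in [0, 100).
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100
    cumulative = 0
    for version, percent in split.items():
        cumulative += percent
        if bucket < cumulative:
            return version
    raise AssertionError("unreachable: percentages sum to 100")
```

With `split = {"v1": 90, "v2": 10}`, roughly one request in ten is routed to `v2`, and shifting the percentages gradually moves traffic to the new version.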

13. Managed Notebooks and Interactive Development

Many serverless ML platforms also provide managed notebook environments. While notebooks are not always “serverless” in the strict sense, they reduce user responsibility for environment setup, dependency installation, and workspace lifecycle management.

This shortens the path from experimentation to managed training or managed deployment.

14. Hyperparameter Tuning as a Managed Service

Hyperparameter optimization is another area where serverless ML platforms provide strong value. If candidate configurations are:

$$\lambda_1, \lambda_2, \dots, \lambda_K,$$

then tuning seeks:

$$\lambda^{*} = \arg\max_{\lambda} \text{Score}(\text{Train}(\lambda)).$$

Managed tuning services launch, monitor, compare, and terminate many trial jobs automatically, reducing orchestration burden for search-heavy workloads.
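Stripped of orchestration, the tuning objective is an argmax over trial scores. The sketch below runs trials sequentially over a fixed candidate list; `tune` and `train_and_score` are hypothetical names, and a managed service would typically run trials in parallel and may use smarter search strategies than exhaustive evaluation.

```python
from typing import Callable, Iterable, TypeVar

Config = TypeVar("Config")


def tune(
    candidates: Iterable[Config],
    train_and_score: Callable[[Config], float],
) -> tuple[Config, float]:
    """Evaluate every candidate configuration and return the best one with its score."""
    best_config = None
    best_score = float("-inf")
    evaluated = False
    for config in candidates:
        score = train_and_score(config)  # one trial: train, then score on validation data
        if not evaluated or score > best_score:
            best_config, best_score = config, score
        evaluated = True
    if not evaluated:
        raise ValueError("at least one candidate configuration is required")
    return best_config, best_score
```

As a toy usage, `tune([0.001, 0.01, 0.1, 1.0], lambda lr: -(lr - 0.1) ** 2)` selects `0.1`, the learning rate at which the made-up score peaks.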

15. Pipelines and Orchestration

Serverless ML becomes operationally powerful when combined with pipeline orchestration. A pipeline may define:

T1 → T2 → T3 → ... → Tn,

where tasks include preprocessing, training, evaluation, registration, and deployment.

Managed pipeline systems track dependencies, retries, metadata, and step outputs while reducing manual job coordination.
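The dependency tracking and retry behavior described above can be sketched with Python's standard-library `graphlib`. The `run_pipeline` function, the task names, and the retry policy are assumptions for illustration; real pipeline services also persist metadata and step outputs, which this sketch omits.

```python
from graphlib import TopologicalSorter
from typing import Callable


def run_pipeline(
    tasks: dict[str, Callable[[], None]],
    dependencies: dict[str, set[str]],
    max_retries: int = 1,
) -> list[str]:
    """Run tasks in dependency order, retrying each failed task up to `max_retries` times.

    `dependencies` maps each task name to the set of tasks that must finish first.
    """
    order = list(TopologicalSorter(dependencies).static_order())
    completed = []
    for name in order:
        for attempt in range(max_retries + 1):
            try:
                tasks[name]()
                break
            except Exception:
                if attempt == max_retries:
                    raise  # retries exhausted: fail the whole pipeline
        completed.append(name)
    return completed
```

For a linear chain such as preprocess, train, evaluate, register, deploy, each task lists only its immediate predecessor in `dependencies`, and the returned list records the execution order.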

16. Model Registry and Promotion

Mature serverless ML systems often include or integrate with model registry concepts such as:

- model versions

- candidate models

- approved models

- staging vs production lifecycle states

This matters because serverless ML is not only about execution abstraction. It is also about controlled lifecycle management for models over time.
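Controlled promotion can be thought of as a small state machine over model versions. The stage names and allowed transitions below are assumptions chosen for illustration; actual registries define their own states and approval rules.

```python
from dataclasses import dataclass

# Hypothetical lifecycle: which stage changes are permitted from each stage.
ALLOWED_TRANSITIONS = {
    "candidate": {"approved", "rejected"},
    "approved": {"staging"},
    "staging": {"production", "rejected"},
    "production": {"archived"},
}


@dataclass
class ModelVersion:
    """A registered model version with a lifecycle stage."""

    name: str
    version: int
    stage: str = "candidate"

    def promote(self, new_stage: str) -> None:
        """Move to `new_stage` if the lifecycle allows it, otherwise raise ValueError."""
        if new_stage not in ALLOWED_TRANSITIONS.get(self.stage, set()):
            raise ValueError(
                f"cannot move {self.name} v{self.version} from {self.stage} to {new_stage}"
            )
        self.stage = new_stage
```

Encoding the transitions explicitly means a candidate model cannot reach production without passing through approval and staging.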

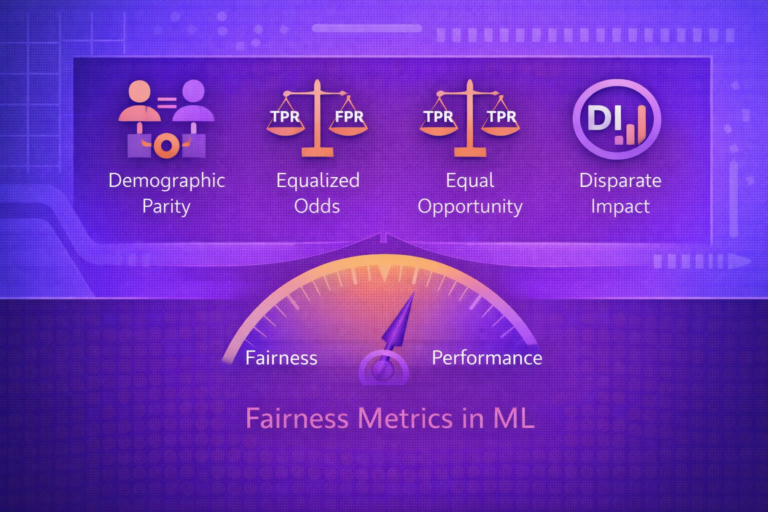

17. Monitoring and Drift Detection

Managed ML services often include hooks or built-in support for:

- request logging

- latency monitoring

- error rate monitoring

- input drift detection

- prediction distribution monitoring

If the training input distribution is $P_{\text{train}}(x)$ and the production distribution is $P_{\text{prod}}(x)$, monitoring aims to detect when:

$$P_{\text{train}}(x) \neq P_{\text{prod}}(x).$$
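In practice the two distributions are never exactly equal on finite samples, so monitoring usually computes a distance between them and alerts above a threshold. The sketch below uses the population stability index (PSI) on a single numeric feature as one possible distance; this choice, the equal-width binning, and the smoothing constant `eps` are assumptions, not the method of any specific platform.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a training sample (`expected`) and a production sample (`actual`)."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    low, high = min(expected), max(expected)
    width = (high - low) / bins
    if width == 0:
        width = 1.0  # all training values identical: everything falls in one bin

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for value in values:
            index = int((value - low) / width)
            counts[max(0, min(bins - 1, index))] += 1  # clamp out-of-range values
        # Floor each proportion at eps so the logarithm is always defined.
        return [max(count / len(values), eps) for count in counts]

    p_train = proportions(expected)
    p_prod = proportions(actual)
    return sum((q - p) * math.log(q / p) for p, q in zip(p_train, p_prod))
```

Identical samples give a PSI of zero, and the value grows as the production sample shifts away from the training sample. The alert threshold is a policy choice that should be calibrated on historical data.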

18. Serverless ML vs Self-Managed Kubernetes-Based ML

A useful practical comparison is between serverless or managed ML and self-managed container-orchestrated ML.

18.1 Serverless ML Advantages

- less infrastructure setup

- faster onboarding

- integrated managed tooling

- lower operational burden

18.2 Self-Managed Advantages

- greater customization

- deeper control over networking and runtime

- potential cost optimization at high steady-state scale

- more flexibility across specialized workloads

19. Serverless ML vs Function-as-a-Service

Serverless ML should not be confused with generic Function-as-a-Service (FaaS) patterns such as very short-lived stateless functions. ML workloads may require:

- large model files

- GPU access

- long-running training jobs

- stateful caches

- batch execution windows

Therefore, ML serverless platforms are usually more specialized and heavier-weight than generic short-function serverless runtimes.

20. Suitability for Different Workloads

Serverless ML works especially well for:

- teams wanting fast managed deployment

- bursty inference traffic

- moderate-scale retraining workflows

- organizations with limited platform-engineering capacity

- rapid prototyping and managed MLOps integration

It may be less ideal when workloads require:

- extreme infrastructure customization

- very steady heavy load where self-managed capacity is cheaper

- specialized networking or hardware scheduling constraints

- cross-cloud portability or provider-neutral infrastructure

21. Security and Governance

Managed platforms reduce some operational burden, but security responsibilities remain important. Teams must still manage:

- identity and access permissions

- artifact access control

- data governance

- encryption policies

- auditability

- compliance requirements

Serverless does not remove governance responsibility; it shifts some infrastructure operations to the provider.

22. Vendor Lock-In Considerations

One trade-off of serverless ML is tighter alignment with provider-specific APIs, artifacts, and workflow semantics. This can improve productivity but may increase migration difficulty later.

The more tightly a workflow depends on platform-native abstractions, the harder it may be to move the same system across clouds or to self-managed environments.

23. Observability and Debugging Trade-Offs

Because infrastructure is abstracted, users may gain convenience but lose some low-level visibility. Debugging may rely more heavily on platform logs, traces, and service-level metrics than on direct machine-level inspection.

This is often acceptable, but it can be limiting for advanced performance tuning or unusual failure scenarios.

24. Reliability and SLA Considerations

Managed services often improve baseline reliability because the provider handles many operational concerns. However, teams must still understand:

- service quotas

- regional availability

- autoscaling limits

- cold-start behavior

- latency variability

Reliability in serverless ML is therefore partly inherited and partly still application-specific.

25. Strengths of Serverless ML

- reduced infrastructure management

- faster time to deployment

- managed autoscaling and lifecycle control

- integrated training, tuning, registry, and serving features

- strong fit for teams prioritizing productivity and managed operations

26. Limitations of Serverless ML

- less infrastructure control

- possible cold-start latency

- pricing may be unfavorable for sustained heavy workloads

- vendor-specific abstractions can increase lock-in

- advanced customization may be harder than in self-managed environments

27. Best Practices

- Use serverless ML when speed, managed operations, and integrated tooling matter more than deep infrastructure control.

- Measure both latency and cost under realistic traffic patterns before committing to a serving mode.

- Design deployment workflows around model registry, evaluation gates, and rollback, not just endpoint creation.

- Use managed batch jobs for large offline scoring workloads when online endpoints are unnecessary.

- Monitor drift, latency, and failure behavior continuously after deployment.

- Be explicit about portability requirements before deeply adopting provider-specific workflow abstractions.

28. Conclusion

Serverless ML represents an important shift in how organizations build and operate machine learning systems. By abstracting away much of the underlying infrastructure for training, inference, scaling, and orchestration, managed platforms such as AWS SageMaker and Google AI Platform-style services allow teams to focus more directly on data, models, and business outcomes.

However, serverless ML is not magic infrastructure. It introduces its own trade-offs in cost dynamics, observability, customization, and vendor dependency. Understanding serverless ML therefore requires understanding both what the platform abstracts and what responsibilities remain with the ML team. When used appropriately, serverless ML can dramatically accelerate the path from experimentation to reliable, production-grade machine learning.