Bias in AI refers to systematic and undesirable patterns in data, models, decision rules, or deployment contexts that produce unfair, distorted, or harmful outcomes for individuals or groups. Identifying and mitigating bias is not a single algorithmic fix, but a full lifecycle discipline spanning problem framing, data collection, labeling, feature engineering, training, evaluation, deployment, and governance. This whitepaper explains the technical foundations of bias in AI and the principal methods used to detect and reduce it.

Abstract

AI systems are increasingly used in domains such as hiring, lending, healthcare, fraud detection, education, criminal justice, content moderation, and customer service. In these settings, biased systems can amplify historical inequities, produce disparate impact, degrade trust, and create regulatory or ethical risk. Bias can enter AI systems through many pathways: sampling bias, measurement bias, label bias, historical bias, proxy features, objective-function mismatch, annotation bias, feedback loops, and distribution shift. This paper explains the sources of bias, the distinction between fairness and accuracy, and the major quantitative fairness metrics used in practice, such as demographic parity, equalized odds, equal opportunity, calibration, and disparate impact. It also covers pre-processing, in-processing, and post-processing mitigation techniques, monitoring, governance, and the practical trade-offs that arise when fairness goals conflict.

1. Introduction

Let a machine learning system produce a prediction

ŷ = f(x),

where x is an input feature vector and ŷ is the model output. Let A denote a sensitive attribute such as group membership. Bias concerns arise when the behavior of f differs systematically across values of A in a way that is unjustified, harmful, or inconsistent with policy goals.

Bias in AI is therefore not merely a statistical oddity. It is a socio-technical problem in which mathematical systems interact with human institutions, data collection processes, and deployment incentives.

2. What Bias Means in AI

In broad terms, AI bias means that model behavior is systematically skewed in ways that disadvantage certain groups, misrepresent reality, or reinforce undesirable structural patterns. Bias may appear as:

- unequal error rates across groups

- underrepresentation of certain populations

- predictive instability for minority groups

- disproportionate negative decisions

- stereotyped or harmful outputs in generative systems

Not every statistical difference is automatically unfair, but important differences should be investigated rather than ignored.

3. Fairness vs Bias

Bias and fairness are related but not identical. Bias describes problematic skew or systematic distortion. Fairness concerns the normative criteria used to judge whether outcomes or decision processes are acceptable.

Because fairness is partly normative, technical fairness definitions are not universally interchangeable. Choosing a fairness criterion requires domain judgment, policy context, and stakeholder input.

4. Sources of Bias

Bias can enter an AI system at multiple stages:

- problem formulation bias

- sampling bias

- measurement bias

- label bias

- historical bias

- feature bias and proxy bias

- optimization bias

- deployment bias

- feedback-loop bias

5. Historical Bias

Historical bias occurs when the underlying world reflected in the data already contains structural inequality or unjust historical decisions. Even if the data is accurately measured, a model trained on it may reproduce those patterns.

If historical labels y reflect discriminatory past decisions, then fitting f(x) ≈ y can reproduce that discrimination instead of correcting it.

6. Sampling Bias

Sampling bias occurs when the training dataset is not representative of the intended deployment population. If the training distribution is $P_{\text{train}}(x, y)$ and the deployment distribution is $P_{\text{deploy}}(x, y)$, then a major source of bias may be:

$$P_{\text{train}}(x, y) \neq P_{\text{deploy}}(x, y).$$

Underrepresentation of certain groups can lead to systematically weaker model performance for them.

7. Measurement Bias

Measurement bias arises when features or labels do not measure the intended construct equally well across groups. For example, a proxy variable may have different meaning or reliability across populations.

If an observed feature x̃ is a noisy proxy for the true construct x, then:

x̃ = x + ε.

If the distribution of the noise term ε (for example its mean or variance) differs systematically by group, then measurement bias may follow.

8. Label Bias

Label bias occurs when the target variable itself is biased. A model may be mathematically accurate with respect to the label and still be socially harmful if the label encodes biased decisions or incomplete truth.

For example, an arrest label is not the same as a true crime rate, and a past hiring decision is not the same as true candidate quality.

9. Proxy Bias

Sensitive attributes are not always used explicitly, but related variables may function as proxies. If a feature z is strongly correlated with the sensitive attribute A, then even if A is removed, the model may still infer group membership indirectly.

This is one reason why “fairness through unawareness” is often insufficient.
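
A first-pass proxy check can be sketched as a correlation between each candidate feature and the sensitive attribute. The snippet below is a minimal illustration on made-up data; the feature names `z` and `u` and all values are hypothetical. A low correlation does not rule out a proxy, since combinations of features or nonlinear relationships can still reveal group membership.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant column carries no linear information
    return cov / sqrt(var_x * var_y)


# Hypothetical toy data: A is a binary sensitive attribute,
# z tracks A closely, u does not.
A = [0, 0, 0, 0, 1, 1, 1, 1]
z = [1, 2, 1, 2, 8, 9, 8, 9]
u = [1, 2, 1, 2, 1, 2, 1, 2]

print("corr(z, A) =", round(pearson(z, A), 3))
print("corr(u, A) =", round(pearson(u, A), 3))
```

In this toy data z would be flagged for review as a likely proxy, while u would not.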

10. Feedback Loops

Bias can be amplified after deployment through feedback loops. If a model influences which examples are later observed or labeled, future training data may become increasingly distorted.

For example, if a model flags certain groups more often for review, then more labels are collected from those groups, reinforcing the system’s prior assumptions.
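
The dynamic can be illustrated with a small deterministic simulation. This is a sketch under stated assumptions, not a model of any real system: two groups share the same true positive rate, and a hypothetical allocation policy sends a fixed majority of reviews (`favored_share`) to whichever group has more recorded positives so far. All names and numbers are illustrative.

```python
def simulate_review_feedback(rounds, reviews_per_round=100, true_rate=0.1,
                             favored_share=0.8, start=(11.0, 10.0)):
    """Return group a's share of recorded positives after each round.

    Both groups have the same underlying rate `true_rate`; only the
    allocation of reviews differs (hypothetical policy, see lead-in).
    """
    recorded = {"a": start[0], "b": start[1]}
    history = []
    for _ in range(rounds):
        favored = "a" if recorded["a"] >= recorded["b"] else "b"
        for group in recorded:
            share = favored_share if group == favored else 1.0 - favored_share
            # Expected new positives = reviews allocated * shared true rate.
            recorded[group] += reviews_per_round * share * true_rate
        history.append(recorded["a"] / (recorded["a"] + recorded["b"]))
    return history


shares = simulate_review_feedback(rounds=20)
print(f"initial share for group a: {11 / 21:.2f}")
print(f"share after 20 rounds:     {shares[-1]:.2f}")
```

Although the groups are identical by construction, the small initial difference in recorded positives grows because labels are only collected where reviews are sent.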

11. Problem Formulation Bias

Bias can originate even before model training if the prediction problem is framed poorly. Choosing the wrong target, the wrong objective, or the wrong optimization metric can create harmful behavior even if the algorithm is working exactly as designed.

Therefore, fairness begins with asking whether the prediction task itself is appropriate and legitimate.

12. Accuracy Does Not Guarantee Fairness

A model can have strong overall accuracy and still perform poorly for a minority group. Overall classification accuracy is:

Accuracy = (TP + TN)/(TP + TN + FP + FN).

But this aggregate metric can hide subgroup disparities if most data comes from one dominant group.
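
A short worked example shows how the aggregate can mask a weak subgroup. The confusion counts below are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def accuracy(tp, tn, fp, fn):
    """Accuracy = (TP + TN) / (TP + TN + FP + FN)."""
    return (tp + tn) / (tp + tn + fp + fn)


# Hypothetical confusion counts for a dominant and a minority group.
majority = {"tp": 450, "tn": 450, "fp": 25, "fn": 25}   # 950 examples
minority = {"tp": 15, "tn": 15, "fp": 10, "fn": 10}     # 50 examples
overall = {k: majority[k] + minority[k] for k in majority}

print("overall :", round(accuracy(**overall), 3))   # 930 / 1000
print("majority:", round(accuracy(**majority), 3))  # 900 / 950
print("minority:", round(accuracy(**minority), 3))  # 30 / 50
```

Here the overall accuracy of 0.93 sits close to the majority figure, while the minority group is classified correctly only 60% of the time.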

13. Group Fairness Metrics

Many technical fairness definitions compare behavior across groups defined by the sensitive attribute A.

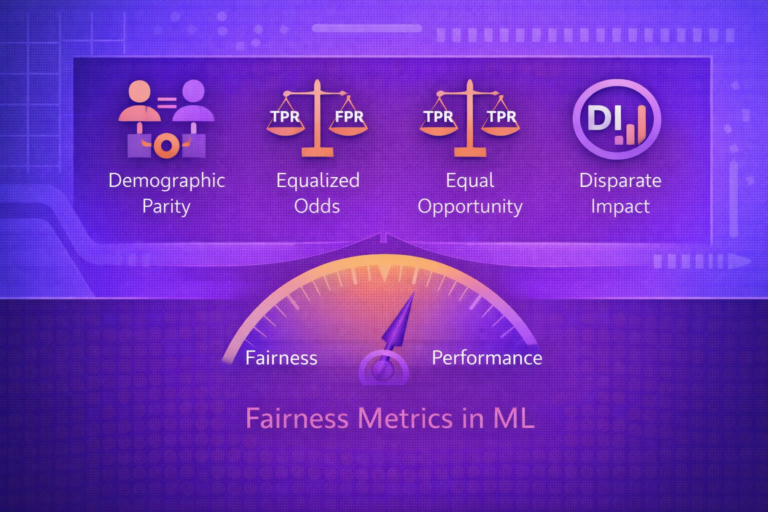

13.1 Demographic Parity

Demographic parity requires equal positive prediction rates across groups:

P(ŷ = 1 | A = a) = P(ŷ = 1 | A = b).

This criterion focuses on parity in outcomes, not parity in error rates or true label alignment.
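
As a minimal sketch, the two sides of the demographic parity condition can be estimated as per-group selection rates and compared. The function names and the toy predictions are assumptions made for illustration.

```python
from collections import defaultdict


def selection_rates(y_pred, groups):
    """Estimate P(y_hat = 1 | A = g) for every group g."""
    positives = defaultdict(int)
    totals = defaultdict(int)
    for pred, group in zip(y_pred, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(y_pred, groups):
    """Largest gap in selection rate between any two groups (0 = parity)."""
    rates = selection_rates(y_pred, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical predictions for two groups of four people each.
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

print(selection_rates(y_pred, groups))
print(demographic_parity_difference(y_pred, groups))
```

Note that the true labels never appear in this computation, which is exactly why the criterion says nothing about error rates.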

13.2 Disparate Impact Ratio

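A related quantity is the disparate impact ratio, usually computed with the protected or disadvantaged group a in the numerator and the reference group b in the denominator:

DI = P(ŷ = 1 | A = a) / P(ŷ = 1 | A = b).

Ratios substantially below 1 may indicate disproportionate adverse impact on group a. A common rule of thumb, taken from the “four-fifths rule” in US employment guidance, flags ratios below 0.8, though interpretation depends on domain and policy context.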
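
The ratio is simple to compute from selection counts. The sketch below uses hypothetical counts and treats ŷ = 1 as the favorable outcome; the 0.8 review threshold is an assumption borrowed from the four-fifths rule of thumb, not a universal standard.

```python
def disparate_impact_ratio(selected_a, total_a, selected_b, total_b):
    """DI = P(y_hat = 1 | A = a) / P(y_hat = 1 | A = b).

    Group a is the group of concern, group b the reference group.
    """
    rate_a = selected_a / total_a
    rate_b = selected_b / total_b
    if rate_b == 0:
        raise ValueError("reference group has no positive predictions")
    return rate_a / rate_b


REVIEW_THRESHOLD = 0.8  # assumed rule-of-thumb cutoff, adjust per policy

# Hypothetical counts: 30 of 100 selected in group a, 50 of 100 in group b.
di = disparate_impact_ratio(30, 100, 50, 100)
print("DI =", round(di, 2), "| needs review:", di < REVIEW_THRESHOLD)
```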

13.3 Equal Opportunity

Equal opportunity requires equal true positive rates across groups:

P(ŷ = 1 | Y = 1, A = a) = P(ŷ = 1 | Y = 1, A = b).

This focuses on giving qualified or truly positive cases comparable opportunity across groups.

13.4 Equalized Odds

Equalized odds requires both equal true positive rates and equal false positive rates across groups:

P(ŷ = 1 | Y = y, A = a) = P(ŷ = 1 | Y = y, A = b)

for both y = 0 and y = 1.
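
Both conditions can be checked by estimating P(ŷ = 1 | Y = y, A = g) from labeled data. The following is a minimal sketch on hypothetical data; the helper names are illustrative. The first returned gap is the equal opportunity gap (true positive rates), and the second is the additional false positive rate gap that equalized odds also requires to be small.

```python
def conditional_positive_rate(y_true, y_pred, groups, group, label):
    """Estimate P(y_hat = 1 | Y = label, A = group)."""
    preds = [p for t, p, g in zip(y_true, y_pred, groups)
             if g == group and t == label]
    if not preds:
        return float("nan")  # no examples with this label in this group
    return sum(preds) / len(preds)


def equalized_odds_gaps(y_true, y_pred, groups, a, b):
    """Return (TPR gap, FPR gap) between groups a and b."""
    tpr_gap = abs(conditional_positive_rate(y_true, y_pred, groups, a, 1)
                  - conditional_positive_rate(y_true, y_pred, groups, b, 1))
    fpr_gap = abs(conditional_positive_rate(y_true, y_pred, groups, a, 0)
                  - conditional_positive_rate(y_true, y_pred, groups, b, 0))
    return tpr_gap, fpr_gap


# Hypothetical data: eight examples per group, four positives each.
y_true = [1, 1, 1, 1, 0, 0, 0, 0] * 2
y_pred = [1, 1, 1, 0, 1, 0, 0, 0,   # group a: TPR 3/4, FPR 1/4
          1, 1, 0, 0, 1, 0, 0, 0]   # group b: TPR 2/4, FPR 1/4
groups = ["a"] * 8 + ["b"] * 8

tpr_gap, fpr_gap = equalized_odds_gaps(y_true, y_pred, groups, "a", "b")
print("TPR gap:", tpr_gap, "FPR gap:", fpr_gap)
```

In this toy case the false positive rates match, but the true positive rates do not, so neither equal opportunity nor equalized odds holds.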

13.5 Calibration Within Groups

Calibration requires that predicted scores mean the same thing across groups. If a score s represents an estimated probability, then calibration within groups requires:

P(Y = 1 | S = s, A = a) = s

and similarly for other groups.
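
In practice this condition is usually checked approximately by binning scores and comparing the mean score with the observed positive rate in each bin, separately for each group. The sketch below assumes scores in [0, 1] and equal-width bins; the function name and data are hypothetical.

```python
from collections import defaultdict


def calibration_table(scores, y_true, groups, n_bins=5):
    """Map (group, bin) -> (count, mean score, observed positive rate)."""
    cells = defaultdict(lambda: [0, 0.0, 0])  # count, score sum, positives
    for s, y, g in zip(scores, y_true, groups):
        b = min(int(s * n_bins), n_bins - 1)  # a score of 1.0 goes in the top bin
        cell = cells[(g, b)]
        cell[0] += 1
        cell[1] += s
        cell[2] += y
    return {key: (n, total / n, pos / n)
            for key, (n, total, pos) in cells.items()}


# Hypothetical data: everyone receives a score of 0.7, but 7 of 10 people
# in group a are truly positive versus 4 of 10 in group b.
scores = [0.7] * 20
y_true = [1] * 7 + [0] * 3 + [1] * 4 + [0] * 6
groups = ["a"] * 10 + ["b"] * 10

for (group, bin_index), (n, mean_score, observed) in sorted(
        calibration_table(scores, y_true, groups).items()):
    print(group, bin_index, n, round(mean_score, 2), round(observed, 2))
```

The same score corresponds to different observed rates in the two groups, so the score is calibrated for group a but overstates risk for group b.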

14. Tensions Between Fairness Criteria

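Different fairness metrics cannot always be satisfied simultaneously, especially when base rates differ across groups. For example, if

P(Y = 1 | A = a) ≠ P(Y = 1 | A = b),

then demographic parity, equalized odds, and calibration generally cannot all be satisfied at once. For instance, equal true and false positive rates combined with different base rates typically imply different positive prediction rates and different precision across groups.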
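
The arithmetic behind this tension can be checked directly. The sketch below fixes the same true and false positive rates for two groups (so equalized odds holds) and varies only the base rate; the specific numbers are hypothetical.

```python
def positive_rate(tpr, fpr, base_rate):
    """P(y_hat = 1) = TPR * P(Y = 1) + FPR * P(Y = 0)."""
    return tpr * base_rate + fpr * (1.0 - base_rate)


def ppv(tpr, fpr, base_rate):
    """P(Y = 1 | y_hat = 1), by Bayes' rule."""
    true_pos = tpr * base_rate
    false_pos = fpr * (1.0 - base_rate)
    return true_pos / (true_pos + false_pos)


TPR, FPR = 0.8, 0.2                 # identical for both groups
base_rates = {"a": 0.5, "b": 0.2}   # hypothetical, deliberately different

for group, p in base_rates.items():
    print(group,
          "positive rate:", round(positive_rate(TPR, FPR, p), 2),
          "PPV:", round(ppv(TPR, FPR, p), 2))
```

With these inputs group a has a positive rate of 0.50 and a PPV of 0.80, while group b has 0.32 and 0.50, so demographic parity and predictive parity both fail even though the error rates match.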

This means fairness is not a single checkbox metric. Trade-offs must be discussed explicitly.

15. Error Rate Analysis by Group

Bias analysis often begins by comparing group-specific error metrics:

FPR = FP / (FP + TN),

FNR = FN / (FN + TP),

TPR = TP / (TP + FN),

and

PPV = TP / (TP + FP).

If these vary significantly by group, the system may be imposing unequal burdens or unequal benefits.
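
These four rates can be computed per group from confusion counts. The following sketch uses hypothetical counts and returns NaN when a denominator is zero, which is one possible convention rather than a standard.

```python
def error_rates(tp, fp, tn, fn):
    """Return FPR, FNR, TPR, and PPV from confusion-matrix counts."""
    def ratio(numerator, denominator):
        return numerator / denominator if denominator else float("nan")

    return {
        "FPR": ratio(fp, fp + tn),
        "FNR": ratio(fn, fn + tp),
        "TPR": ratio(tp, tp + fn),
        "PPV": ratio(tp, tp + fp),
    }


# Hypothetical confusion counts per group.
counts_by_group = {
    "a": {"tp": 40, "fp": 10, "tn": 40, "fn": 10},
    "b": {"tp": 20, "fp": 5, "tn": 45, "fn": 30},
}

report = {g: error_rates(**c) for g, c in counts_by_group.items()}
for group, rates in report.items():
    print(group, {name: round(value, 2) for name, value in rates.items()})
```

In this example both groups have the same PPV (0.8), yet group b's false negative rate is three times that of group a, which illustrates why several rates should be compared rather than one.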

16. Bias in Generative AI

In generative systems, bias may appear as:

- stereotyped completions

- unequal toxicity or refusal behavior

- skewed representation of professions, identities, or cultures

- harmful image generation patterns

Bias detection in generative AI often requires benchmark prompts, output audits, adversarial prompting, and human evaluation rather than only classic classification metrics.

17. Identifying Bias in Practice

Practical bias identification usually combines:

- dataset audits

- representation analysis

- label quality review

- subgroup evaluation metrics

- counterfactual and sensitivity analysis

- human review and domain expertise

No single metric is sufficient by itself.

18. Dataset Auditing

Before model training, teams should inspect:

- group representation counts

- missingness by group

- label frequency by group

- feature quality by group

- known historical or operational distortions

If the group proportion P(A = a) is very small for some group a, the severe imbalance may signal representational risk.
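
Several of the checks listed above can be sketched in a few lines. The example below assumes records are dictionaries and uses hypothetical field names (`group`, `income`, `label`); it reports each group's share of the data, the missingness rate of one feature, and the positive label rate.

```python
from collections import defaultdict


def audit_by_group(rows, group_key, label_key, feature_key):
    """Per-group share of rows, feature missingness, and positive label rate."""
    stats = defaultdict(lambda: {"n": 0, "missing": 0, "positives": 0})
    for row in rows:
        s = stats[row[group_key]]
        s["n"] += 1
        s["missing"] += int(row.get(feature_key) is None)
        s["positives"] += int(row[label_key] == 1)
    total = sum(s["n"] for s in stats.values())
    return {
        g: {
            "share": s["n"] / total,
            "missing_rate": s["missing"] / s["n"],
            "positive_rate": s["positives"] / s["n"],
        }
        for g, s in stats.items()
    }


# Hypothetical dataset: 8 rows for group a, 2 rows for group b.
rows = (
    [{"group": "a", "income": 50, "label": 1}] * 4
    + [{"group": "a", "income": 40, "label": 0}] * 3
    + [{"group": "a", "income": None, "label": 0}]
    + [{"group": "b", "income": None, "label": 0},
       {"group": "b", "income": 30, "label": 0}]
)

audit = audit_by_group(rows, "group", "label", "income")
for group, summary in audit.items():
    print(group, summary)
```

Here group b makes up only 20% of the rows, has half of its income values missing, and has no positive labels at all, each of which would warrant investigation before training.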

19. Pre-Processing Mitigation

Pre-processing methods attempt to reduce bias before training. Common techniques include:

- re-sampling underrepresented groups

- reweighting examples

- repairing labels or features

- learning fair representations

- removing or transforming problematic proxies

19.1 Reweighting

One simple mitigation assigns example weights $w_i$ so that underrepresented or disadvantaged groups contribute more to the loss:

$$L = \sum_{i=1}^{n} w_i \, \ell(f(x_i), y_i).$$
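
One common way to choose the weights, shown here as an assumption rather than the only option, is inverse group frequency: each example in group g gets weight n / (k · n_g), where n is the dataset size, k the number of groups, and n_g the size of group g. Every group then carries the same total weight. The per-example losses below are hypothetical placeholders for ℓ(f(x_i), y_i).

```python
from collections import Counter


def inverse_frequency_weights(groups):
    """Weight n / (k * n_g) per example, so each group has equal total weight."""
    counts = Counter(groups)
    n, k = len(groups), len(counts)
    return [n / (k * counts[g]) for g in groups]


def weighted_loss(weights, losses):
    """L = sum_i w_i * loss_i."""
    return sum(w * loss for w, loss in zip(weights, losses))


# Hypothetical data: 8 examples from group a, 2 from group b, with the
# model currently doing much worse on group b.
groups = ["a"] * 8 + ["b"] * 2
losses = [0.1] * 8 + [0.9] * 2

weights = inverse_frequency_weights(groups)
print("weight for a:", weights[0], "weight for b:", weights[-1])
print("unweighted loss:", round(sum(losses), 2))
print("weighted loss:  ", round(weighted_loss(weights, losses), 2))
```

After reweighting, group b's errors account for most of the objective instead of being outweighed by the larger group.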

20. In-Processing Mitigation

In-processing methods modify the learning algorithm itself to incorporate fairness constraints or penalties during optimization.

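A general constrained form may be:

$$\min_{\theta} L(\theta) \quad \text{subject to} \quad \text{FairnessViolation}(\theta) \le \varepsilon.$$

Alternatively, one may optimize a penalized objective:

$$\min_{\theta} L(\theta) + \lambda \, \Omega_{\text{fair}}(\theta),$$

where $\Omega_{\text{fair}}$ penalizes unfairness and $\lambda \ge 0$ controls the strength of the penalty.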
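
The penalized form can be illustrated with a deliberately tiny model. In this sketch the model is a one-parameter logistic score σ(w·x), the task loss is mean cross-entropy, and the fairness penalty is the gap in mean predicted score between groups (a demographic-parity style penalty). A grid search stands in for a real optimizer. All of these choices, and the data, are assumptions made for illustration.

```python
from math import exp, log


def sigmoid(z):
    return 1.0 / (1.0 + exp(-z))


def task_loss(w, xs, ys):
    """Mean cross-entropy of the model p = sigmoid(w * x)."""
    total = 0.0
    for x, y in zip(xs, ys):
        p = sigmoid(w * x)
        total -= y * log(p) + (1 - y) * log(1.0 - p)
    return total / len(xs)


def parity_penalty(w, xs, groups):
    """Gap between the highest and lowest group mean predicted score."""
    means = []
    for g in sorted(set(groups)):
        scores = [sigmoid(w * x) for x, gi in zip(xs, groups) if gi == g]
        means.append(sum(scores) / len(scores))
    return max(means) - min(means)


def fit(xs, ys, groups, lam, grid):
    """Pick the w on the grid minimizing task_loss + lam * parity_penalty."""
    return min(grid, key=lambda w: task_loss(w, xs, ys)
               + lam * parity_penalty(w, xs, groups))


# Hypothetical data in which the feature x is correlated with the group.
xs = [2.0, 1.5, 1.0, 0.5, 0.5, -0.5, -1.0, -1.5]
ys = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a"] * 4 + ["b"] * 4
grid = [i * 0.25 for i in range(13)]  # candidate weights 0.0 .. 3.0

w_plain = fit(xs, ys, groups, lam=0.0, grid=grid)
w_fair = fit(xs, ys, groups, lam=5.0, grid=grid)
print("lambda=0:", w_plain, "gap:", round(parity_penalty(w_plain, xs, groups), 3))
print("lambda=5:", w_fair, "gap:", round(parity_penalty(w_fair, xs, groups), 3))
```

Raising λ can never increase the penalty at the selected solution, and can never decrease the task loss, which is the utility and fairness trade-off expressed in the objective.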

21. Adversarial Debiasing

One in-processing strategy is adversarial debiasing. The predictor tries to perform the task well, while an adversary tries to infer the sensitive attribute from the model representation or output. The predictor is trained to reduce that inferability.

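Conceptually, the predictor minimizes

PredictorLoss - λ · AdversaryLoss

over its own parameters, while the adversary is simultaneously trained to minimize AdversaryLoss.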

This encourages learned representations that are less predictive of sensitive group membership.

22. Post-Processing Mitigation

Post-processing methods adjust model outputs after training. Common approaches include:

- threshold adjustment by group

- score calibration by subgroup

- decision rule optimization under fairness constraints

For example, if the decision rule is:

$$\hat{y} = 1 \quad \text{if } s \ge \tau,$$

one may choose different thresholds $\tau_a$ and $\tau_b$ to better align error rates across groups.
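
A minimal sketch of group-specific thresholding follows. It picks, for each group, the highest observed score that reaches a target true positive rate; the target, the helper names, and the scores are hypothetical, and other error rates could be aligned in the same way. Whether group-specific thresholds are permissible depends on the legal and policy context.

```python
def tpr_at(threshold, scores, labels):
    """True positive rate of the rule y_hat = 1 if s >= threshold."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    return sum(s >= threshold for s in positives) / len(positives)


def threshold_for_tpr(scores, labels, target_tpr):
    """Highest observed score whose TPR is at least target_tpr."""
    for t in sorted(set(scores), reverse=True):
        if tpr_at(t, scores, labels) >= target_tpr:
            return t
    return min(scores)


# Hypothetical scores and labels for two groups.
scores_a = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3]
labels_a = [1, 1, 1, 1, 0, 0]
scores_b = [0.65, 0.55, 0.5, 0.35, 0.3, 0.2]
labels_b = [1, 1, 0, 0, 0, 0]

shared = 0.6
print("shared threshold TPRs:",
      tpr_at(shared, scores_a, labels_a), tpr_at(shared, scores_b, labels_b))

tau_a = threshold_for_tpr(scores_a, labels_a, target_tpr=1.0)
tau_b = threshold_for_tpr(scores_b, labels_b, target_tpr=1.0)
print("group thresholds:", tau_a, tau_b)
```

With a shared threshold of 0.6 the true positive rates are 1.0 and 0.5; with thresholds 0.6 and 0.55 they are equal.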

23. Counterfactual Fairness Idea

A counterfactual view asks whether a decision would remain the same if the sensitive attribute were changed while all else relevant stayed comparable. Conceptually, a predictor is counterfactually fair if:

f(X, A = a) = f(X, A = b)

under an appropriate counterfactual model of the world.

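This is conceptually powerful but often difficult to implement because it requires strong causal assumptions, including assumptions about how features that depend on A would themselves change under the counterfactual.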

24. Removing Sensitive Attributes Is Not Enough

Simply removing a sensitive variable does not guarantee fairness. If other variables are correlated with it, the model may reconstruct similar group distinctions. Therefore, fairness requires deeper analysis than variable omission alone.

25. Monitoring Bias After Deployment

Bias mitigation is not finished at training time. Production monitoring should track group-specific behavior over time. If deployment distribution changes from the training distribution, fairness properties may also change.

If the production metric for group $g$ at time $t$ is $M_g(t)$, then monitoring should detect when $M_g(t)$ diverges materially across groups or from training-time expectations.
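
One simple way to operationalize this check is to compare the between-group gap in each period with the gap measured at training time and raise an alert when the increase exceeds a tolerance. The sketch below is an assumption-laden illustration: the metric (weekly false positive rate), the baseline gap, and the tolerance are all hypothetical values a team would need to set for its own context.

```python
def fairness_alerts(metric_by_period, baseline_gap, tolerance):
    """Return (period, gap) pairs where the group gap exceeds baseline + tolerance.

    metric_by_period maps a period label to {group: metric value}.
    """
    alerts = []
    for period in sorted(metric_by_period):
        values = list(metric_by_period[period].values())
        gap = max(values) - min(values)
        if gap - baseline_gap > tolerance:
            alerts.append((period, gap))
    return alerts


# Hypothetical weekly false positive rates per group.
weekly_fpr = {
    1: {"a": 0.10, "b": 0.12},
    2: {"a": 0.10, "b": 0.13},
    3: {"a": 0.09, "b": 0.19},
}

alerts = fairness_alerts(weekly_fpr, baseline_gap=0.02, tolerance=0.05)
for week, gap in alerts:
    print(f"week {week}: group gap {gap:.2f} exceeds tolerance")
```

Only week 3 triggers an alert here, because the gap has grown from roughly 0.02 at training time to about 0.10.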

26. Fairness and Business Objectives

Fairness must be aligned with the real use case. A model that optimizes only overall profit, throughput, or raw accuracy may create unacceptable harm. Fairness-aware AI therefore often requires multi-objective reasoning that balances utility and equity.

A general trade-off formulation may look like:

Objective = Utility - λ · Harm

or

Objective = Performance - λ · FairnessViolation.

27. Documentation and Governance

Effective bias mitigation requires documentation such as:

- data provenance

- intended use and excluded use

- known limitations

- subgroup evaluation results

- mitigation decisions and trade-offs

- monitoring and escalation plans

Governance is necessary because fairness decisions are not purely technical; they often carry legal and ethical consequences.

28. Human Oversight

In high-stakes systems, human review may be necessary for:

- appeals and contestability

- borderline cases

- fairness investigations

- deployment approval

Human oversight is not a substitute for technical fairness work, but it is often an essential complement.

29. Common Pitfalls

- treating fairness as only a post-training metric problem

- assuming removing sensitive attributes solves bias

- evaluating only aggregate accuracy

- ignoring label bias and problem formulation bias

- using one fairness metric without considering trade-offs

- failing to monitor fairness after deployment

30. Strengths of a Bias-Aware AI Process

- improves trust and accountability

- reduces risk of harmful disparities

- supports regulatory and governance readiness

- reveals hidden weaknesses in datasets and models

- improves robustness for underrepresented populations

31. Limitations and Trade-Offs

- fairness goals may conflict with each other

- some bias cannot be solved purely algorithmically

- sensitive attribute access may be limited by policy or law

- mitigation can reduce some kinds of performance

- deployment context can reintroduce bias even after mitigation

32. Best Practices

- Start bias analysis at problem formulation, not only after training.

- Audit data representation, label quality, and feature proxies before modeling.

- Evaluate models by subgroup, not just in aggregate.

- Select fairness metrics that fit the domain and decision stakes.

- Use pre-processing, in-processing, and post-processing tools as complementary options.

- Document trade-offs and governance decisions explicitly.

- Monitor fairness continuously after deployment.

33. Conclusion

Identifying and mitigating bias in AI is one of the central challenges in trustworthy machine learning because model behavior is shaped by data, labels, objectives, and deployment context—not by algorithms alone. Bias can emerge from historical inequity, measurement error, underrepresentation, proxy variables, or feedback loops, and it can persist even when a model appears statistically accurate overall.

A serious approach to bias mitigation therefore requires end-to-end discipline: careful problem framing, dataset auditing, subgroup evaluation, fairness-aware optimization, post-processing where appropriate, documentation, governance, and post-deployment monitoring. The goal is not to eliminate all social disagreement through a single formula, but to build AI systems whose behavior is more transparent, more equitable, and more accountable in the contexts where they are used.