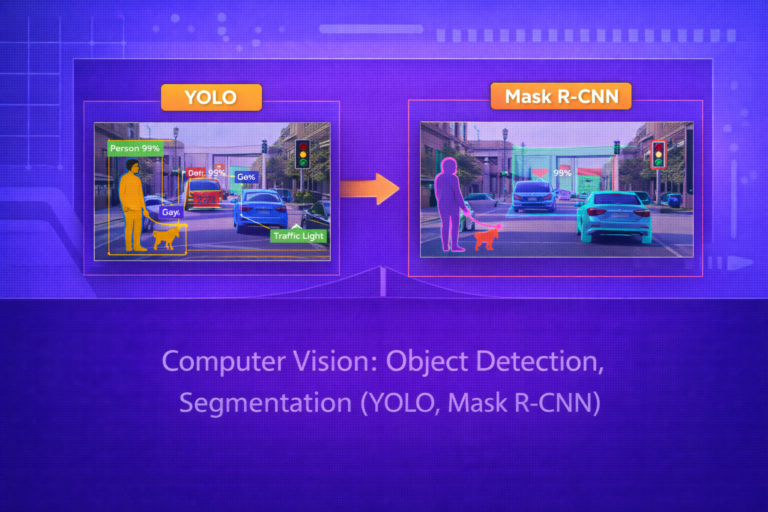

Computer Vision: Object Detection, Segmentation (YOLO, Mask R-CNN)

Object detection and segmentation are core computer vision tasks that go beyond image classification: they ask not only what is in an image but also where it is and, in the case of segmentation, which pixels belong to each object or class. This…