Responsible AI is the discipline of designing, building, evaluating, deploying, and governing AI systems so that they are safe, fair, reliable, transparent, privacy-aware, secure, accountable, and aligned with human values and legitimate institutional goals. Responsible AI is not a single feature or compliance checkbox. It is an end-to-end engineering, governance, and risk management practice embedded throughout the AI lifecycle.

Abstract

As AI systems increasingly influence decisions in finance, healthcare, employment, education, public services, enterprise automation, search, recommendation, and generative interfaces, the need for responsible design and operation has become central to trustworthy deployment. AI systems can fail in many ways: biased outputs, privacy leakage, unsafe recommendations, hallucinations, brittle behavior under distribution shift, opaque decision logic, misuse by downstream actors, and weak accountability when incidents occur. Responsible AI practices aim to reduce these risks through structured processes that span problem framing, data governance, model development, evaluation, documentation, deployment controls, monitoring, human oversight, and organizational governance. This paper explains the technical foundations and operational components of responsible AI practices, including fairness, transparency, explainability, privacy, safety, robustness, security, accountability, human-in-the-loop design, lifecycle monitoring, incident response, and policy alignment.

1. Introduction

Let an AI system be represented as:

S = (D, φ, M, P, U, G),

where:

- D is the data and data governance context

- φ is the transformation and feature pipeline

- M is the model or policy

- P is the deployment process and product context

- U is the user and usage environment

- G is the governance and oversight structure

Responsible AI practices concern the behavior and control of the whole system S, not just the model M in isolation.

2. Why Responsible AI Matters

AI systems do not operate in a vacuum. They influence people, workflows, institutions, and incentives. A technically accurate model can still be irresponsible if it:

- creates discriminatory impact

- leaks sensitive information

- produces unsafe or misleading outputs

- cannot be explained or contested in high-stakes use

- fails unpredictably under real deployment conditions

- lacks clear ownership when incidents occur

Responsible AI matters because AI systems are socio-technical systems whose risks emerge from interactions among data, models, users, product design, and organizational processes.

3. Core Principles of Responsible AI

Although terminology varies across organizations, responsible AI usually includes principles such as:

- fairness

- reliability and robustness

- safety

- privacy and data governance

- transparency and explainability

- security and misuse resistance

- accountability and human oversight

These are not independent boxes. They often interact and must be balanced together.

4. Responsible AI as Lifecycle Practice

Responsible AI must be applied across the full lifecycle:

- problem selection

- data collection and labeling

- training and evaluation

- deployment design

- user experience and controls

- monitoring and incident response

- retirement and archival decisions

A common failure mode is treating responsibility as only a post-training audit. In practice, many risks are locked in much earlier.

5. Problem Framing and Use-Case Legitimacy

Responsible AI begins by asking whether a problem should be automated at all. Some predictive tasks may be technically possible but normatively inappropriate. A useful question is whether the target variable and decision context are legitimate, contestable, and aligned with human values and institutional responsibilities.

If a model optimizes an objective J(M), then responsible design requires asking whether J(M) itself reflects the right goal.

6. Risk-Based AI Classification

Not all AI systems carry the same risk. Responsible AI programs often classify use cases by impact level. High-stakes systems typically include those affecting:

- health and safety

- employment

- credit and financial access

- education opportunity

- housing

- legal or government decisions

- critical infrastructure or public trust

The higher the risk, the stronger the requirements for documentation, testing, oversight, and approval.

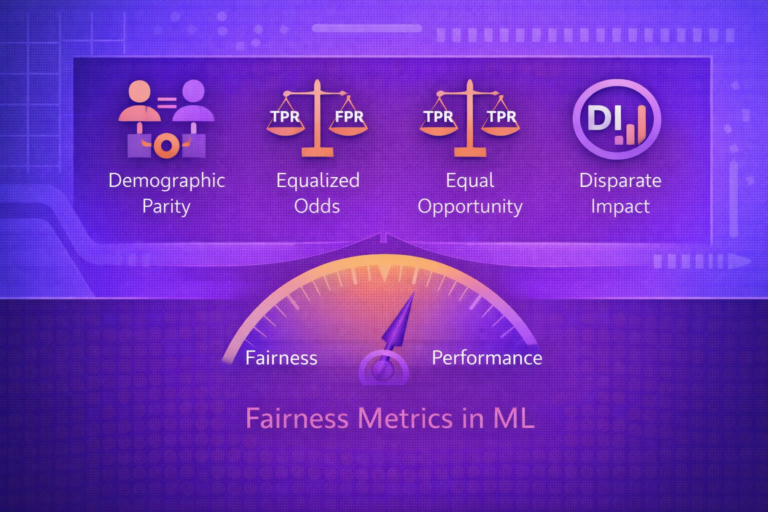

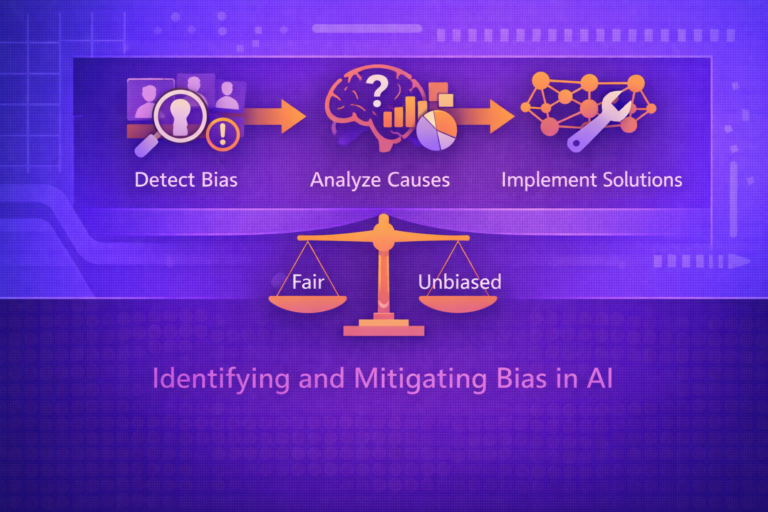

7. Fairness as a Responsible AI Component

Fairness concerns whether system behavior differs across groups in problematic ways. If A is a sensitive attribute and ŷ is a decision outcome, one may compare rates such as:

P(ŷ = 1 | A = a)

and

P(ŷ = 1 | A = b).

Responsible AI practice requires choosing fairness criteria appropriate to the domain and evaluating group-specific outcomes, not only overall accuracy.
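The group-rate comparison above can be sketched in a few lines of Python. This is a minimal illustration, assuming binary decisions and a single categorical sensitive attribute; the function names `selection_rates` and `parity_gap` and the toy data are hypothetical, and the gap between selection rates is only one of several fairness criteria a team might choose.

```python
from collections import defaultdict

def selection_rates(decisions, groups):
    """Estimate P(y_hat = 1 | A = g) for every group g from paired lists."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for y_hat, g in zip(decisions, groups):
        totals[g] += 1
        positives[g] += int(y_hat == 1)
    return {g: positives[g] / totals[g] for g in totals}

def parity_gap(rates):
    """Difference between the highest and lowest group selection rate."""
    return max(rates.values()) - min(rates.values())

# Toy data for illustration only: 1 means a favorable decision.
decisions = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(decisions, groups)  # {'a': 0.75, 'b': 0.25}
gap = parity_gap(rates)                     # 0.5
```

A large gap is a signal for review rather than proof of unfairness; whether it is acceptable depends on the domain and on which fairness criterion has been chosen.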

8. Data Governance and Data Quality

Responsible AI depends heavily on responsible data management. Data governance includes:

- data provenance

- consent and lawful use

- retention limits

- quality controls

- access restrictions

- lineage and auditability

If data quality is denoted by q(D), then trustworthy deployment often requires maintaining:

q(D) ≥ τ,

where τ is a minimum acceptable threshold for completeness, validity, and reliability.
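As a rough sketch of such a gate, the example below treats q(D) as field completeness only, which is a simplifying assumption: validity and reliability would need their own checks. The helper names `completeness` and `passes_quality_gate`, the field names, and the threshold values are all hypothetical.

```python
def completeness(records, required_fields):
    """Fraction of required field slots that are present and not None."""
    if not records or not required_fields:
        return 0.0
    filled = sum(
        1
        for record in records
        for field in required_fields
        if record.get(field) is not None
    )
    return filled / (len(records) * len(required_fields))

def passes_quality_gate(records, required_fields, tau):
    """Check q(D) >= tau, using completeness as a stand-in for q."""
    return completeness(records, required_fields) >= tau

# Toy records: one missing value and one missing key.
records = [
    {"age": 34, "income": 52000},
    {"age": None, "income": 61000},
    {"age": 45},
]
fields = ["age", "income"]

q = completeness(records, fields)                       # 4 of 6 slots filled
strict = passes_quality_gate(records, fields, tau=0.9)  # False
lenient = passes_quality_gate(records, fields, tau=0.6) # True
```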

9. Privacy-Aware AI Practices

Responsible AI requires protecting personal and sensitive data. Privacy-aware practices include:

- data minimization

- purpose limitation

- anonymization or pseudonymization where appropriate

- access control and encryption

- privacy-preserving analytics or learning techniques

In some settings, privacy protection is framed as limiting how much a model can reveal about any single individual or sensitive training record.
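Two of the practices listed above, data minimization and pseudonymization, can be illustrated with the Python standard library. This is a sketch under stated assumptions: the record layout, the helper names `minimize` and `pseudonymize`, and the hard-coded key are hypothetical, and in practice the key would be loaded from a managed secret store. Keyed hashing yields pseudonyms, not anonymity, because anyone holding the key can recompute the mapping.

```python
import hashlib
import hmac

def minimize(record, allowed_fields):
    """Keep only the fields that the stated purpose actually needs."""
    return {k: v for k, v in record.items() if k in allowed_fields}

def pseudonymize(identifier, secret_key):
    """Replace an identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(
        secret_key, identifier.encode("utf-8"), hashlib.sha256
    ).hexdigest()

# Illustrative values only; never hard-code a real key.
key = b"example-key-loaded-from-a-secret-store"
record = {"email": "user@example.com", "age": 41, "notes": "free text"}

safe = minimize(record, {"email", "age"})          # drops "notes"
safe["email"] = pseudonymize(safe["email"], key)   # stable pseudonym
```

Because the digest is deterministic for a given key, records belonging to the same person can still be joined without exposing the raw identifier.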

10. Transparency

Transparency means that stakeholders can understand what the system is, what it does, where it is used, what data it relies on, and what limitations it has. Transparency is broader than explainability. It includes system-level disclosure and documentation, not only local model reasoning.

11. Explainability

Explainability concerns how model behavior can be interpreted or communicated. Depending on the context, teams may need:

- global explanation of model behavior

- local explanations for specific predictions

- counterfactual explanations

- feature attribution analysis

If a model output is ŷ = f(x), an explanation mechanism may seek to approximate how changes in components of x affect ŷ.
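One simple way to approximate how changes in components of x affect ŷ is a finite-difference sensitivity estimate. The sketch below assumes a numeric feature vector and a model that is smooth near x; the function `sensitivity` and the linear `toy_model` are hypothetical stand-ins, and this is not how attribution libraries such as SHAP or LIME work internally.

```python
def sensitivity(f, x, eps=1e-4):
    """Central finite-difference estimate of d f / d x_i at the point x."""
    estimates = []
    for i in range(len(x)):
        up = list(x)
        down = list(x)
        up[i] += eps
        down[i] -= eps
        estimates.append((f(up) - f(down)) / (2 * eps))
    return estimates

def toy_model(x):
    """Stand-in for f(x): a linear score that ignores the third feature."""
    return 2.0 * x[0] - 0.5 * x[1] + 0.0 * x[2]

local_effects = sensitivity(toy_model, [1.0, 2.0, 3.0])
# Approximately [2.0, -0.5, 0.0] for this linear toy model.
```

This gives a local explanation only: for a nonlinear model the estimates change from one input to the next, and they say nothing about feature interactions.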

12. Reliability and Robustness

A responsible AI system should behave consistently under realistic variation in input conditions. Robustness concerns whether the model remains dependable when inputs are noisy, incomplete, shifted, or adversarially perturbed.

If the deployment distribution is P_prod(x) and the training distribution is P_train(x), responsible practice must consider what happens when:

P_prod(x) ≠ P_train(x).
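A mismatch between training and production distributions can be flagged for a single numeric feature with a binned divergence such as the population stability index (PSI). The sketch below is one possible check, not a method prescribed by this paper; the bin edges, the probability floor, and the sample values are assumptions chosen for illustration.

```python
import math

def bin_shares(values, edges, floor=1e-6):
    """Share of values in each bin; the floor avoids log(0) for empty bins."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        index = sum(v >= e for e in edges)  # number of edges at or below v
        counts[index] += 1
    return [max(c / len(values), floor) for c in counts]

def psi(train_values, prod_values, edges):
    """Population stability index between two samples of one feature."""
    p = bin_shares(train_values, edges)
    q = bin_shares(prod_values, edges)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))

edges = [0.25, 0.5, 0.75]
train = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
prod = [0.5, 0.6, 0.7, 0.8, 0.9, 0.9, 0.95, 0.99]

no_shift = psi(train, train, edges)  # 0.0 for identical samples
shifted = psi(train, prod, edges)    # positive when the bins diverge
```

What PSI value should trigger action is a policy decision, and a per-feature check like this will not detect shifts that only appear in the joint distribution.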

13. Safety

Safety concerns whether the system can generate harmful outputs, induce dangerous actions, or fail in ways that produce unacceptable consequences. Safety is especially important in domains involving:

- medical advice

- industrial control

- autonomous systems

- public information and decision support

- cybersecurity guidance

Responsible AI practices should define unacceptable failure modes and establish safeguards to reduce their likelihood and impact.

14. Security and Misuse Resistance

AI systems can be attacked or misused. Responsible practice includes defenses against:

- prompt injection

- data poisoning

- model extraction

- adversarial examples

- credential abuse

- unsafe automation of harmful tasks

Security is therefore part of responsible AI, not a separate concern.

15. Human-in-the-Loop Design

Many responsible AI systems require meaningful human oversight. This may include:

- review of high-risk outputs

- approval gates before action execution

- appeal or contest mechanisms

- override capabilities

- escalation to human experts in uncertain cases

Human oversight is valuable only if humans have the information, authority, and workflow capacity to intervene effectively.

16. Uncertainty Awareness

Responsible systems should acknowledge uncertainty rather than always presenting outputs as certain. If a model output is a score or probability s, users and downstream systems should understand whether s represents confidence, a calibrated probability, or merely a ranking signal.

In some contexts, responsible design requires abstention or escalation when uncertainty exceeds a threshold:

if Uncertainty(x) > τ, then defer.
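The deferral rule can be sketched as follows, using the entropy of the predicted class probabilities as Uncertainty(x). That choice is an assumption made for illustration, and it is informative only if the probabilities are reasonably calibrated; the function names and the threshold value are hypothetical.

```python
import math

def entropy(probs):
    """Shannon entropy in nats of a predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def decide_or_defer(probs, tau):
    """Return the index of the top class, or 'defer' if entropy exceeds tau."""
    if entropy(probs) > tau:
        return "defer"
    return max(range(len(probs)), key=lambda i: probs[i])

confident = decide_or_defer([0.98, 0.02], tau=0.5)  # 0
uncertain = decide_or_defer([0.55, 0.45], tau=0.5)  # 'defer'
```

Deferred cases need a destination, such as a human review queue, for the rule to have any practical effect.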

17. Documentation Practices

Responsible AI relies heavily on documentation such as:

- dataset documentation

- model documentation

- intended and prohibited use cases

- evaluation results by subgroup

- known limitations and risks

- incident response contacts and processes

Documentation creates accountability and makes operational decisions auditable.

18. Evaluation Beyond Accuracy

Responsible AI evaluation should extend beyond standard predictive metrics. In classification settings, teams may compute:

Accuracy = (TP + TN)/(TP + TN + FP + FN),

Precision = TP/(TP + FP),

Recall = TP/(TP + FN),

and

F1 = 2(Precision × Recall)/(Precision + Recall).

But responsible evaluation should also include fairness, calibration, robustness, latency, privacy, and failure-mode testing where relevant.
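As a worked example of the four formulas, the sketch below computes them from confusion-matrix counts. The counts are made up for illustration, and returning 0.0 when a denominator is zero is a convention chosen for this sketch rather than part of the definitions.

```python
def classification_metrics(tp, tn, fp, fn):
    """Accuracy, precision, recall, and F1 from confusion-matrix counts."""
    def ratio(numerator, denominator):
        # Convention for this sketch: undefined ratios are reported as 0.0.
        return numerator / denominator if denominator else 0.0

    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)
    return {
        "accuracy": ratio(tp + tn, tp + tn + fp + fn),
        "precision": precision,
        "recall": recall,
        "f1": ratio(2 * precision * recall, precision + recall),
    }

# Illustrative counts: 100 examples in total.
metrics = classification_metrics(tp=40, tn=45, fp=5, fn=10)
# accuracy = 85/100, precision = 40/45, recall = 40/50, f1 = 80/95
```

Running the same function once per subgroup, rather than once over the pooled data, is a simple way to surface group-specific gaps that an overall score hides.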

19. Red Teaming and Adversarial Evaluation

Responsible AI practice often includes structured adversarial testing, or red teaming, to identify unsafe, biased, deceptive, or brittle behavior before and after deployment. This is particularly important for generative and interactive systems where many harmful failure modes are not captured by ordinary benchmark datasets.

20. Deployment Controls

Responsible deployment often includes operational safeguards such as:

- feature flags

- gradual rollouts

- canary release patterns

- rate limits

- restricted access

- fallback behavior

These controls reduce the blast radius of failures and support safer experimentation.
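Two of these safeguards, a percentage-based gradual rollout and fallback behavior, can be sketched with the standard library. The function names, the feature name, and the bucketing scheme are hypothetical; real deployments usually rely on a feature-flag service rather than hand-rolled hashing.

```python
import hashlib

def in_rollout(user_id, feature, percent):
    """Deterministically place a user inside or outside a rollout percentage."""
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999
    return bucket < percent * 100

def predict_with_fallback(model_fn, fallback_fn, x):
    """Use the new model, but fall back to a safe default if it raises."""
    try:
        return model_fn(x)
    except Exception:
        return fallback_fn(x)

def failing_model(x):
    raise RuntimeError("model backend unavailable")

def rule_based_default(x):
    return "needs_manual_review"

nobody = in_rollout("user-123", "new_ranker", percent=0)      # False
everyone = in_rollout("user-123", "new_ranker", percent=100)  # True
result = predict_with_fallback(failing_model, rule_based_default, {"q": 1})
```

Hashing the feature name together with the user ID keeps each user's assignment stable across requests while making assignments for different features independent of one another.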

21. Monitoring and Post-Deployment Governance

Responsible AI does not end at launch. Production monitoring should track:

- data drift

- prediction drift

- group disparities

- safety incidents

- misuse attempts

- latency and reliability problems

- business and user-impact metrics

If a monitored metric is m(t) (written in lowercase to distinguish it from the model M), then governance should define what to do when m(t) exceeds or falls below policy thresholds.
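A threshold policy of this kind can be written down as a small table that maps each monitored metric to its bounds and to the action taken on a breach. The metric names, bounds, and actions below are hypothetical examples, not recommended values.

```python
def evaluate_metric(value, lower=None, upper=None):
    """Classify one observation of a metric as 'below', 'above', or 'ok'."""
    if lower is not None and value < lower:
        return "below"
    if upper is not None and value > upper:
        return "above"
    return "ok"

# Hypothetical policy: metric name -> (lower bound, upper bound, action).
POLICY = {
    "selection_rate_gap": (None, 0.10, "open fairness review"),
    "recall": (0.80, None, "page on-call owner"),
}

def triggered_actions(observations, policy=POLICY):
    """List the (metric, action) pairs whose thresholds were breached."""
    actions = []
    for name, value in observations.items():
        lower, upper, action = policy[name]
        if evaluate_metric(value, lower, upper) != "ok":
            actions.append((name, action))
    return actions

alerts = triggered_actions({"selection_rate_gap": 0.14, "recall": 0.86})
# [('selection_rate_gap', 'open fairness review')]
```

Keeping the policy as data rather than scattered conditionals makes the thresholds reviewable and auditable alongside the rest of the system documentation.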

22. Incident Response

Responsible AI programs should define incident response processes for cases such as:

- harmful model outputs

- data leakage

- unexpected bias patterns

- unsafe automation

- security compromise

Incident handling should include logging, triage, rollback capability, stakeholder notification, and post-incident analysis.

23. Accountability and Ownership

Responsible AI requires clear ownership. Teams should know who is accountable for:

- data quality

- model performance

- fairness review

- privacy review

- deployment approval

- incident response

Without defined ownership, responsibility becomes diffuse and failures become harder to manage.

24. Multi-Disciplinary Review

Responsible AI usually requires collaboration across:

- machine learning engineers

- data scientists

- product teams

- security and privacy specialists

- domain experts

- legal or policy stakeholders

- risk and compliance teams

This is necessary because many AI risks are cross-functional rather than purely technical.

25. Responsible AI for Generative Systems

Generative AI introduces additional concerns such as:

- hallucinations

- harmful content generation

- style imitation and IP concerns

- prompt injection and tool misuse

- inconsistent refusal behavior

- fabricated citations or facts

Responsible AI practices for generative systems often require layered safeguards, prompt and tool controls, output filtering, red teaming, and user-facing disclosure of limitations.

26. Responsible AI Metrics and Thresholds

Organizations often operationalize responsibility with measurable thresholds. A deployment gate may require something like:

RiskScore(S) ≤ τ,

where τ is the acceptable risk threshold for a given use class.

Risk scoring may combine fairness gaps, safety incidents, performance instability, privacy exposure, and business criticality.
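One possible form of RiskScore(S) is a weighted average of component scores, each normalized to the range 0 to 1. The sketch below assumes that form; the component names, weights, and per-class thresholds are hypothetical, and a real program might instead use a maximum, a rubric, or a qualitative review.

```python
def risk_score(components, weights):
    """Weighted average of component risk scores, each assumed to be in [0, 1]."""
    total_weight = sum(weights.values())
    return sum(weights[k] * components[k] for k in weights) / total_weight

def passes_gate(score, use_class, thresholds):
    """Check RiskScore(S) <= tau, where tau depends on the use class."""
    return score <= thresholds[use_class]

# Illustrative inputs only.
components = {
    "fairness_gap": 0.2,
    "safety_incidents": 0.1,
    "instability": 0.3,
    "privacy_exposure": 0.0,
    "criticality": 0.8,
}
weights = {
    "fairness_gap": 2,
    "safety_incidents": 3,
    "instability": 1,
    "privacy_exposure": 2,
    "criticality": 2,
}
thresholds = {"low_stakes": 0.5, "high_stakes": 0.2}

score = risk_score(components, weights)                    # about 0.26
ok_low = passes_gate(score, "low_stakes", thresholds)      # True
ok_high = passes_gate(score, "high_stakes", thresholds)    # False
```

A single aggregate can mask one severe component, so some teams also apply per-component limits before the combined gate.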

27. Trade-Offs in Responsible AI

Responsible AI frequently involves trade-offs. Examples include:

- fairness vs raw predictive efficiency

- privacy vs data utility

- explainability vs model complexity

- safety restrictions vs user flexibility

- speed of deployment vs level of review

These trade-offs should be made explicit and documented rather than hidden behind vague claims of neutrality.

28. Common Failure Modes

- treating responsible AI as a late-stage audit only

- deploying high-risk systems without human override

- monitoring accuracy but not fairness or safety

- lack of documentation for training data and intended use

- weak ownership and no incident response plan

- optimizing for product speed while ignoring downstream harm

29. Strengths of Mature Responsible AI Practice

- reduces harm and operational risk

- improves trust and adoption

- supports compliance and governance readiness

- improves system resilience and observability

- makes deployment decisions more auditable and defensible

30. Limitations and Realities

- responsible AI cannot eliminate all uncertainty or disagreement

- many risks depend on context, not only on models

- some harms are hard to quantify cleanly

- organizational incentives can undermine responsible design if not aligned

- technical controls alone are insufficient without governance and culture

31. Best Practices

- Start with use-case legitimacy and risk classification before model development.

- Build fairness, privacy, safety, and transparency checks into the lifecycle rather than adding them at the end.

- Document data provenance, intended use, evaluation gaps, and deployment limits clearly.

- Use human oversight for high-stakes or high-uncertainty decisions.

- Red-team and stress-test systems under realistic and adversarial scenarios.

- Define ownership, monitoring, escalation, and rollback processes before launch.

- Continuously revisit responsible AI controls as the system, users, and environment evolve.

32. Conclusion

Responsible AI practices are essential because AI systems are not just predictive artifacts; they are operational decision systems embedded in real institutions and real lives. A responsible AI program must therefore combine technical quality with fairness, privacy, safety, transparency, accountability, and lifecycle governance.

The most important insight is that responsibility is systemic. It cannot be solved by a single fairness metric, a single explainability tool, or a single approval step. Responsible AI emerges from disciplined design choices across problem framing, data governance, model evaluation, deployment controls, and organizational accountability. When done well, these practices make AI systems not only more compliant and defensible, but genuinely more trustworthy and more useful.