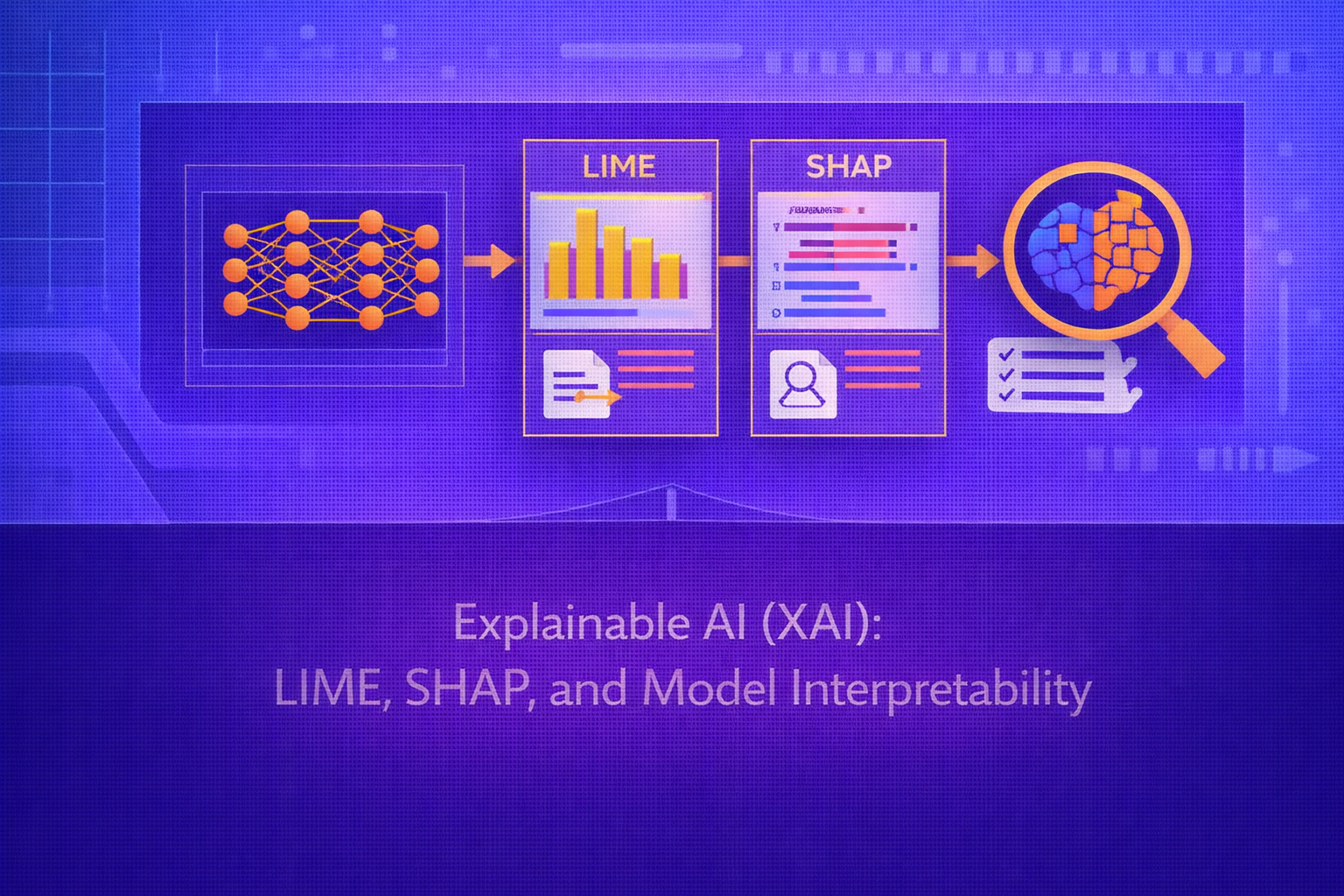

Explainable AI (XAI) is the field concerned with making machine learning models more understandable to humans. As predictive models become more complex, especially in high-stakes domains, there is a growing need to explain why a model made a prediction, which features influenced the decision, how robust that decision is, and whether the model behaves fairly and reliably. This whitepaper presents a technical treatment of model interpretability with a special focus on LIME, SHAP, and the broader foundations of explainability.

Abstract

Model performance alone is often insufficient in real-world deployment. Decision-makers may need transparency for trust, auditing, debugging, compliance, fairness analysis, safety validation, and scientific understanding. Explainable AI addresses this through methods that either build inherently interpretable models or generate post hoc explanations for complex black-box systems. This paper explains the difference between interpretability and explainability, distinguishes global and local explanations, and examines two of the most influential post hoc explanation methods: LIME and SHAP. It also discusses feature importance, partial dependence, counterfactual reasoning, faithfulness, stability, limitations of explanation methods, and practical trade-offs. All formulas are embedded inline in HTML-friendly format for direct use in WordPress or similar editors.

1. Introduction

Suppose a machine learning model defines a prediction function $f(x)$, where $x \in \mathbb{R}^p$ is a feature vector.

In many applications, users want more than the output f(x). They also want to know:

- which features mattered most

- whether the decision was robust

- what would need to change for the prediction to change

- whether the model behaves consistently and fairly

XAI methods attempt to answer such questions, either by designing transparent models or by approximating and probing complex models after training.

2. What Is Model Interpretability?

Model interpretability refers to the extent to which a human can understand the internal logic or external behavior of a model. There is no single universally accepted definition, but interpretability usually involves at least one of the following:

- understanding how predictions depend on inputs

- understanding which patterns the model has learned

- being able to justify a prediction in human-meaningful terms

- being able to debug or contest model behavior

3. Intrinsic Interpretability vs Post Hoc Explainability

Interpretability methods are often divided into two categories.

3.1 Intrinsically Interpretable Models

These models are interpretable by design. Examples include:

- linear regression

- logistic regression

- small decision trees

- sparse rule lists

In such models, the decision mechanism is itself readable or structurally understandable.

3.2 Post Hoc Explainability

These methods explain a trained model after the fact, without changing its structure. This is especially important for black-box models such as gradient-boosted ensembles, deep neural networks, and large foundation models.

LIME and SHAP belong to this category.

4. Global vs Local Explanations

Another major distinction is between:

- Global interpretability: explaining overall model behavior across the dataset

- Local interpretability: explaining a specific prediction for one input $x$

For example, a global explanation may say that feature $x_3$ is generally important across the entire dataset, while a local explanation may say that for one particular prediction, feature $x_7$ drove the output upward.

5. Why Explainability Matters

Explainability is important for several reasons:

- trust: users are more likely to rely on a model they can understand

- debugging: explanations can reveal spurious correlations or leakage

- compliance: some regulated settings require justification

- fairness: explanations can reveal disparate behavior across groups

- safety: high-stakes systems need transparent failure analysis

- scientific insight: models may uncover meaningful relationships in the data

6. The Challenge of Black-Box Models

Modern machine learning models often define highly nonlinear functions:

$$f : \mathbb{R}^p \to \mathbb{R}$$

or

$$f : \mathbb{R}^p \to [0,1]^K.$$

Even when these models are accurate, their internal decision boundaries may be too complex for direct human inspection. Post hoc explanation methods therefore attempt to produce simpler, human-readable approximations of model behavior around specific inputs or across distributions.

7. Desiderata for Good Explanations

Good explanation methods are often evaluated along dimensions such as:

- faithfulness: the explanation should reflect actual model behavior

- stability: similar inputs should yield similar explanations when appropriate

- consistency: explanation values should behave logically as model reliance changes

- human interpretability: the explanation should be understandable to its audience

- computational tractability: it should be feasible to compute

8. Feature Attribution

A central idea in XAI is feature attribution: assign each feature a contribution score to the prediction. Given a model output $f(x)$, one seeks attribution values $\phi_1, \phi_2, \ldots, \phi_p$ such that each $\phi_j$ reflects the contribution of feature $x_j$.

Different explanation methods define these contributions differently.

9. Interpretable Surrogate Models

One major post hoc strategy is to approximate the black-box model locally with a simpler interpretable model $g(x)$, where $g$ may be linear or sparse. The hope is that $g$ is easy to understand and close to $f$ near the point of interest.

LIME is based on exactly this idea.

10. LIME: Local Interpretable Model-Agnostic Explanations

LIME explains a single prediction by fitting a simple surrogate model around the instance being explained. Let $x$ be the instance of interest and $f$ the black-box model. LIME generates perturbed samples around $x$, evaluates $f$ on those samples, weights them by proximity to $x$, and fits an interpretable model $g$.

10.1 LIME Objective

A standard conceptual LIME objective is:

$$\xi(x) = \arg\min_{g \in G} \; L(f, g, \pi_x) + \Omega(g),$$

where:

- $G$ is the family of interpretable models

- $L(f, g, \pi_x)$ measures local fidelity of $g$ to $f$

- $\pi_x$ is a locality weighting around $x$

- $\Omega(g)$ penalizes complexity of the explanation model

10.2 Local Weighting

Nearby perturbed points are given more importance than distant ones. A typical kernel weighting is:

$$\pi_x(z) = \exp\left(-\frac{D(x,z)^2}{\sigma^2}\right),$$

where $D(x,z)$ is a distance measure and $\sigma$ controls locality.
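The sampling, weighting, and fitting steps can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the reference LIME implementation: the model `black_box`, the Gaussian perturbation scheme, the parameter names `noise` and `sigma`, and the helper `solve_linear_system` are all hypothetical choices made for this example. The complexity penalty Ω(g) is omitted, so the surrogate is an ordinary weighted linear fit.

```python
import math
import random


def black_box(x):
    # Hypothetical nonlinear model standing in for f.
    return x[0] ** 2 + 3.0 * x[1]


def solve_linear_system(a, b):
    """Solve a @ w = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * w[j] for j in range(i + 1, n))
        w[i] = (m[i][n] - tail) / m[i][i]
    return w


def lime_explain(f, x, n_samples=2000, noise=0.5, sigma=0.5, seed=0):
    """Fit a locally weighted linear surrogate around x.

    Returns [w0, w1, ..., wp] for g(z) = w0 + sum_j wj * (z_j - x_j).
    """
    rng = random.Random(seed)
    p = len(x)
    # Accumulate the weighted normal equations (X^T W X) w = X^T W y.
    a = [[0.0] * (p + 1) for _ in range(p + 1)]
    b = [0.0] * (p + 1)
    for _ in range(n_samples):
        z = [xj + rng.gauss(0.0, noise) for xj in x]      # perturbed sample
        dist_sq = sum((zj - xj) ** 2 for zj, xj in zip(z, x))
        weight = math.exp(-dist_sq / sigma ** 2)           # proximity kernel
        row = [1.0] + [zj - xj for zj, xj in zip(z, x)]    # centered design row
        y = f(z)
        for i in range(p + 1):
            b[i] += weight * row[i] * y
            for j in range(p + 1):
                a[i][j] += weight * row[i] * row[j]
    return solve_linear_system(a, b)


weights = lime_explain(black_box, [1.0, 2.0])
print(weights)
```

For this toy model the two slopes should land near 2 and 3, the partial derivatives of `black_box` at the instance (1, 2). Changing `seed`, `noise`, or `sigma` shifts the fitted coefficients, which is the sensitivity that makes kernel and sampling choices matter in practice.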

10.3 Interpretable Representation

LIME often operates on an interpretable binary representation $z' \in \{0,1\}^m$, where the components indicate presence or absence of interpretable features. In text, this may correspond to words being present or removed. In images, it may correspond to superpixels being present or masked.

10.4 LIME as Sparse Linear Approximation

In many implementations, the explanation model is a sparse linear model:

$$g(z') = w_0 + \sum_{j=1}^{m} w_j z'_j.$$

The coefficients $w_j$ are then interpreted as local feature contributions.

10.5 Strengths of LIME

- model-agnostic

- local and intuitive

- works across text, tabular, and image domains

- can produce sparse human-readable explanations

10.6 Limitations of LIME

- sensitive to sampling procedure

- sensitive to locality kernel choice

- local linear approximation may be unstable

- different runs may yield different explanations

- fidelity may be weak if the model is highly nonlinear even locally

11. SHAP: SHapley Additive exPlanations

SHAP is a framework for additive feature attribution grounded in cooperative game theory. It uses Shapley values, originally developed to allocate total payoff fairly among players in a coalition game.

In SHAP, the “players” are features, and the “payoff” is the model output relative to some baseline expectation.

11.1 Additive Explanation Form

SHAP explanations often take the form:

$$g(z') = \phi_0 + \sum_{j=1}^{M} \phi_j z'_j,$$

where:

- $\phi_0$ is the baseline value

- $\phi_j$ is the contribution of feature $j$

- $z'$ indicates feature presence in coalition form

11.2 Shapley Value Definition

The Shapley value for feature $j$ is:

$$\phi_j = \sum_{S \subseteq F \setminus \{j\}} \frac{|S|!\,(M - |S| - 1)!}{M!} \left[ v(S \cup \{j\}) - v(S) \right],$$

where:

- $F$ is the full set of features

- $M = |F|$

- $S$ is a subset of features not containing $j$

- $v(S)$ is the model value when only features in coalition $S$ are known

This formula averages the marginal contribution of a feature across all possible feature orderings.
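For a handful of features the formula can be evaluated directly by enumerating every coalition. The sketch below is illustrative only: the model, the instance `x`, the all-zero `baseline`, and the choice to define v(S) by setting absent features to that baseline are assumptions made for this example, not something the Shapley definition prescribes.

```python
from itertools import combinations
from math import factorial


def shapley_values(value_fn, n_features):
    """Exact Shapley values by enumerating every coalition S not containing j."""
    phi = [0.0] * n_features
    for j in range(n_features):
        others = [k for k in range(n_features) if k != j]
        for size in range(len(others) + 1):
            # Weight |S|! (M - |S| - 1)! / M! from the Shapley formula.
            weight = (factorial(size) * factorial(n_features - size - 1)
                      / factorial(n_features))
            for subset in combinations(others, size):
                coalition = frozenset(subset)
                gain = value_fn(coalition | {j}) - value_fn(coalition)
                phi[j] += weight * gain
    return phi


def model(x):
    # Hypothetical model with an interaction between features 1 and 2.
    return 2.0 * x[0] + x[1] * x[2]


x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]


def value_fn(coalition):
    # Assumed value function: features outside the coalition take baseline values.
    masked = [x[k] if k in coalition else baseline[k] for k in range(len(x))]
    return model(masked)


phi = shapley_values(value_fn, len(x))
print(phi)                                   # one attribution per feature
print(sum(phi), model(x) - model(baseline))  # the two totals should agree
```

In this example the interaction term is split equally between features 1 and 2, so the attributions come out at approximately 2, 3, and 3. The enumeration visits on the order of M·2^(M-1) coalitions, which is why exact computation is only practical for small M.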

11.3 SHAP Efficiency Property

A major property of Shapley-based explanations is additive completeness:

$$f(x) = \phi_0 + \sum_{j=1}^{M} \phi_j$$

in the explanation space.

This means the feature attributions sum exactly to the prediction difference relative to baseline.

11.4 Why SHAP Is Popular

SHAP became highly influential because it combines:

- a clear game-theoretic foundation

- local additive explanations

- consistency properties

- specialized efficient algorithms for certain model families

12. SHAP Value Function Choices

A subtle issue in SHAP is how to define $v(S)$, the value of a coalition of features.

One common approach is to use conditional or marginal expectations of the model output when only some features are observed. For example:

$$v(S) = \mathbb{E}[f(X) \mid X_S = x_S]$$

or a related approximation.

This choice matters because feature dependence can strongly affect attribution meaning.
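To make the effect of this choice concrete, here is a small sketch of the marginal variant, which fixes the coalition features to their values in x and averages the model over background rows for the rest. The function name `marginal_value`, the toy model, and the background data are hypothetical and chosen so that the two features are perfectly correlated.

```python
def marginal_value(f, x, coalition, background):
    """Estimate v(S): keep features in S at their values in x and average f
    over background rows for the remaining features (marginal expectation)."""
    total = 0.0
    for row in background:
        mixed = [x[k] if k in coalition else row[k] for k in range(len(x))]
        total += f(mixed)
    return total / len(background)


def model(x):
    # Hypothetical model used only for illustration.
    return x[0] + 2.0 * x[1]


# Background data in which feature 1 always equals feature 0.
background = [[0.0, 0.0], [1.0, 1.0], [2.0, 2.0], [3.0, 3.0]]
x = [3.0, 3.0]

v_empty = marginal_value(model, x, set(), background)    # average prediction
v_first = marginal_value(model, x, {0}, background)      # only feature 0 known
v_full = marginal_value(model, x, {0, 1}, background)    # equals model(x)
print(v_empty, v_first, v_full)
```

Here the marginal estimate for the coalition containing only feature 0 is 6.0, because it pairs the value 3 for feature 0 with background values of feature 1 that never co-occur with it in the data. A conditional expectation on the same data would give 9.0, since feature 1 is always 3 whenever feature 0 is 3. The gap between the two is the kind of dependence effect that changes what an attribution means.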

13. Kernel SHAP

Kernel SHAP is a model-agnostic approximation method for SHAP values. It uses weighted linear regression over coalition samples, with a specially derived weighting kernel inspired by the Shapley formula. It is flexible but can be computationally expensive.
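The regression view can be sketched for a tiny problem where all coalitions are enumerated rather than sampled. This is an illustration under assumptions, not the `shap` library's implementation: the helper names, the toy model, the zero baseline, and the use of a large finite weight (1e6) in place of the infinite weight on the empty and full coalitions are all choices made for this example. The kernel weight used is (M - 1) / (C(M, |z'|) · |z'| · (M - |z'|)).

```python
from itertools import product
from math import comb


def shapley_kernel_weight(m, size):
    """Kernel SHAP weight for a coalition with `size` of `m` features present."""
    if size == 0 or size == m:
        return 1e6  # large finite stand-in for the infinite weight
    return (m - 1) / (comb(m, size) * size * (m - size))


def solve_linear_system(a, b):
    """Solve a @ w = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    mat = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(mat[r][col]))
        mat[col], mat[pivot] = mat[pivot], mat[col]
        for r in range(col + 1, n):
            factor = mat[r][col] / mat[col][col]
            for c in range(col, n + 1):
                mat[r][c] -= factor * mat[col][c]
    w = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(mat[i][j] * w[j] for j in range(i + 1, n))
        w[i] = (mat[i][n] - tail) / mat[i][i]
    return w


def kernel_shap(value_fn, m):
    """Weighted least squares over all 2^m coalitions (small m only)."""
    a = [[0.0] * (m + 1) for _ in range(m + 1)]
    b = [0.0] * (m + 1)
    for z in product([0, 1], repeat=m):
        weight = shapley_kernel_weight(m, sum(z))
        row = [1.0] + [float(bit) for bit in z]
        y = value_fn(z)
        for i in range(m + 1):
            b[i] += weight * row[i] * y
            for j in range(m + 1):
                a[i][j] += weight * row[i] * row[j]
    return solve_linear_system(a, b)  # [phi_0, phi_1, ..., phi_m]


x = [1.0, 2.0, 3.0]


def value_fn(z):
    # Hypothetical model 2*x0 + x1*x2; absent features are set to a zero baseline.
    masked = [xi if bit else 0.0 for xi, bit in zip(x, z)]
    return 2.0 * masked[0] + masked[1] * masked[2]


phi = kernel_shap(value_fn, len(x))
print(phi)
```

With full enumeration the regression coefficients should agree closely with the exact Shapley values for this toy game (a baseline term near 0 and attributions near 2, 3, and 3). In realistic settings the coalitions are sampled, so the result is an estimate whose quality depends on the number of samples and on how the value function is approximated.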

14. Tree SHAP

Tree SHAP is a specialized algorithm that computes SHAP values exactly and in polynomial time for tree-based models such as random forests and gradient boosting machines. It dramatically improves tractability compared with brute-force Shapley computation and is one reason SHAP is especially popular in tabular ML workflows.

15. Deep SHAP and Model-Specific Variants

Extensions of SHAP also exist for deep networks and other model families, often combining approximate backpropagation rules with Shapley-inspired decompositions. These variants trade exactness for tractability in high-dimensional settings.

16. LIME vs SHAP

LIME and SHAP are both local post hoc explanation frameworks, but they differ fundamentally.

16.1 Conceptual Difference

LIME fits a local surrogate model around the instance of interest. SHAP attributes prediction contributions according to Shapley values over feature coalitions.

16.2 Stability

SHAP is often regarded as more principled and more consistent because of its game-theoretic foundation, though in practice it still depends on approximation choices and background distributions. LIME can be more sensitive to local sampling and perturbation design.

16.3 Computational Cost

Exact Shapley computation is combinatorial and expensive. Specialized algorithms such as Tree SHAP help, but model-agnostic SHAP can still be costly. LIME is often simpler and faster to apply, though explanation quality may be less stable.

17. Global Feature Importance

Beyond local explanations, practitioners often want global importance measures. A simple global SHAP summary may average absolute local contributions:

$$\text{Importance}(j) = \frac{1}{n} \sum_{i=1}^{n} |\phi_{ij}|,$$

where $\phi_{ij}$ is the attribution of feature $j$ for sample $i$. This indicates how strongly feature $j$ influences predictions on average across the dataset.
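As a small worked example of this summary, the snippet below averages absolute attributions column by column. The attribution matrix `phi` is made-up data for illustration (rows are samples, columns are features), and the function name is hypothetical.

```python
def global_importance(phi):
    """Mean absolute attribution per feature; phi[i][j] is the attribution
    of feature j for sample i."""
    n = len(phi)
    p = len(phi[0])
    return [sum(abs(row[j]) for row in phi) / n for j in range(p)]


# Made-up local attributions for three samples and three features.
phi = [
    [0.5, -1.0, 0.0],
    [-0.5, 2.0, 0.1],
    [1.5, -3.0, -0.1],
]

importance = global_importance(phi)
print(importance)
```

Feature 1 ranks highest here with a mean absolute attribution of 2.0, even though its signed contributions partly cancel. Taking absolute values is what keeps positive and negative local effects from averaging out to zero.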

18. Partial Dependence Plots

A partial dependence plot (PDP) shows the average effect of one feature on the model output while averaging over others. For feature subset $S$, the partial dependence function is:

$$PD_S(x_S) = \mathbb{E}_{X_C}\left[ f(x_S, X_C) \right],$$

where $C$ is the complement of $S$.

PDPs are useful for global interpretation, though they can be misleading when features are strongly correlated.
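In practice the expectation is usually estimated by forcing the feature of interest to each grid value in every row of a dataset and averaging the predictions. The following sketch shows that estimate for a single feature; the model, the dataset, and the grid are hypothetical.

```python
def partial_dependence(f, data, feature, grid):
    """Average model output with `feature` forced to each value in `grid`."""
    curve = []
    for value in grid:
        total = 0.0
        for row in data:
            modified = list(row)
            modified[feature] = value   # overwrite the feature of interest
            total += f(modified)
        curve.append(total / len(data))
    return curve


def model(x):
    # Hypothetical model used only for illustration.
    return x[0] ** 2 + x[1]


data = [[0.0, 1.0], [1.0, 2.0], [2.0, 3.0]]
grid = [0.0, 1.0, 2.0]

pd_curve = partial_dependence(model, data, feature=0, grid=grid)
print(pd_curve)
```

Because every row is combined with every grid value, the procedure can evaluate the model on feature combinations that never occur in the data, which is the mechanism behind the caveat about correlated features.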

19. ICE Plots

Individual Conditional Expectation (ICE) plots show how the prediction changes for individual samples as one feature varies. These provide more granular insight than PDPs and can reveal heterogeneous feature effects.
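A toy example shows how ICE curves can expose structure that an average hides. In this sketch the model and the two data rows are hypothetical and chosen so that the effect of feature 0 flips sign depending on feature 1.

```python
def ice_curves(f, data, feature, grid):
    """One curve per row: model output as `feature` sweeps over `grid`."""
    curves = []
    for row in data:
        curve = []
        for value in grid:
            modified = list(row)
            modified[feature] = value
            curve.append(f(modified))
        curves.append(curve)
    return curves


def model(x):
    # Hypothetical model: the effect of feature 0 depends on the sign of feature 1.
    return x[0] * x[1]


data = [[0.0, 1.0], [0.0, -1.0]]
grid = [-1.0, 0.0, 1.0]

curves = ice_curves(model, data, feature=0, grid=grid)
# Averaging the ICE curves pointwise gives the partial dependence estimate.
pdp = [sum(curve[i] for curve in curves) / len(curves) for i in range(len(grid))]
print(curves)
print(pdp)
```

The two ICE curves have opposite slopes, while their average is flat at zero. A PDP alone would suggest that feature 0 has no effect in this example.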

20. Counterfactual Explanations

Another important XAI approach is counterfactual explanation. A counterfactual seeks an alternative input $x'$ close to $x$ such that the prediction changes to a desired outcome:

$$f(x') = y'.$$

A typical optimization form is:

$$\min_{x'} \; d(x, x') + \lambda \cdot L(f(x'), y'),$$

where $d$ measures proximity and $L$ encourages the desired output.

Counterfactuals are especially useful when users want actionable recourse rather than descriptive attribution.
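The optimization form above can be sketched with plain gradient descent on a small differentiable model. Everything specific here is an assumption for illustration: the logistic model with fixed `WEIGHTS` and `BIAS`, squared Euclidean distance for d, squared error for L, and the values of `lam`, `step`, and `n_steps`. Practical counterfactual methods typically add constraints such as immutable features or plausibility checks, which this sketch ignores.

```python
import math

# Hypothetical logistic model with fixed parameters.
WEIGHTS = [1.5, -2.0]
BIAS = -0.5


def predict(x):
    logit = sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS
    return 1.0 / (1.0 + math.exp(-logit))


def find_counterfactual(x, target=1.0, lam=10.0, step=0.02, n_steps=5000):
    """Gradient descent on d(x, x') + lam * L(f(x'), target), with squared
    Euclidean distance d and squared-error loss L."""
    x_cf = list(x)
    for _ in range(n_steps):
        p = predict(x_cf)
        # Derivative of lam * (p - target)^2 with respect to the logit,
        # using dp/dlogit = p * (1 - p).
        loss_scale = lam * 2.0 * (p - target) * p * (1.0 - p)
        for j in range(len(x_cf)):
            grad = 2.0 * (x_cf[j] - x[j]) + loss_scale * WEIGHTS[j]
            x_cf[j] -= step * grad
    return x_cf


x = [0.0, 1.0]
x_cf = find_counterfactual(x)
distance = math.sqrt(sum((a - b) ** 2 for a, b in zip(x, x_cf)))

print(predict(x))      # original prediction, below 0.5
print(x_cf, distance)  # counterfactual input and how far it moved
print(predict(x_cf))   # new prediction, above 0.5 for these settings
```

The counterfactual stops short of the target value 1.0 because the distance term pulls it back toward x. Raising `lam` trades proximity for a prediction closer to the target.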

21. Saliency and Gradient-Based Explanations

For differentiable models, explanations may be based on gradients:

$$\frac{\partial f(x)}{\partial x_j}.$$

These indicate how sensitive the prediction is to infinitesimal changes in the input features. Gradient-based methods are especially common in deep learning for images, text, and multimodal systems, though raw gradients can be noisy and hard to interpret.
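For a model without automatic differentiation, the same sensitivities can be approximated numerically. The sketch below uses central finite differences on a hypothetical two-feature model. Deep learning frameworks would normally compute these gradients by backpropagation instead.

```python
def numerical_gradient(f, x, eps=1e-5):
    """Central finite-difference estimate of df/dx_j for each feature j."""
    grad = []
    for j in range(len(x)):
        up = list(x)
        down = list(x)
        up[j] += eps
        down[j] -= eps
        grad.append((f(up) - f(down)) / (2.0 * eps))
    return grad


def model(x):
    # Hypothetical differentiable model used only for illustration.
    return x[0] ** 2 + 3.0 * x[1]


saliency = numerical_gradient(model, [1.0, 2.0])
print(saliency)
```

The estimates should be close to the analytic partial derivatives 2 and 3 at the point (1, 2). A gradient describes only an infinitesimal neighborhood, so a large value here does not by itself mean the feature contributed a large share of the prediction.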

22. Faithfulness vs Plausibility

A key issue in XAI is that human-plausible explanations are not always faithful to model internals. An explanation can sound convincing while failing to reflect actual decision logic. Therefore, explanation methods must be evaluated not only for readability but also for fidelity to model behavior.

23. Stability and Sensitivity

Explanation methods can behave unstably. Small perturbations in input, sampling randomness, background data choice, or feature encoding can cause explanation values to change. This is especially problematic when explanations are used for auditing, trust, or legal accountability.

24. Correlated Features and Attribution Ambiguity

When features are strongly correlated, attributing prediction influence becomes difficult. If two variables carry overlapping information, many explanation methods struggle to decide how much credit to assign to each individually. This affects both LIME and SHAP, though SHAP’s coalition-based formulation makes the issue more explicit.

25. Explanation for Different Audiences

Not all stakeholders need the same type of explanation:

- data scientists may want debugging-oriented explanations

- business users may want summary importance and recourse

- regulators may require traceable and stable justification

- end users may need plain-language explanation of a specific decision

Therefore, explanation design is partly a human-centered communication problem, not just a mathematical one.

26. Evaluation of Explanations

Explanations can be evaluated through:

- faithfulness tests

- stability analysis

- human usefulness studies

- sanity checks under model randomization

- feature removal or insertion tests

No single metric fully captures explanation quality, which is part of why XAI remains an active research field.
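One of the tests listed above, feature removal, can be sketched as a deletion curve: features are replaced by baseline values in order of decreasing absolute attribution, and the model output is recorded after each step. The model, the baseline, and both attribution vectors below are hypothetical, and replacing a feature with a fixed baseline value is only one of several possible ways to "remove" it.

```python
def deletion_curve(f, x, attributions, baseline):
    """Model outputs as features are replaced by baseline values,
    most important (by absolute attribution) first."""
    order = sorted(range(len(x)), key=lambda j: abs(attributions[j]), reverse=True)
    current = list(x)
    outputs = [f(current)]
    for j in order:
        current[j] = baseline[j]
        outputs.append(f(current))
    return outputs


def model(x):
    # Hypothetical model dominated by feature 0.
    return 5.0 * x[0] + 1.0 * x[1] + 0.1 * x[2]


x = [1.0, 1.0, 1.0]
baseline = [0.0, 0.0, 0.0]

faithful = [5.0, 1.0, 0.1]     # ranks features as the model actually uses them
misleading = [0.1, 1.0, 5.0]   # ranks them in the opposite order

faithful_curve = deletion_curve(model, x, faithful, baseline)
misleading_curve = deletion_curve(model, x, misleading, baseline)
print(faithful_curve)
print(misleading_curve)
```

Under the faithful ranking the output collapses after the first removal, while under the misleading ranking it barely moves until the last step. Comparing how quickly such curves fall is one way to score attributions, subject to the caveat that baseline-replaced inputs may lie outside the data distribution.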

27. Practical Applications of XAI

Explainability is important in:

- credit risk and loan approval

- healthcare and clinical decision support

- fraud detection

- insurance and underwriting

- legal and public-sector AI

- model debugging and ML operations

- scientific discovery workflows

28. Strengths of XAI Methods

- help diagnose spurious model behavior

- support local decision transparency

- improve trust and stakeholder communication

- enable fairness and governance auditing

- provide a bridge between black-box models and human reasoning

29. Limitations of XAI

- explanations may be approximate rather than exact

- different methods can disagree on the same prediction

- human-friendly explanations may not be fully faithful

- correlated features complicate attribution

- post hoc explanations do not make a black-box model intrinsically transparent

30. Best Practices

- Use global and local explanations together rather than relying on only one view.

- Choose explanation methods based on model type, audience, and decision stakes.

- Validate explanation stability under perturbations and reruns.

- Use model-specific methods such as Tree SHAP when appropriate for efficiency and fidelity.

- Do not treat explanations as proof of fairness or causal truth without deeper analysis.

- Pair XAI with governance, calibration, robustness testing, and domain review.

31. Conclusion

Explainable AI is essential because predictive accuracy alone is not enough for many real-world systems. As machine learning models become more complex, stakeholders increasingly need tools that reveal what models are doing, why they are doing it, and how reliable those behaviors are. LIME offers a flexible local surrogate-based explanation method, while SHAP provides a principled additive attribution framework grounded in Shapley values.

Understanding XAI means understanding both the mathematics of attribution and the limitations of explanation itself. Explanations are not neutral artifacts; they depend on assumptions, approximation choices, perturbation strategies, and the needs of the intended audience. Used carefully, XAI helps make machine learning systems more transparent, testable, auditable, and usable. Used carelessly, it can create false confidence. The field therefore sits at the intersection of machine learning, statistics, human factors, and responsible AI.