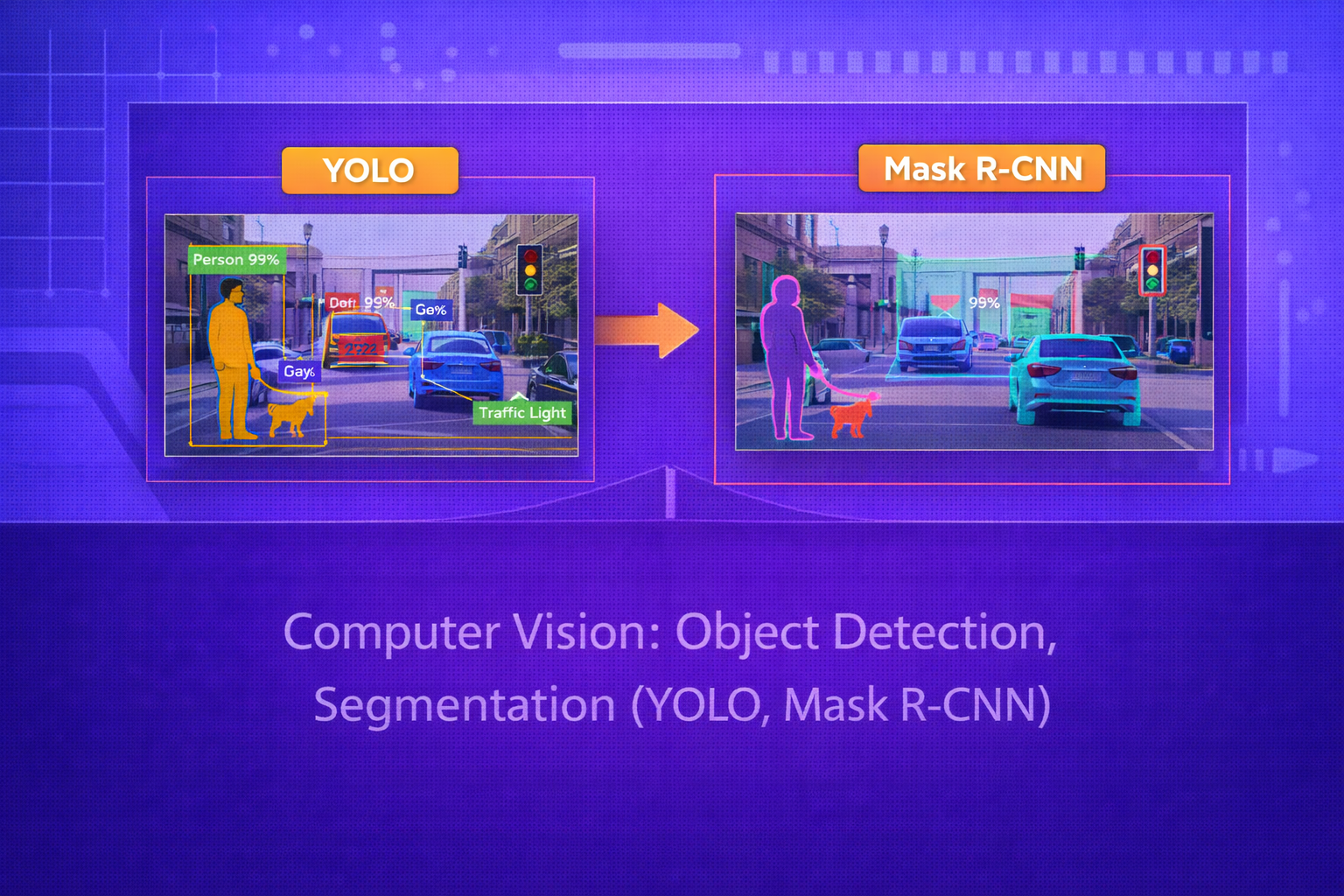

Object detection and segmentation are core computer vision tasks that move beyond simple image classification by asking not only what is in an image, but where it is and, in segmentation, which pixels belong to each object or class. This whitepaper provides a technical treatment of modern object detection and segmentation, with special emphasis on YOLO and Mask R-CNN as two influential paradigms.

Abstract

Image classification assigns a single label or set of labels to an entire image, but many real-world vision systems require more detailed understanding. Object detection identifies object categories and localizes them using bounding boxes. Segmentation provides finer-grained spatial understanding by assigning labels to pixels, either at the class level or per instance. This paper explains the foundations of detection and segmentation, including bounding box representations, Intersection over Union (IoU), anchor-based and anchor-free detection concepts, one-stage and two-stage detectors, region proposals, non-maximum suppression, and the architecture of YOLO and Mask R-CNN. It also covers segmentation losses, mask heads, training objectives, evaluation metrics such as mAP and mask AP, and practical trade-offs between speed and accuracy. All formulas are embedded inline in HTML-friendly format for direct use in WordPress or similar editors.

1. Introduction

Suppose an image is represented as

I ∈ ℝH×W×C,

where H is height, W is width, and

C is the number of channels.

In object detection, the goal is to output a set of predictions:

{(bi, ci, si)}i=1N,

where:

biis a bounding boxciis a class labelsiis a confidence score

In segmentation, the output is more detailed and includes either a class label per pixel or a binary mask per object instance.

2. Why Detection and Segmentation Matter

Detection and segmentation are essential because many vision applications require spatial understanding. A self-driving system must know where pedestrians, vehicles, and road signs are. A medical imaging system must isolate lesions or organs. A retail analytics system must count and localize products on shelves.

These tasks are therefore central to applied computer vision in robotics, healthcare, surveillance, manufacturing, remote sensing, retail, and autonomous systems.

3. Object Detection Basics

Object detection combines two problems:

- classification: determine what object category is present

- localization: determine where the object is in the image

A bounding box may be parameterized in several ways. A common form is:

b = (x, y, w, h),

where (x,y) may denote center coordinates and

w, h denote width and height.

Another common form uses corner coordinates:

b = (xmin, ymin, xmax, ymax).

4. Intersection over Union (IoU)

A key measure in detection and segmentation is Intersection over Union. For predicted region

Bpred and ground-truth region

Btrue, IoU is:

IoU = Area(Bpred ∩ Btrue) / Area(Bpred ∪ Btrue).

IoU measures how well the predicted region overlaps the true region. It is used for:

- assigning training targets

- filtering duplicate detections

- evaluating model accuracy

5. Detection as Structured Prediction

Unlike classification, object detection produces a variable-size set of outputs. There may be zero, one, or many objects, potentially of different classes and scales. The model must therefore solve:

- how many objects are present

- where each object is

- what class each object belongs to

- which predictions correspond to the same object

6. One-Stage vs Two-Stage Detectors

Modern detectors are often grouped into two broad categories:

6.1 One-Stage Detectors

One-stage detectors predict bounding boxes and class scores directly from dense image features in a single pass. Examples include YOLO and SSD. They are generally faster and suitable for real-time applications.

6.2 Two-Stage Detectors

Two-stage detectors first generate region proposals and then classify/refine them in a second stage. Examples include Faster R-CNN and Mask R-CNN. These methods often achieve higher accuracy, especially for small objects and instance segmentation, at the cost of more complexity and computation.

7. Anchor Boxes

Many detectors use anchor boxes, which are predefined bounding box templates with various scales and aspect ratios. At each spatial location, the detector predicts:

- how likely each anchor contains an object

- how the anchor should be adjusted to better match the object

- the class probabilities

A box regression target often predicts offsets from an anchor

a = (xa, ya, wa, ha)

to a ground-truth box

g = (x, y, w, h).

A common parameterization is:

tx = (x - xa) / wa,

ty = (y - ya) / ha,

tw = log(w / wa),

and

th = log(h / ha).

8. Classification and Localization Losses

Detection models usually optimize a combined loss:

L = Lcls + λ Lloc,

where:

Lclsis the classification lossLlocis the localization or regression lossλbalances the two terms

Classification often uses cross-entropy or focal loss. Localization often uses Smooth L1 loss or IoU-based losses.

8.1 Smooth L1 Loss

A common box regression loss is Smooth L1:

smoothL1(x) = 0.5x2 if |x| < 1, and |x| - 0.5 otherwise.

This is less sensitive to outliers than pure L2 loss and often stabilizes training.

9. Non-Maximum Suppression (NMS)

Dense detectors usually produce many overlapping candidate boxes. Non-Maximum Suppression removes redundant predictions. The idea is:

- select the highest-confidence box

- remove boxes with IoU above a threshold relative to it

- repeat for remaining boxes

This helps ensure that one object is not reported many times.

10. YOLO: You Only Look Once

YOLO is one of the most influential one-stage detection families. The key idea is to treat detection as a single unified regression and classification problem over the whole image. Rather than using separate proposal generation and classification stages, YOLO predicts bounding boxes and class confidences directly from image features in one pass.

10.1 Core Philosophy of YOLO

YOLO divides the image into spatial regions and predicts boxes and class scores directly at dense locations. This makes inference extremely fast and suitable for real-time deployment.

10.2 Grid-Based Prediction Idea

In early YOLO formulations, the image was divided into an

S × S grid. Each cell predicted:

- bounding box coordinates

- an objectness score

- class probabilities

Although modern YOLO variants are more sophisticated, the basic philosophy remains dense direct prediction over multi-scale features.

10.3 Objectness in YOLO

YOLO predicts an objectness confidence that reflects whether an object exists in the box and how well the box matches

it. Conceptually, objectness is related to:

P(object) × IoU(pred, truth).

10.4 YOLO Loss Structure

YOLO-style training generally combines:

- box regression loss

- objectness loss

- classification loss

A simplified conceptual objective is:

L = Lbox + Lobj + Lcls.

10.5 Multi-Scale Detection

Later YOLO versions use multi-scale feature pyramids so that objects at different sizes can be detected more effectively. This is especially important because small objects may disappear in coarse feature maps if only one scale is used.

10.6 Strengths of YOLO

- very fast inference

- end-to-end dense prediction

- well suited for real-time applications

- strong balance of speed and accuracy in modern variants

10.7 Limitations of YOLO

- can struggle more with very small objects than some two-stage methods

- dense prediction design may be harder to optimize for some edge cases

- instance segmentation is not native in standard detection-only YOLO variants unless extended

11. Region Proposal Based Detection

Two-stage detectors first identify promising candidate object regions and then classify/refine them. This pipeline became highly successful because it decomposes the task into:

- proposal generation

- region-wise classification and box refinement

Faster R-CNN introduced the Region Proposal Network (RPN) for efficient end-to-end proposal generation.

12. Region Proposal Network (RPN)

An RPN slides over backbone feature maps and predicts, for anchor boxes at each location:

- objectness scores

- bounding box offsets

The RPN loss is typically:

LRPN = Lcls + λ Lreg.

High-scoring proposals are then passed to later detection stages.

13. ROI Pooling and ROI Align

Once candidate regions are proposed, the detector must extract fixed-size features for each region from the backbone feature map.

13.1 ROI Pooling

ROI Pooling divides a proposed region into bins and pools features from each bin. However, quantization in ROI Pooling can misalign features, which is especially harmful for fine-grained mask prediction.

13.2 ROI Align

Mask R-CNN introduced ROI Align to avoid quantization misalignment by using interpolation at precise floating-point coordinates. This significantly improves mask quality and localization accuracy.

14. Segmentation Basics

Segmentation assigns labels at the pixel level. There are two major forms:

14.1 Semantic Segmentation

Each pixel receives a class label:

y(x,y) ∈ {1, ..., K}.

Different instances of the same class are not separated.

14.2 Instance Segmentation

Each object instance is segmented separately. For example, three people in an image will have three separate masks, even though they share the same semantic class.

15. Mask R-CNN

Mask R-CNN extends Faster R-CNN by adding a third output branch for object masks. It performs:

- region proposal generation

- box classification

- box refinement

- pixel-level mask prediction for each detected instance

This makes it a landmark architecture for instance segmentation.

15.1 Architecture of Mask R-CNN

Mask R-CNN consists of:

- a backbone network for feature extraction

- a region proposal network

- ROI Align for feature extraction from proposals

- a classification and box regression head

- a mask head that predicts a binary mask for each class and region

15.2 Mask Head

The mask branch predicts a low-resolution binary mask for each class candidate. For each ROI, the network produces a

mask tensor, often of size such as m × m for each class.

The appropriate class-specific mask is selected during training or inference depending on the detected class.

15.3 Mask Loss

The mask loss is usually an average binary cross-entropy over mask pixels:

Lmask = - Σu,v [yuv log \hat{y}uv + (1-yuv) log(1-\hat{y}uv)].

This is applied only to the ground-truth class mask for each positive ROI.

15.4 Total Mask R-CNN Loss

Mask R-CNN combines:

L = Lcls + Lbox + Lmask.

This unified multi-task objective enables simultaneous detection and instance segmentation.

15.5 Why Mask R-CNN Was Important

Mask R-CNN showed that adding a lightweight mask branch on top of a strong detection framework could achieve excellent instance segmentation performance. ROI Align was especially crucial because precise spatial alignment matters much more for masks than for coarse classification.

16. Semantic vs Instance Segmentation in Practice

Semantic segmentation is often used when the goal is scene labeling, such as road, sky, building, vegetation, or organ tissue classes. Instance segmentation is used when separate object identities matter, such as counting products, people, or vehicles.

17. Evaluation Metrics for Detection

17.1 Precision and Recall

Detection uses precision-recall behavior rather than only raw accuracy, because multiple predictions and confidence thresholds are involved.

Precision is:

Precision = TP / (TP + FP)

and recall is:

Recall = TP / (TP + FN).

17.2 Average Precision (AP)

Average Precision summarizes the precision-recall curve for one class. Mean Average Precision (mAP) averages AP over classes and often over IoU thresholds.

In modern benchmarks such as COCO, AP is evaluated across multiple IoU thresholds, for example from

0.50 to 0.95.

18. Evaluation Metrics for Segmentation

18.1 IoU for Masks

For segmentation masks, IoU is computed over predicted and true pixel sets:

IoU = |Mpred ∩ Mtrue| / |Mpred ∪ Mtrue|.

18.2 Dice Score

Another common segmentation metric is Dice:

Dice = 2|Mpred ∩ Mtrue| / (|Mpred| + |Mtrue|).

18.3 Mask AP

Instance segmentation benchmarks often report mask Average Precision, analogous to detection AP but based on mask overlap instead of bounding boxes alone.

19. Speed vs Accuracy Trade-Off

YOLO and Mask R-CNN represent different points on the speed-accuracy spectrum.

- YOLO: optimized for speed and real-time deployment

- Mask R-CNN: optimized for richer outputs and strong instance segmentation accuracy

The choice between them depends on application requirements such as latency, compute budget, and spatial precision.

20. Small Objects and Multi-Scale Features

Small objects are difficult because they occupy few pixels and may vanish in deep coarse feature maps. Modern detectors often use Feature Pyramid Networks (FPNs) or multi-scale heads so that both fine and coarse information are available at prediction time.

21. Class Imbalance in Detection

In dense detection, most locations or anchors correspond to background rather than objects. This creates severe class imbalance. Techniques such as hard negative mining and focal loss are used to reduce domination by easy background examples.

21.1 Focal Loss

A common focal loss form for binary classification is:

FL(pt) = - α (1 - pt)γ log(pt).

This downweights easy examples and focuses training on harder cases.

22. Data Augmentation and Detection Training

Detection and segmentation models often use augmentation such as:

- random scaling

- cropping

- flipping

- color jitter

- mosaic or mixup-like strategies in some YOLO variants

These help improve robustness to viewpoint, scale, and scene variation.

23. Practical Applications

Object detection and segmentation are used in:

- autonomous driving

- video surveillance

- medical imaging

- retail shelf analytics

- agriculture and crop monitoring

- industrial inspection

- robotic manipulation

- remote sensing

24. Strengths of YOLO

- fast end-to-end inference

- strong real-time performance

- practical deployment on edge or video systems

- good speed-accuracy balance in modern versions

25. Strengths of Mask R-CNN

- high-quality instance segmentation

- accurate object localization

- modular two-stage design

- excellent for applications needing pixel-level object separation

26. Limitations

- YOLO may trade some fine localization or small-object accuracy for speed

- Mask R-CNN is slower and more computationally expensive

- segmentation annotation is costly to obtain

- dense scenes and heavy occlusion remain difficult

- domain shift can degrade performance strongly

27. Best Practices

- Use YOLO when real-time speed is critical.

- Use Mask R-CNN when instance masks and high spatial precision matter.

- Monitor both mAP and latency when evaluating deployment choices.

- Use multi-scale features for small-object performance.

- Validate IoU thresholds and NMS behavior carefully in crowded scenes.

- Use task-appropriate augmentation and annotation quality control.

28. Conclusion

Object detection and segmentation are central tasks in modern computer vision because they enable models not only to recognize what is present in an image, but to localize and delineate it precisely. Detection provides structured outputs through bounding boxes, while segmentation adds pixel-level spatial understanding. These capabilities are essential in domains where position, count, and shape matter.

YOLO and Mask R-CNN represent two highly influential design philosophies. YOLO emphasizes unified, fast, one-stage prediction suitable for real-time systems. Mask R-CNN extends two-stage detection into rich instance segmentation with strong accuracy and spatial fidelity. Understanding both architectures provides a strong foundation for modern vision systems involving recognition, localization, and pixel-level scene understanding.