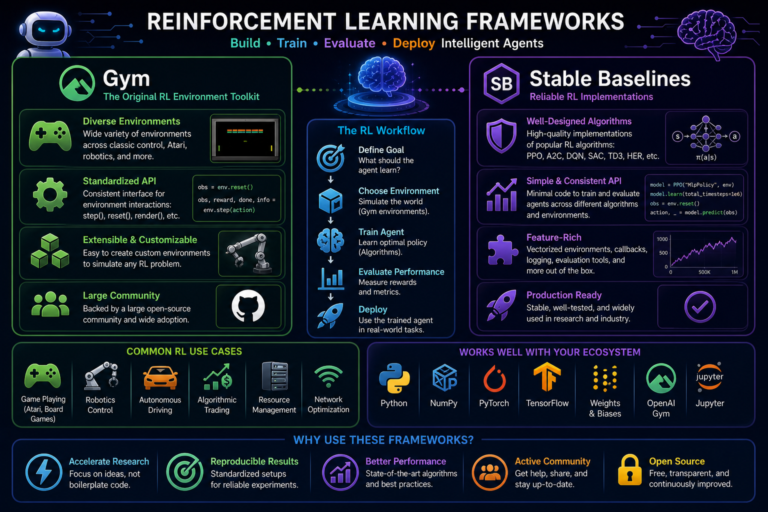

Reinforcement Learning (RL) is a machine learning paradigm in which an agent learns through interaction with an environment. Instead of being told the correct output for each input, the agent receives scalar reward signals and must discover which actions lead to the highest long-term return. This whitepaper presents a detailed technical introduction to RL, focusing on its foundational concepts: agents, environments, rewards, policies, value functions, trajectories, exploration, and the Markov Decision Process framework.

Abstract

Reinforcement Learning differs from supervised and unsupervised learning in that decision-making unfolds over time and actions affect future observations and rewards. The central goal is to learn a policy that maximizes expected cumulative reward. This paper explains the formal RL problem, including states, actions, transitions, rewards, episodes, return, discounting, policies, value functions, and the exploration-exploitation trade-off. It introduces the Markov Decision Process (MDP) as the standard mathematical model and discusses how agents evaluate and improve behavior. The paper also explains why reward design is critical and why long-term credit assignment makes RL both powerful and difficult. All formulas are embedded inline in HTML-friendly format for direct use in WordPress or similar editors.

1. Introduction

In supervised learning, a model learns from labeled examples of the form $(x, y)$. In reinforcement learning, there is no direct label telling the learner the best action in each situation. Instead, an agent interacts with an environment, observes the consequences of its actions, and receives rewards that indicate how desirable those consequences are.

The challenge is not merely to obtain immediate reward, but to maximize long-term reward over time. This makes RL a framework for sequential decision-making under uncertainty.

2. Core Components of Reinforcement Learning

The standard RL setup contains several core elements:

- Agent: the learner or decision-maker

- Environment: the external system the agent interacts with

- State: a representation of the current situation

- Action: a choice made by the agent

- Reward: a scalar feedback signal

- Policy: a strategy for selecting actions

At each timestep $t$, the agent observes state $s_t$, selects action $a_t$, receives reward $r_{t+1}$, and transitions to a new state $s_{t+1}$.

3. Agent and Environment Interaction Loop

The interaction loop can be written as:

$$s_t \rightarrow a_t \rightarrow r_{t+1},\ s_{t+1}.$$

This continues over many timesteps, potentially until an episode ends or indefinitely in continuing tasks. The agent improves by using past experience to choose better future actions.
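The loop above can be sketched in a few lines of Python. This is a minimal illustration only: `CorridorEnv`, `run_episode`, and `random_policy` are hypothetical names invented for this example, and the `reset`/`step` interface is an assumed convention rather than any particular library's API.

```python
import random


class CorridorEnv:
    """Hypothetical toy environment: positions 0..4, with the goal at position 4."""

    def __init__(self, length=5):
        self.length = length
        self.position = 0

    def reset(self):
        self.position = 0
        return self.position  # initial state s_0

    def step(self, action):
        # action is -1 (left) or +1 (right); the position is clipped to the corridor
        self.position = min(max(self.position + action, 0), self.length - 1)
        done = self.position == self.length - 1
        reward = 1.0 if done else 0.0
        return self.position, reward, done  # s_{t+1}, r_{t+1}, episode-ended flag


def run_episode(env, policy, max_steps=100, rng=None):
    """Roll out one episode; return a list of (s_t, a_t, r_{t+1}) tuples."""
    rng = rng or random.Random(0)
    state = env.reset()
    trajectory = []
    for _ in range(max_steps):
        action = policy(state, rng)                  # agent selects a_t
        next_state, reward, done = env.step(action)  # environment responds
        trajectory.append((state, action, reward))
        state = next_state
        if done:
            break
    return trajectory


def random_policy(state, rng):
    """Ignore the state and pick left or right uniformly at random."""
    return rng.choice([-1, 1])


trajectory = run_episode(CorridorEnv(), random_policy)
```

The random policy here does no learning; an actual RL agent would replace `random_policy` with something that is updated from the collected experience.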

4. States

A state $s \in \mathcal{S}$ is the information available to the agent that is relevant for decision-making. In simple environments, the state may be fully observable and explicitly given. In more complex environments, the observation may be partial, noisy, or incomplete.

Good state representations are crucial. If essential information is missing from the state, the agent may not be able to choose optimally.

5. Actions

In each state, the agent chooses an action $a \in \mathcal{A}(s)$, where $\mathcal{A}(s)$ is the set of available actions in state $s$.

Actions may be:

- discrete, such as move left or move right

- continuous, such as steering angle or motor torque

- structured, such as selecting multiple components jointly

6. Rewards

The reward is a scalar signal indicating how good or bad the immediate outcome of an action is. Formally, after taking action $a_t$ in state $s_t$, the agent receives reward $r_{t+1}$.

The reward is not the same as the final objective description in natural language. It is the formal numeric signal that the learning algorithm actually optimizes against.

6.1 Immediate vs Long-Term Reward

A key feature of RL is that a good immediate reward does not always lead to the best long-term outcome. The agent must learn to choose actions that maximize cumulative future reward, even when short-term sacrifices are required.

6.2 Reward Design

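Reward design is critical. If the reward function poorly reflects the true objective, the agent may optimize the wrong behavior. The design flaw itself is called reward misspecification; when the agent exploits it to collect high reward without achieving the intended goal, the result is often called reward hacking.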

7. Policies

A policy defines how the agent selects actions. It is usually written as $\pi(a \mid s)$, the probability of choosing action $a$ in state $s$.

7.1 Deterministic Policies

A deterministic policy maps each state to a single action:

$$a = \pi(s).$$

7.2 Stochastic Policies

A stochastic policy defines a distribution over actions:

$$\pi(a \mid s) = P(A_t = a \mid S_t = s).$$

Stochastic policies are useful when exploration is required or when the environment is uncertain or partially observable.
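As a small illustration, a tabular stochastic policy can be stored as a mapping from states to action probabilities and sampled from directly. The state names, action names, and probabilities below are hypothetical values chosen for the example.

```python
import random

# Hypothetical policy table: policy[state][action] = pi(a | s)
policy = {
    "s0": {"left": 0.2, "right": 0.8},
    "s1": {"left": 1.0, "right": 0.0},  # a deterministic policy is a special case
}


def sample_action(policy, state, rng):
    """Draw one action a ~ pi(. | state)."""
    actions = list(policy[state])
    probs = [policy[state][a] for a in actions]
    return rng.choices(actions, weights=probs, k=1)[0]


rng = random.Random(0)
counts = {"left": 0, "right": 0}
for _ in range(10_000):
    counts[sample_action(policy, "s0", rng)] += 1
# Over many draws, the empirical frequencies approach pi(a | s0).
```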

8. Trajectories and Episodes

A trajectory is a sequence of states, actions, and rewards:

$$\tau = (s_0, a_0, r_1, s_1, a_1, r_2, \ldots).$$

In episodic tasks, interaction is divided into episodes that terminate after some condition is met. Examples include finishing a game, reaching a goal, or failure in a control task. In continuing tasks, there may be no natural end.

9. Return

The fundamental quantity RL tries to maximize is the return, which is the cumulative reward from a given timestep onward. The undiscounted return from time $t$ is:

$$G_t = r_{t+1} + r_{t+2} + r_{t+3} + \cdots$$

In practice, discounted return is more common:

$$G_t = \sum_{k=0}^{\infty} \gamma^{k} r_{t+k+1},$$

where $\gamma \in [0, 1]$ is the discount factor.

9.1 Meaning of the Discount Factor

The discount factor $\gamma$ determines how much future rewards matter relative to immediate rewards. If $\gamma = 0$, the agent is purely myopic and cares only about immediate reward. If $\gamma$ is close to 1, the agent values long-term outcomes more strongly.
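To make the effect of the discount factor concrete, the sketch below computes the discounted return of one finite reward sequence for several values of the discount factor. The reward list is a made-up example, and `discounted_return` is a hypothetical helper name.

```python
def discounted_return(rewards, gamma):
    """Compute G_t = sum_k gamma^k * r_{t+k+1} for a finite list of rewards."""
    g = 0.0
    # Work backwards using the recursion G_t = r_{t+1} + gamma * G_{t+1}.
    for r in reversed(rewards):
        g = r + gamma * g
    return g


rewards = [0.0, 0.0, 0.0, 1.0]  # the only reward arrives on the fourth step
for gamma in (0.0, 0.5, 0.9, 1.0):
    print(gamma, discounted_return(rewards, gamma))
```

With a discount factor of 0 the delayed reward contributes nothing, with 0.5 it is worth 0.125 (that is, 0.5 cubed), and with 1 it counts in full.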

10. Objective of Reinforcement Learning

The objective is to find a policy π that maximizes expected return:

$$\pi^{*} = \arg\max_{\pi} \mathbb{E}[G_t \mid \pi].$$

This expectation is taken over the stochasticity of both the environment and the policy, if the policy is stochastic.

11. Markov Decision Process (MDP)

The standard mathematical framework for RL is the Markov Decision Process, defined by the tuple:

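$$(\mathcal{S}, \mathcal{A}, P, R, \gamma).$$

- $\mathcal{S}$: set of states

- $\mathcal{A}$: set of actions

- $P(s' \mid s, a)$: transition dynamics

- $R(s, a, s')$ or $\mathbb{E}[r \mid s, a, s']$: reward function

- $\gamma$: discount factor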
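For a small finite problem, the tuple can be written down directly as data. The following sketch uses a hypothetical two-state MDP; the state names, action names, probabilities, and rewards are all invented for illustration, and `check_mdp` and `expected_reward` are helper names assumed for this example.

```python
# Hypothetical two-state MDP; all names and numbers are illustrative only.
states = ["cool", "hot"]
actions = ["slow", "fast"]
gamma = 0.9

# P[(s, a)] maps each next state s' to the probability P(s' | s, a).
P = {
    ("cool", "slow"): {"cool": 1.0},
    ("cool", "fast"): {"cool": 0.5, "hot": 0.5},
    ("hot", "slow"): {"cool": 0.5, "hot": 0.5},
    ("hot", "fast"): {"hot": 1.0},
}

# R[(s, a, s')] is the reward received on that transition.
R = {
    ("cool", "slow", "cool"): 1.0,
    ("cool", "fast", "cool"): 2.0,
    ("cool", "fast", "hot"): 2.0,
    ("hot", "slow", "cool"): 1.0,
    ("hot", "slow", "hot"): 1.0,
    ("hot", "fast", "hot"): -10.0,
}


def check_mdp(states, actions, P, R):
    """Return True if every P(. | s, a) sums to 1 and every transition has a reward."""
    for s in states:
        for a in actions:
            dist = P[(s, a)]
            if abs(sum(dist.values()) - 1.0) > 1e-9:
                return False
            if any((s, a, s_next) not in R for s_next in dist):
                return False
    return True


def expected_reward(s, a):
    """E[r | s, a]: average R(s, a, s') over the next-state distribution."""
    return sum(p * R[(s, a, s_next)] for s_next, p in P[(s, a)].items())
```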

11.1 Markov Property

The Markov property says that the future depends only on the current state and action, not on the full past history:

$$P(s_{t+1} \mid s_t, a_t, s_{t-1}, a_{t-1}, \ldots) = P(s_{t+1} \mid s_t, a_t).$$

If this property holds, the current state contains all relevant information for future decision-making.

12. Policy Value Functions

To evaluate how good a policy is, RL defines value functions.

12.1 State-Value Function

The state-value function under policy π is:

$$V^{\pi}(s) = \mathbb{E}_{\pi}[G_t \mid S_t = s].$$

It measures the expected return from state $s$ when following policy $\pi$.

12.2 Action-Value Function

The action-value function under policy π is:

$$Q^{\pi}(s, a) = \mathbb{E}_{\pi}[G_t \mid S_t = s, A_t = a].$$

It measures the expected return of taking action $a$ in state $s$ and then following policy $\pi$.
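The two value functions are closely linked. Since the state value averages over the action the policy would choose in $s$, it can be written as the policy-weighted average of the action values:

$$V^{\pi}(s) = \sum_{a} \pi(a \mid s)\, Q^{\pi}(s, a).$$

For a deterministic policy this reduces to $V^{\pi}(s) = Q^{\pi}(s, \pi(s))$.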

13. Bellman Expectation Equations

The value functions satisfy recursive equations.

For the state-value function:

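$$V^{\pi}(s) = \sum_{a} \pi(a \mid s) \sum_{s', r} P(s', r \mid s, a)\,\bigl[r + \gamma V^{\pi}(s')\bigr].$$

For the action-value function:

$$Q^{\pi}(s, a) = \sum_{s', r} P(s', r \mid s, a)\,\Bigl[r + \gamma \sum_{a'} \pi(a' \mid s')\, Q^{\pi}(s', a')\Bigr].$$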

These Bellman equations are central because they express long-term value recursively in terms of immediate reward and future value.
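One way to see the recursion at work is iterative policy evaluation, which repeatedly applies the Bellman expectation equation as an update until the values stop changing. The sketch below is a minimal illustration on a hypothetical two-state MDP. The `dynamics` table, the uniform policy, and the discount factor of 0.5 are all assumptions made for the example.

```python
# Hypothetical MDP: dynamics[s][a] is a list of (probability, next_state, reward),
# i.e. a tabular encoding of P(s', r | s, a).
dynamics = {
    "A": {"stay": [(1.0, "A", 0.0)], "go": [(1.0, "B", 1.0)]},
    "B": {"stay": [(1.0, "B", 2.0)], "go": [(1.0, "A", 0.0)]},
}

# Uniform random policy: pi(a | s) = 0.5 for both actions in both states.
policy = {s: {a: 0.5 for a in dynamics[s]} for s in dynamics}


def policy_evaluation(dynamics, policy, gamma, tol=1e-10):
    """Apply the Bellman expectation backup in place until values change by < tol."""
    V = {s: 0.0 for s in dynamics}
    while True:
        delta = 0.0
        for s in dynamics:
            v_new = sum(
                policy[s][a]
                * sum(p * (r + gamma * V[s_next]) for p, s_next, r in outcomes)
                for a, outcomes in dynamics[s].items()
            )
            delta = max(delta, abs(v_new - V[s]))
            V[s] = v_new
        if delta < tol:
            return V


V = policy_evaluation(dynamics, policy, gamma=0.5)
```

For this particular example the two Bellman equations can also be solved by hand, giving a value of 1.25 for state A and 1.75 for state B, which the iteration approaches.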

14. Optimal Value Functions

The optimal state-value function is:

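$$V^{*}(s) = \max_{\pi} V^{\pi}(s).$$

The optimal action-value function is:

$$Q^{*}(s, a) = \max_{\pi} Q^{\pi}(s, a).$$

These satisfy the Bellman optimality equations:

$$V^{*}(s) = \max_{a} \sum_{s', r} P(s', r \mid s, a)\,\bigl[r + \gamma V^{*}(s')\bigr]$$

and

$$Q^{*}(s, a) = \sum_{s', r} P(s', r \mid s, a)\,\bigl[r + \gamma \max_{a'} Q^{*}(s', a')\bigr].$$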
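When the dynamics are known and the state space is small, the Bellman optimality equation can be turned into an update rule, commonly known as value iteration. The following is a minimal sketch on a hypothetical two-state MDP. The `dynamics` table and the discount factor of 0.5 are assumptions chosen so the result is easy to check by hand.

```python
# Hypothetical MDP: dynamics[s][a] is a list of (probability, next_state, reward).
dynamics = {
    "A": {"stay": [(1.0, "A", 0.0)], "go": [(1.0, "B", 1.0)]},
    "B": {"stay": [(1.0, "B", 2.0)], "go": [(1.0, "A", 0.0)]},
}


def backup(V, outcomes, gamma):
    """Expected one-step return: sum over (s', r) of P * [r + gamma * V(s')]."""
    return sum(p * (r + gamma * V[s_next]) for p, s_next, r in outcomes)


def value_iteration(dynamics, gamma, tol=1e-10):
    """Iterate V(s) <- max_a backup(s, a) until convergence; return (V, Q)."""
    V = {s: 0.0 for s in dynamics}
    while True:
        delta = 0.0
        for s in dynamics:
            v_new = max(backup(V, outcomes, gamma) for outcomes in dynamics[s].values())
            delta = max(delta, abs(v_new - V[s]))
            V[s] = v_new
        if delta < tol:
            break
    # Recover action values from the converged state values.
    Q = {s: {a: backup(V, o, gamma) for a, o in dynamics[s].items()} for s in dynamics}
    return V, Q


V_star, Q_star = value_iteration(dynamics, gamma=0.5)
```

In this example, staying in B earns 2 per step forever, so the optimal value of B is 2 / (1 - 0.5) = 4, and the optimal value of A is 1 + 0.5 × 4 = 3, obtained by moving to B immediately.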

15. Policy Improvement

If the agent knows $Q^{\pi}(s, a)$, then a policy that is at least as good as $\pi$ can be obtained by choosing actions greedily:

$$\pi'(s) = \arg\max_{a} Q^{\pi}(s, a).$$

This principle underlies many RL algorithms: estimate values, then improve the policy using those values.
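In tabular form, the greedy improvement step is a one-line lookup per state. The Q-values below are hypothetical numbers used only to illustrate the operation, and `greedy_policy` is an assumed helper name.

```python
# Hypothetical action-value table: Q[state][action] = estimated Q^pi(s, a)
Q = {
    "A": {"stay": 0.625, "go": 1.875},
    "B": {"stay": 2.875, "go": 0.625},
}


def greedy_policy(Q):
    """Return the deterministic policy pi'(s) = argmax_a Q(s, a)."""
    return {s: max(q_s, key=q_s.get) for s, q_s in Q.items()}


improved = greedy_policy(Q)
```

If two actions tie, `max` returns the first one encountered, so tie-breaking here depends on dictionary order.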

16. Exploration vs Exploitation

One of the defining tensions in RL is the exploration-exploitation trade-off.

- Exploitation: choose the action currently believed to be best

- Exploration: try other actions to gather information that might lead to higher long-term reward

If the agent exploits too early, it may get stuck with a suboptimal policy. If it explores too much, it may fail to capitalize on what it has already learned.

16.1 Epsilon-Greedy Example

A simple exploration strategy is epsilon-greedy:

- with probability $1 - \varepsilon$, choose the greedy action

- with probability $\varepsilon$, choose a random action

This balances exploration and exploitation in a simple but effective way.
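The rule above can be sketched as a short function. This is one common reading of epsilon-greedy, in which the random branch draws uniformly from all actions, including the greedy one. The function name and the Q-value dictionary format are assumptions for this example.

```python
import random


def epsilon_greedy(q_values, epsilon, rng):
    """Pick an action given q_values, a dict mapping action -> estimated value."""
    if rng.random() < epsilon:
        return rng.choice(list(q_values))   # explore: uniform random action
    return max(q_values, key=q_values.get)  # exploit: current greedy action


rng = random.Random(0)
q_values = {"left": 0.1, "right": 0.7}  # hypothetical estimates for one state
action = epsilon_greedy(q_values, epsilon=0.1, rng=rng)
```

Because the random draw can also land on the greedy action, the greedy action is chosen with overall probability $1 - \varepsilon + \varepsilon / |\mathcal{A}|$ under this variant.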

17. Model-Based vs Model-Free RL

RL methods can be grouped broadly into:

- Model-based: the agent uses or learns transition and reward models $P(s' \mid s, a)$ and $R$

- Model-free: the agent learns directly from experience without explicitly modeling the environment

This whitepaper focuses on basics rather than detailed algorithms, but this distinction is foundational.

18. Reward Shaping and Credit Assignment

In RL, rewards may be delayed. An action taken now may affect outcomes much later. This creates the credit assignment problem: how should the system assign responsibility to earlier actions for later rewards?

Reward shaping can help by providing intermediate feedback, but if done poorly, it may alter the behavior being optimized in unintended ways.

19. Sparse Rewards

Many real-world RL tasks involve sparse rewards, where meaningful feedback is rare. For example, an agent may receive reward only when it reaches a goal. Sparse reward problems are hard because the agent may need long exploratory sequences before receiving any learning signal.

20. Episodic vs Continuing Tasks

In episodic tasks, the environment eventually reaches a terminal state, and the return is naturally finite. In continuing tasks, there may be no terminal state, so discounting with $\gamma < 1$ helps ensure that the return remains bounded:

$$G_t = \sum_{k=0}^{\infty} \gamma^{k} r_{t+k+1}.$$
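The boundedness claim follows from the geometric series. As a short worked step, assume every reward satisfies $|r_t| \le R_{\max}$ for some finite constant $R_{\max}$ (a symbol introduced here for illustration). Then for $0 \le \gamma < 1$:

$$|G_t| \le \sum_{k=0}^{\infty} \gamma^{k}\, |r_{t+k+1}| \le R_{\max} \sum_{k=0}^{\infty} \gamma^{k} = \frac{R_{\max}}{1 - \gamma}.$$

For example, with $R_{\max} = 1$ and $\gamma = 0.9$, the return can never exceed 10 in magnitude. With $\gamma = 1$ the series need not converge, which is why undiscounted returns are normally reserved for episodic tasks.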

21. Stochastic Environments

RL often operates in stochastic environments, where the same action in the same state may produce different next states or rewards. This means the agent must reason in expectation rather than assuming deterministic control.

22. Partial Observability

In some settings, the agent does not observe the full state, only an observation $o_t$. This leads to partially observable problems, often modeled as partially observable Markov decision processes (POMDPs).

In such cases, memory or belief-state reasoning may be required.

23. Example Intuition: Game Playing

Consider an agent playing a game. A move may not produce immediate reward, but it may position the agent better for future gains. RL is suitable here because it learns strategies that optimize cumulative reward over the whole game, not just immediate payoffs.

24. Example Intuition: Robotics

In robotics, an action such as applying torque changes the robot’s future state. RL is useful because the agent must learn sequences of control decisions that maximize long-term performance, such as balance, speed, accuracy, or energy efficiency.

25. Example Intuition: Recommender Systems

In interactive recommendation, the system’s action affects what users see, which in turn affects future user behavior. RL can model such feedback loops better than one-step prediction methods because it accounts for delayed consequences of recommendations.

26. Challenges in Reinforcement Learning

- delayed rewards

- exploration difficulty

- sparse feedback

- nonstationary data distribution caused by the agent’s own evolving policy

- credit assignment across long action sequences

- sample inefficiency in many environments

27. RL Compared with Supervised Learning

In supervised learning, the correct target is given directly. In RL, the correct action is not given; the agent must infer useful behavior from reward signals. In supervised learning, data is often assumed fixed. In RL, the agent’s policy affects the data distribution it experiences.

28. RL Compared with Bandits

Multi-armed bandits are a simplified form of sequential decision-making where actions yield rewards but do not affect future states. RL is more general because actions change the future state distribution, making long-term planning essential.

29. Good Policies and Optimal Policies

A policy is good if it yields high expected return. A policy is optimal if no other policy has higher expected return from any state of interest. In practice, many algorithms aim not for exact optimality, which may be computationally infeasible, but for sufficiently strong approximate policies.

30. Practical Foundations for Advanced RL

Almost all advanced RL methods build on the core concepts introduced here:

- agents and environments

- state, action, and reward signals

- policies as behavior strategies

- value functions as evaluators of future return

- Bellman recursions as the backbone of sequential reasoning

- exploration and exploitation balance

31. Best Practices for Conceptual Understanding

- Think in terms of long-term return, not just immediate reward.

- Always ask whether the current state representation contains enough information for decision-making.

- Treat reward design as part of the problem definition, not just an implementation detail.

- Distinguish clearly between the policy, the reward signal, and the value functions.

- Remember that exploration is necessary because the best action is initially unknown.

32. Conclusion

Reinforcement Learning provides a formal framework for learning through interaction. Its defining elements — agents, environments, rewards, and policies — support decision-making in settings where actions have delayed and uncertain consequences. The central challenge is to learn a policy that maximizes cumulative reward over time rather than just immediate payoff.

Understanding RL basics means understanding how return, value, and policy interact inside the Markov Decision Process framework. It also means recognizing the practical difficulty of exploration, delayed credit assignment, and reward design. These basic ideas are the foundation for more advanced topics such as temporal-difference learning, Q-learning, policy gradients, actor-critic methods, model-based planning, and deep reinforcement learning.