Machine learning systems are not defined by code alone. They are defined by the combination of code, data, features, hyperparameters, environment, model artifacts, evaluation results, and deployment lineage. Versioning these components is essential for reproducibility, auditability, experimentation, rollback, and operational trust. This whitepaper explains the technical foundations of data and model versioning, with a special focus on DVC and MLflow.

Abstract

In traditional software engineering, source code version control systems such as Git make it possible to track changes, reproduce releases, collaborate safely, and revert mistakes. Machine learning systems, however, introduce additional stateful assets that are often much larger and more dynamic than code: datasets, feature tables, model checkpoints, experiment metrics, training configurations, and deployment metadata. This paper explains why code-only versioning is insufficient for ML, and how modern MLOps practices extend version control to data and models. It covers concepts such as lineage, reproducibility, experiment tracking, artifact registries, immutable references, staged promotion, and governance. It then explains how DVC supports data and pipeline versioning, and how MLflow supports experiment tracking, model packaging, and model registry workflows. All formulas are embedded inline in HTML-friendly format for direct use in WordPress or similar editors.

1. Introduction

Let a trained model artifact be denoted by M. In a realistic ML system, M is a function not only of source code, but of:

- training dataset D

- feature transformation logic φ

- hyperparameters λ

- random seed s

- training code version C

- runtime environment E

Conceptually, the resulting model may be written as:

M = Train(D, φ, λ, s, C, E).

If any of these inputs change, the resulting model may differ. This is why robust ML systems require versioning beyond code alone.
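One way to make this dependence concrete is to give every training run a fingerprint derived from all six inputs. The following is a minimal sketch, not a feature of any particular tool: the `TrainingSpec` class, its field names, and the example values are hypothetical, and SHA-256 over canonical JSON is just one reasonable choice.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class TrainingSpec:
    """Hypothetical record of every input to Train(D, φ, λ, s, C, E)."""
    data_hash: str          # D: content hash of the dataset snapshot
    feature_version: str    # φ: version of the feature transformation logic
    hyperparameters: tuple  # λ: (name, value) pairs
    seed: int               # s: random seed
    code_commit: str        # C: e.g. a Git commit hash
    environment: str        # E: e.g. a lock-file hash or image digest

    def fingerprint(self) -> str:
        # Canonical JSON (sorted keys) so that equal specs hash equally.
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


# All values below are made up for illustration.
base = TrainingSpec(
    data_hash="dataset-hash-001",
    feature_version="features-v3",
    hyperparameters=(("learning_rate", 0.1), ("max_depth", 6)),
    seed=42,
    code_commit="a1b2c3d",
    environment="env-lock-001",
)
same = replace(base)              # identical copy
reseeded = replace(base, seed=43)  # only the seed differs

print(base.fingerprint() == same.fingerprint())      # True
print(base.fingerprint() == reseeded.fingerprint())  # False
```

Changing any single field, even only the seed, yields a different fingerprint, which mirrors the point that a change in any input may produce a different model.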

2. Why Code Versioning Alone Is Not Enough

Git works extremely well for source code and small configuration files, but machine learning introduces additional challenges:

- datasets are large and change over time

- model files can be large binary artifacts

- metrics and parameters from experiments must be compared systematically

- the same code can produce different models under different data or hyperparameters

- deployed models need stage control and rollback logic

Therefore, ML versioning requires artifacts and metadata that Git alone does not manage conveniently.

3. Reproducibility as a Versioning Objective

One of the primary goals of data and model versioning is reproducibility. Ideally, given a reference to a prior run, one should be able to reconstruct:

- which code version was used

- which exact dataset snapshot was used

- which hyperparameters were used

- which model artifact was produced

- which metrics were observed

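If two runs use the same full specification (D, φ, λ, s, C, E) and produce models M_1 and M_2, then reproducibility seeks:

M_1 = Train(D, φ, λ, s, C, E) ≈ Train(D, φ, λ, s, C, E) = M_2.

In practice, bitwise-identical results may still be out of reach because of hardware differences and numerically nondeterministic operations, but strong versioning removes untracked inputs as a source of variation and greatly reduces uncertainty.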
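The role of the seed can be illustrated with a toy training routine. This is a sketch under simplifying assumptions: the `train` function, the data points, and the hyperparameter values are invented for illustration, and the pure-Python arithmetic here avoids the hardware-level nondeterminism that real frameworks may exhibit.

```python
import random


def train(data, learning_rate, epochs, seed):
    """Toy stand-in for Train: fits y = w * x by stochastic gradient descent."""
    rng = random.Random(seed)        # all randomness flows from the seed
    w = rng.uniform(-1.0, 1.0)       # random initialisation
    for _ in range(epochs):
        order = list(range(len(data)))
        rng.shuffle(order)           # random sample order in each epoch
        for i in order:
            x, y = data[i]
            # Gradient step on the squared error (w * x - y) ** 2.
            w -= learning_rate * 2 * (w * x - y) * x
    return w


data = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9)]

w_a = train(data, learning_rate=0.01, epochs=50, seed=7)
w_b = train(data, learning_rate=0.01, epochs=50, seed=7)
w_c = train(data, learning_rate=0.01, epochs=50, seed=8)

print(w_a == w_b)  # True: identical specification, identical result
print(w_a, w_c)    # a different seed typically gives a slightly different weight
```

With every input fixed, the two runs agree exactly. In a real system the same discipline narrows the gap to whatever nondeterminism the hardware and libraries introduce.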

4. Lineage

Lineage is the traceable chain connecting inputs, transformations, outputs, and downstream usage. For a model artifact M, lineage should answer:

- which dataset version produced it

- which feature pipeline produced the training table

- which training run and configuration produced the artifact

- which evaluation metrics justified its promotion

- which deployment endpoint or batch job uses it

Strong lineage supports debugging, audits, rollback, and regulatory accountability.
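Lineage questions of this kind reduce to walking a dependency graph. The sketch below assumes a hypothetical in-memory graph in which each artifact maps to the artifacts it was derived from; the identifiers are invented, and a production system would store these edges in a metadata service rather than a dictionary.

```python
# Hypothetical lineage graph: artifact -> artifacts it was derived from.
LINEAGE = {
    "endpoint:churn-api": ["model:churn:17"],
    "model:churn:17": ["run:2f9c", "eval:report-17"],
    "eval:report-17": ["run:2f9c"],
    "run:2f9c": ["table:features-v3", "code:a1b2c3d"],
    "table:features-v3": ["dataset:raw-snapshot-5", "pipeline:features-v3"],
}


def upstream(artifact, graph):
    """Return every ancestor of `artifact`, in depth-first discovery order."""
    seen = []
    stack = list(graph.get(artifact, []))
    while stack:
        node = stack.pop()
        if node not in seen:
            seen.append(node)
            stack.extend(graph.get(node, []))
    return seen


ancestors = upstream("endpoint:churn-api", LINEAGE)
for name in ancestors:
    print(name)
```

Starting from the deployment endpoint, the traversal reaches the model version, the run, the evaluation report, the feature table, the code commit, and the raw dataset snapshot, which is the information an audit or a rollback decision needs.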

5. Data Versioning Basics

Data versioning means maintaining identifiable snapshots or references to datasets over time. Let the evolving dataset be:

D(1), D(2), ..., D(t).

A versioned ML pipeline should know exactly which dataset snapshot D(t) was used in a given experiment.

Dataset changes may include:

- new rows

- corrected values

- schema changes

- new labels

- feature recalculations

6. Model Versioning Basics

Model versioning means assigning persistent identifiers to trained model artifacts and their associated metadata. A model version should not be treated as just a filename. It should be tied to:

- training run ID

- dataset version

- code commit

- hyperparameters

- environment specification

- evaluation metrics

- deployment stage

One may think of a model version as:

V_M = (artifact, metadata, lineage, stage).

7. Immutable vs Mutable References

Good versioning systems distinguish immutable version identifiers from mutable aliases. For example:

- immutable: exact model version 17, exact dataset hash, exact run ID

- mutable: “production”, “staging”, “latest”, “candidate”

Immutable references are necessary for exact reproducibility. Mutable aliases are convenient for operational routing.
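The distinction can be sketched as two layers: a write-once table of versions and a re-pointable table of aliases. The `ModelRegistry` class and the artifact hashes below are hypothetical and are not the API of any specific registry product.

```python
class ModelRegistry:
    """Toy registry: immutable version numbers plus mutable aliases."""

    def __init__(self):
        self._versions = {}  # version number -> artifact hash (never rewritten)
        self._aliases = {}   # alias -> version number (may be re-pointed)

    def register(self, artifact_hash):
        version = len(self._versions) + 1
        self._versions[version] = artifact_hash
        return version

    def set_alias(self, alias, version):
        if version not in self._versions:
            raise KeyError(f"unknown version {version}")
        self._aliases[alias] = version

    def resolve(self, ref):
        """Accept an alias or a version number; return (version, artifact_hash)."""
        version = self._aliases.get(ref, ref)
        return version, self._versions[version]


registry = ModelRegistry()
v1 = registry.register("hash-a")
v2 = registry.register("hash-b")

registry.set_alias("production", v1)
before = registry.resolve("production")  # (1, 'hash-a')

registry.set_alias("production", v2)     # the alias moves
after = registry.resolve("production")   # (2, 'hash-b')
pinned = registry.resolve(v1)            # (1, 'hash-a'): the version never changes

print(before, after, pinned)
```

A reproducibility record should store the pinned version number, because "production" answers a different question today than it did last month.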

8. Experiment Tracking

Experiment tracking is the structured recording of training runs, including:

- parameters

- metrics

- artifacts

- notes or tags

- source commit references

If run r produces metrics m_1, m_2, ..., m_k, experiment tracking stores:

Run(r) = (λ, metrics, artifacts, lineage).

This enables comparison, selection, and reproducibility across many trials.
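As an illustration of what "structured recording" means, the sketch below appends one JSON record per run to a log file. It is a deliberately simple stand-in for a tracking server: the `log_run` and `load_runs` helpers, the field names, and the example values are all hypothetical.

```python
import json
import os
import tempfile
import time
import uuid


def log_run(log_path, params, metrics, artifacts, commit):
    """Append one run record to a JSON-lines file and return its run ID."""
    record = {
        "run_id": uuid.uuid4().hex,
        "timestamp": time.time(),
        "params": params,
        "metrics": metrics,
        "artifacts": artifacts,
        "source_commit": commit,
    }
    with open(log_path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record, sort_keys=True) + "\n")
    return record["run_id"]


def load_runs(log_path):
    """Read every recorded run back, oldest first."""
    with open(log_path, encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


log_path = os.path.join(tempfile.mkdtemp(), "runs.jsonl")
run_a = log_run(log_path, {"lr": 0.1}, {"f1": 0.81}, ["model.pkl"], "a1b2c3d")
run_b = log_run(log_path, {"lr": 0.01}, {"f1": 0.84}, ["model.pkl"], "a1b2c3d")

runs = load_runs(log_path)
print(len(runs))  # 2
```

The append-only design matters more than the file format: past runs are never edited, so later comparisons always see what was actually recorded.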

9. Artifact Storage

Model artifacts are often large binary objects such as:

- serialized tree ensembles

- deep learning checkpoints

- tokenizers and preprocessors

- feature encoders

- evaluation reports

Versioning systems therefore typically store metadata in one place and large artifacts in dedicated remote storage such as object stores or artifact repositories.

10. Data Lineage and Feature Lineage

If the processed training table is:

X = φ(D),

then both the dataset version and the transformation version matter. Two training runs using the same raw data but different feature logic are not equivalent.

Therefore, versioning should include:

- raw data snapshot reference

- processing pipeline version

- feature schema version

- materialized feature table version if applicable

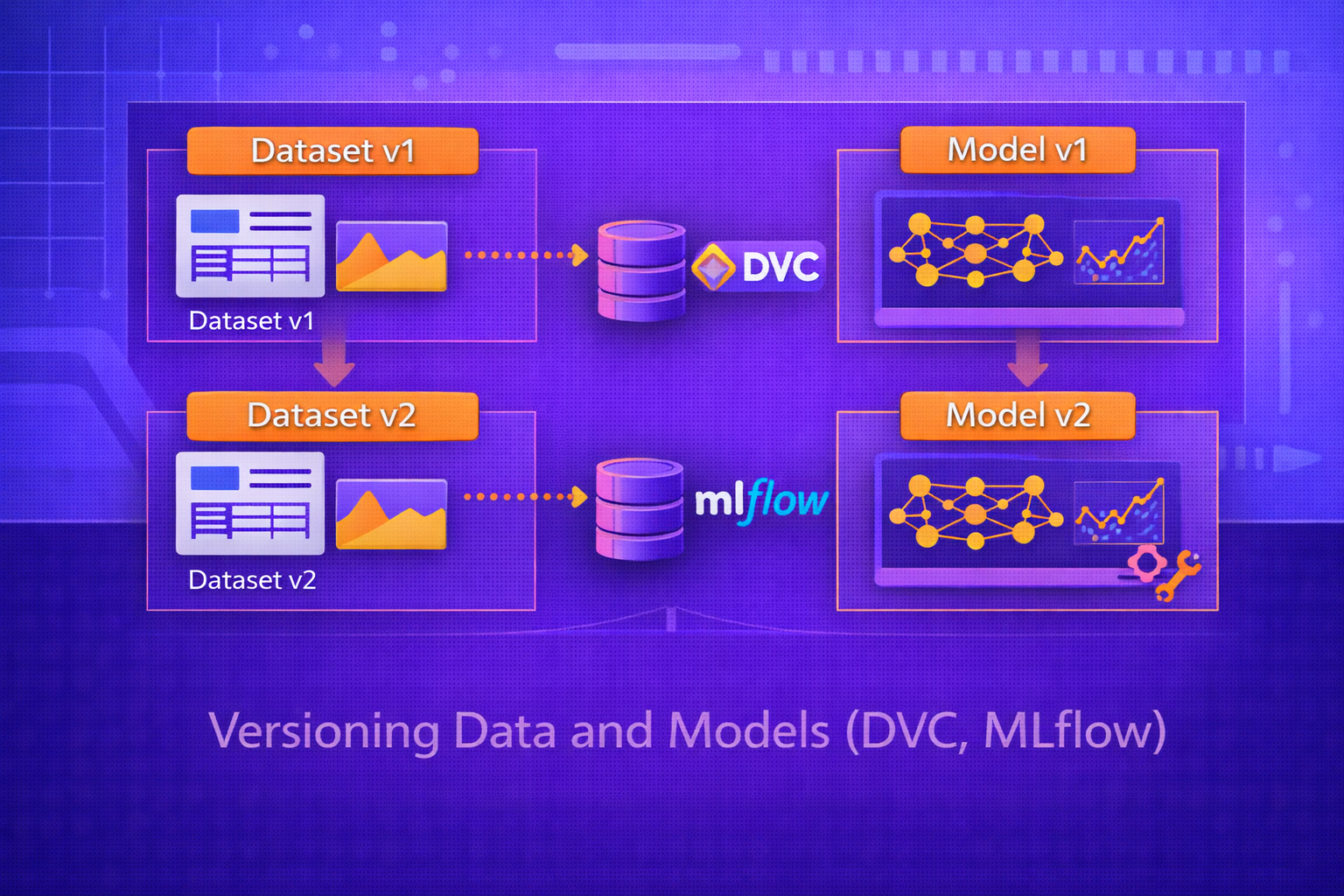

11. DVC: Data Version Control

DVC is a tool designed to bring Git-like principles to data, pipelines, and ML artifacts. It does not replace Git; rather, it complements Git by versioning large files and pipeline dependencies through lightweight metadata stored in the repository, while actual data is stored in local or remote storage.

11.1 Core Idea of DVC

DVC tracks data artifacts using metadata files and content hashes. Instead of storing large files directly in Git, DVC stores references to them. A file or directory version can therefore be identified by a content hash h(D).

Conceptually:

Version(D) = hash(contents(D)).

If the underlying data changes, the hash changes, and the version reference changes.
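The idea of content-derived versions can be demonstrated without DVC itself. The sketch below is not DVC's implementation: the `content_hash` helper, the file name, and the choice of SHA-256 are assumptions made for illustration.

```python
import hashlib
import os
import tempfile


def content_hash(path, chunk_size=1 << 20):
    """Hash a file's bytes in chunks so large files need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


path = os.path.join(tempfile.mkdtemp(), "train.csv")
original = "id,label\n1,0\n2,1\n"

with open(path, "w", encoding="utf-8", newline="") as f:
    f.write(original)
h1 = content_hash(path)

with open(path, "a", encoding="utf-8", newline="") as f:
    f.write("3,1\n")             # a new row arrives
h2 = content_hash(path)

with open(path, "w", encoding="utf-8", newline="") as f:
    f.write(original)            # restore the earlier contents
h3 = content_hash(path)

print(h1 == h2)  # False: different contents, different version
print(h1 == h3)  # True: same contents, same version
```

Because the identifier depends only on the bytes, a small pointer containing the hash is enough to commit to Git, while the file itself can live in a cache or remote store.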

11.2 DVC and Remote Storage

Actual data artifacts are usually stored in remote locations such as:

- S3-compatible object stores

- Azure Blob Storage

- Google Cloud Storage

- shared filesystems

Git stores the metadata pointers; DVC stores or retrieves the large artifacts from remote storage.

11.3 DVC Pipeline Stages

DVC can also version pipelines as stages with explicit dependencies and outputs. A simplified stage representation includes:

- command to run

- input dependencies

- output artifacts

If stage S transforms input A into output B, DVC records:

S : A → B.

When dependencies change, downstream stages can be recomputed systematically.
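The recomputation logic can be sketched as a propagation over stage dependencies. This is a conceptual model only, with hypothetical stage and file names; it conservatively treats every output of a rerun stage as changed and does not reproduce DVC's actual scheduling or file formats.

```python
# Hypothetical pipeline: each stage lists the files it reads and writes.
STAGES = {
    "prepare": {"deps": ["raw.csv"], "outs": ["clean.csv"]},
    "featurize": {"deps": ["clean.csv"], "outs": ["features.csv"]},
    "train": {"deps": ["features.csv", "params.yaml"], "outs": ["model.pkl"]},
    "profile": {"deps": ["raw.csv"], "outs": ["profile.html"]},
}


def stale_stages(stages, changed):
    """Return the stages that must rerun, given a set of changed files."""
    dirty = set(changed)
    stale = []
    progress = True
    while progress:
        progress = False
        for name, stage in stages.items():
            if name not in stale and dirty.intersection(stage["deps"]):
                stale.append(name)
                # Outputs of a rerun stage count as changed for later stages.
                dirty.update(stage["outs"])
                progress = True
    return stale


print(stale_stages(STAGES, {"params.yaml"}))  # ['train']
print(stale_stages(STAGES, {"clean.csv"}))    # ['featurize', 'train']
print(stale_stages(STAGES, {"raw.csv"}))      # all four stages
```

Editing only the hyperparameter file reruns training alone, while a change to the raw data invalidates everything downstream of it.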

11.4 Reproducibility with DVC

Because DVC tracks both the data artifact references and the pipeline structure, it becomes possible to recreate previous training or processing states as long as the referenced data and code remain available.

12. MLflow

MLflow is a platform for managing the machine learning lifecycle, especially:

- experiment tracking

- artifact logging

- model packaging

- model registry workflows

While DVC is particularly strong in data and pipeline versioning, MLflow is particularly strong in run tracking and model lifecycle management.

13. MLflow Tracking

MLflow Tracking records each experiment run as a structured entity containing:

- parameters

- metrics

- tags

- artifacts

If run r uses hyperparameters λ and produces metric vector m, then MLflow conceptually stores:

Run(r) = (λ, m, artifacts, tags).

This makes it easy to compare experiments across many runs.

14. Parameter and Metric Logging

During training, one may log:

- learning rate

- regularization strength

- tree depth

- batch size

- accuracy, loss, F1, AUC, RMSE, or other metrics

If validation F1 for run r is F1(r), a selection step may look for:

r* = argmax_r F1(r).
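Once runs are recorded with their metrics, the selection step is a one-line maximisation. The sketch below uses plain dictionaries with invented run IDs and scores; it does not call a tracking API, and the `best_run` helper is hypothetical.

```python
# Hypothetical run records, as they might be exported from a tracking store.
runs = [
    {"run_id": "r1", "params": {"max_depth": 4}, "metrics": {"val_f1": 0.81}},
    {"run_id": "r2", "params": {"max_depth": 6}, "metrics": {"val_f1": 0.84}},
    {"run_id": "r3", "params": {"max_depth": 8}, "metrics": {"val_f1": 0.83}},
    {"run_id": "r4", "params": {"max_depth": 10}, "metrics": {}},  # crashed run
]


def best_run(runs, metric):
    """Return the run with the highest value of `metric` (the argmax)."""
    scored = [r for r in runs if metric in r["metrics"]]
    if not scored:
        raise ValueError(f"no run logged the metric {metric!r}")
    # On ties, max() keeps the earliest run in the list.
    return max(scored, key=lambda r: r["metrics"][metric])


winner = best_run(runs, "val_f1")
print(winner["run_id"], winner["params"])  # r2 {'max_depth': 6}
```

Runs that never logged the metric are skipped rather than treated as zero, so a crashed run cannot be mistaken for a poor but valid one.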

15. MLflow Artifacts

MLflow also stores artifacts such as:

- trained model files

- plots

- confusion matrices

- feature importance reports

- prediction samples

- evaluation JSON files

These are associated with the run so that outputs remain organized and auditable.

16. MLflow Models

MLflow defines a packaging format for models that includes both the model artifact and metadata describing how it can be served or loaded. This helps standardize deployment across different model flavors and runtimes.

A packaged model may be thought of as:

M = (weights, flavor, environment, signature, metadata).

17. MLflow Model Registry

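The MLflow Model Registry provides lifecycle management for versioned models. A registered model may have multiple versions, and each version can be assigned a promotion stage such as:

- None (the default for a newly registered version, often treated as a candidate)

- Staging

- Production

- Archived

This helps teams manage approval flows, rollback, and operational state transitions. Recent MLflow releases deprecate these fixed stages in favor of model version aliases and tags, so the stage names above are best read as a workflow pattern rather than a guaranteed API.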

18. DVC vs MLflow

DVC and MLflow solve overlapping but distinct problems.

18.1 DVC Strengths

- data versioning

- pipeline dependency tracking

- Git-integrated reproducibility

- large artifact references via remote storage

18.2 MLflow Strengths

- experiment tracking

- metric comparison across runs

- artifact logging

- model packaging and registry workflows

18.3 Complementary Usage

In practice, many teams use them together. DVC can track datasets and processing pipeline versions, while MLflow can track training runs, metrics, and model promotion history.

19. Versioning Granularity

Effective ML versioning often requires separate but connected identifiers for:

- raw dataset snapshot

- processed dataset snapshot

- feature definition version

- training code commit

- experiment run ID

- model version ID

- deployment version

Granularity matters because different failure modes require rollback at different layers.

20. Model Promotion and Governance

Not every trained model should become a deployed model. A robust workflow uses promotion gates such as:

- metric thresholds

- bias or fairness checks

- latency tests

- drift simulation

- human approval

If candidate model M_new must outperform current production model M_prod, one may require:

Score(M_new) > Score(M_prod) + δ,

where δ > 0 is a margin large enough to rule out differences caused by evaluation noise.
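A promotion gate of this form can be written as a function that evaluates each check and reports which ones failed. The sketch below is illustrative: the `may_promote` helper, the field names, and the threshold values are assumptions, and a real gate would include whichever fairness, drift, and approval checks the team has defined.

```python
def may_promote(candidate, production, delta, max_latency_ms, approved):
    """Evaluate promotion checks; return (decision, per-check results)."""
    checks = {
        # Score(M_new) > Score(M_prod) + delta
        "score_margin": candidate["score"] > production["score"] + delta,
        "latency": candidate["latency_ms"] <= max_latency_ms,
        "human_approval": approved,
    }
    return all(checks.values()), checks


production = {"score": 0.84, "latency_ms": 35}
strong = {"score": 0.86, "latency_ms": 38}
marginal = {"score": 0.845, "latency_ms": 30}

ok_strong, detail_strong = may_promote(
    strong, production, delta=0.01, max_latency_ms=50, approved=True
)
ok_marginal, detail_marginal = may_promote(
    marginal, production, delta=0.01, max_latency_ms=50, approved=True
)

print(ok_strong)                        # True
print(ok_marginal)                      # False
print(detail_marginal["score_margin"])  # False: the gain is within the margin
```

Returning the individual check results alongside the decision gives the registry something concrete to store as the justification for a promotion or a rejection.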

21. Rollback

Versioning is only truly valuable if rollback is possible. When a deployed model underperforms or causes operational issues, teams should be able to return quickly to a previous trusted version:

M_prod := M_old.

This requires that old artifacts, metadata, and compatible preprocessing logic all remain accessible.
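The accessibility requirement can be made explicit in the rollback logic itself. The following sketch assumes a hypothetical deployment history and sets of still-available artifacts and preprocessors; the `rollback_target` helper and all identifiers are invented for illustration.

```python
def rollback_target(history, artifacts, preprocessors):
    """Pick the most recent earlier deployment that can be fully restored.

    `history` lists deployments oldest first; the last entry is the
    version currently in production.
    """
    for record in reversed(history[:-1]):
        has_artifact = record["artifact"] in artifacts
        has_preprocessor = record["preprocessor"] in preprocessors
        if has_artifact and has_preprocessor:
            return record
    raise LookupError("no earlier version is fully restorable")


history = [
    {"version": 15, "artifact": "a15", "preprocessor": "p2"},
    {"version": 16, "artifact": "a16", "preprocessor": "p3"},
    {"version": 17, "artifact": "a17", "preprocessor": "p3"},  # current
]

# Suppose the artifact for version 16 was overwritten or deleted.
available_artifacts = {"a15", "a17"}
available_preprocessors = {"p2", "p3"}

target = rollback_target(history, available_artifacts, available_preprocessors)
print(target["version"])  # 15
```

Version 16 would be the natural choice, but its artifact is gone, so the rollback has to reach further back. Retention policy therefore bounds how recent a rollback can be.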

22. Environment Versioning

Reproducing a model requires more than code and data. It also requires environment consistency:

- Python version

- library versions

- CUDA or hardware dependencies

- OS-level dependencies

If the runtime environment is denoted by E, then model reproducibility depends on versioning E alongside code and data.
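A lightweight way to version E is to capture the interpreter, platform, and package versions at training time and store them with the run. The sketch below uses only the Python standard library; the `capture_environment` and `environment_hash` helpers and the package list are illustrative assumptions, and the snapshot does not cover CUDA or OS-level libraries, which usually call for a container image digest or a lock file.

```python
import hashlib
import json
import platform
from importlib import metadata


def capture_environment(packages):
    """Record interpreter, platform, and installed versions of `packages`."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # not installed in this environment
    return {
        "python": platform.python_version(),
        "implementation": platform.python_implementation(),
        "os": platform.system(),
        "machine": platform.machine(),
        "packages": versions,
    }


def environment_hash(env):
    """Stable identifier for an environment snapshot."""
    payload = json.dumps(env, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


# The package names are examples; list whatever the training code imports.
env = capture_environment(["numpy", "scikit-learn"])
print(json.dumps(env, indent=2, sort_keys=True))
print(environment_hash(env))
```

Storing the hash with each run makes it easy to see whether two runs that disagree were also trained in different environments.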

23. Auditability

In regulated or high-stakes environments, teams may need to answer questions such as:

- which data trained the deployed model on a given date

- what metrics justified promotion

- who approved the promotion

- which feature transformations were active

Good versioning systems make such audit trails possible.

24. Common Failure Modes Without Proper Versioning

- unable to reproduce a model that is already in production

- unclear difference between two seemingly similar runs

- data drift unnoticed because dataset snapshots were not tracked

- inference errors because preprocessing version mismatched training

- rollback impossible because artifacts were overwritten

- team confusion about which model is authoritative

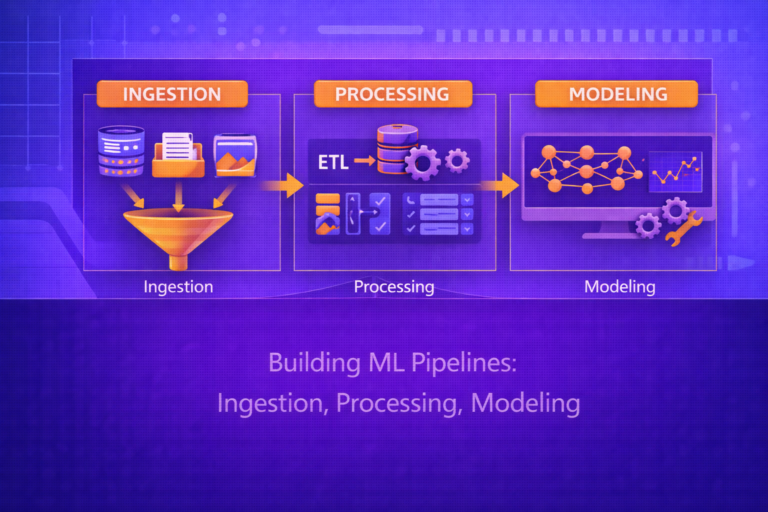

25. Practical MLOps Pattern

A practical versioning workflow often looks like this:

- Git tracks code and lightweight config

- DVC tracks datasets and pipeline outputs

- MLflow tracks training runs and model artifacts

- Model registry manages promotion and deployment stages

This creates a layered, traceable lifecycle from raw data to production model.

26. Evaluation Metrics and Versioned Comparison

Model versioning is only useful if versions can be compared objectively. Standard metrics include:

Accuracy = (TP + TN)/(TP + TN + FP + FN),

Precision = TP/(TP + FP),

Recall = TP/(TP + FN),

F1 = 2(Precision × Recall)/(Precision + Recall),

and regression metrics such as RMSE.
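As a worked example of these formulas, the sketch below computes each metric from confusion-matrix counts. The counts and the helper names are made up for illustration, and returning 0.0 when a denominator is zero is a convention chosen here, not a universal rule.

```python
import math


def classification_metrics(tp, tn, fp, fn):
    """Accuracy, precision, recall, and F1 from confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return {
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


def rmse(y_true, y_pred):
    """Root mean squared error for regression outputs."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))


# Illustrative counts: TP = 40, TN = 45, FP = 5, FN = 10.
scores = classification_metrics(tp=40, tn=45, fp=5, fn=10)
for name, value in scores.items():
    print(f"{name}: {value:.4f}")
# accuracy: 0.8500, precision: 0.8889, recall: 0.8000, f1: 0.8421

error = rmse([3.0, 5.0], [2.0, 7.0])  # sqrt((1 + 4) / 2)
print(f"rmse: {error:.4f}")
```

Computing the metrics with the same function for every model version keeps the comparison consistent, since differences in metric definitions are a common source of misleading results.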

Comparison should also include:

- latency

- model size

- resource cost

- fairness metrics

- robustness metrics

27. Strengths of DVC

- strong Git-compatible data versioning workflow

- pipeline dependency tracking

- reproducible artifact retrieval

- suitable for teams already using Git-centric workflows

28. Strengths of MLflow

- excellent experiment tracking

- strong run comparison and metric logging

- model packaging standardization

- clear model registry workflow for staging and production

29. Limitations and Trade-Offs

- DVC is not a full model registry by itself

- MLflow does not replace full data versioning systems

- versioning discipline still depends on process design and team practice

- large-scale lineage across many systems may require broader platform integration

30. Best Practices

- Version data, code, features, environment, and model artifacts together, not in isolation.

- Use immutable identifiers for reproducibility and mutable aliases only for operational convenience.

- Track experiment parameters and metrics for every serious run.

- Use a model registry for promotion workflows and rollback readiness.

- Ensure feature logic used in inference is version-aligned with training.

- Preserve lineage from source data to deployed endpoint.

31. Conclusion

Versioning data and models is foundational to modern machine learning engineering because an ML system is defined by much more than source code. Training data, feature logic, hyperparameters, environments, artifacts, and evaluation outputs all shape the final model and must be tracked if the system is to be reproducible, auditable, and maintainable.

DVC and MLflow address different but complementary parts of this challenge. DVC extends version control principles to large data artifacts and pipeline dependencies. MLflow supports experiment tracking, model packaging, and registry workflows for controlled promotion. Together, they help transform ML development from an ad hoc experimental activity into a disciplined lifecycle with lineage, comparison, rollback, and governance. In modern MLOps, versioning is not optional infrastructure; it is one of the core conditions for trustworthy machine learning.