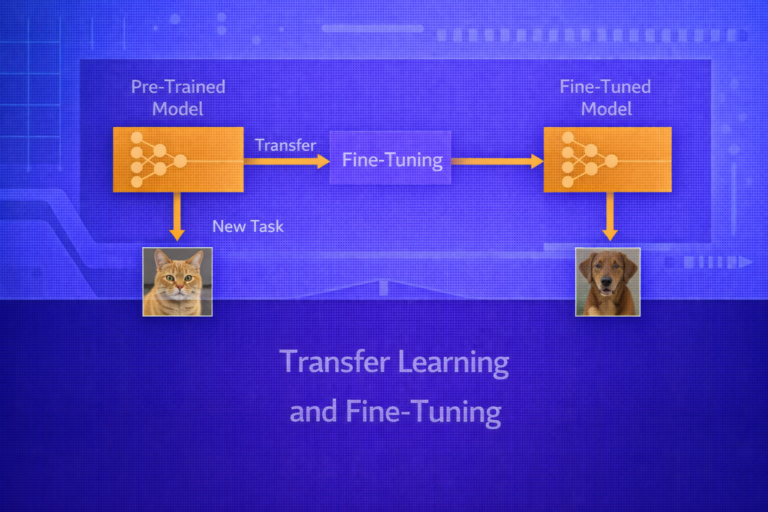

Transfer Learning and Fine-Tuning

Transfer learning is one of the most important practical ideas in modern machine learning. Rather than training a model from scratch for every new task, transfer learning reuses knowledge learned from a source task to improve learning on a target…