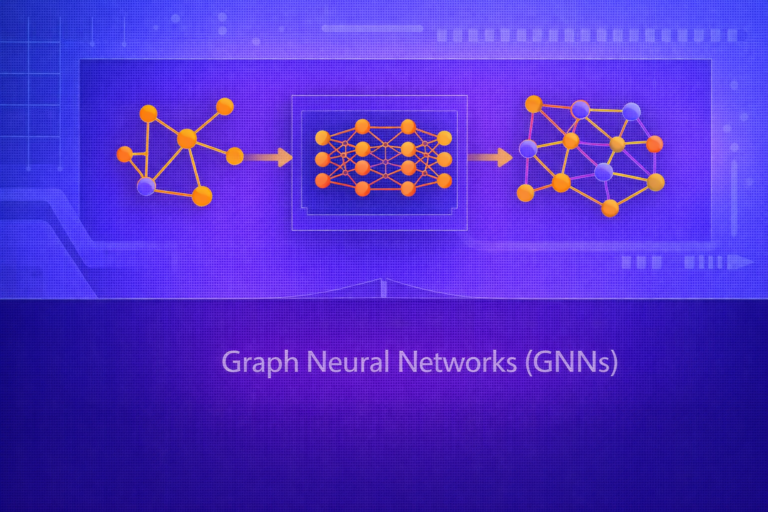

Graph Neural Networks (GNNs)

Graph Neural Networks (GNNs) are a family of neural architectures designed to operate on graph-structured data. Unlike standard machine learning models that assume independent samples or regular Euclidean grids, GNNs explicitly model entities and their relationships using nodes, edges, neighborhoods,…
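To make the vocabulary of nodes, edges, and neighborhoods concrete, here is a minimal sketch in plain Python. The toy graph, the feature values, and the helper names `build_neighborhoods` and `mean_aggregate` are all hypothetical and chosen only for illustration. The averaging step is an assumed, simplified stand-in for how a GNN layer might combine information from a node's neighborhood; it is not a specific published architecture.

```python
from collections import defaultdict

# Hypothetical toy graph: 4 nodes connected by undirected edges.
edges = [(0, 1), (0, 2), (2, 3)]

# Hypothetical 2-dimensional feature vector for each node.
features = {
    0: [1.0, 0.0],
    1: [0.0, 1.0],
    2: [1.0, 1.0],
    3: [0.0, 0.0],
}


def build_neighborhoods(edges):
    """Map each node to the set of nodes it shares an edge with."""
    nbrs = defaultdict(set)
    for u, v in edges:
        nbrs[u].add(v)
        nbrs[v].add(u)  # undirected: record the edge in both directions
    return nbrs


def mean_aggregate(features, nbrs):
    """Replace each node's features with the mean over itself and its neighbors."""
    updated = {}
    for node, feat in features.items():
        group = [feat] + [features[n] for n in sorted(nbrs[node])]
        updated[node] = [
            sum(vec[i] for vec in group) / len(group) for i in range(len(feat))
        ]
    return updated


neighborhoods = build_neighborhoods(edges)
updated = mean_aggregate(features, neighborhoods)

for node in sorted(updated):
    print(node, sorted(neighborhoods[node]), updated[node])
```

Unlike a model that treats each sample independently, every updated vector here depends on the graph structure: changing an edge changes which features are averaged together.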