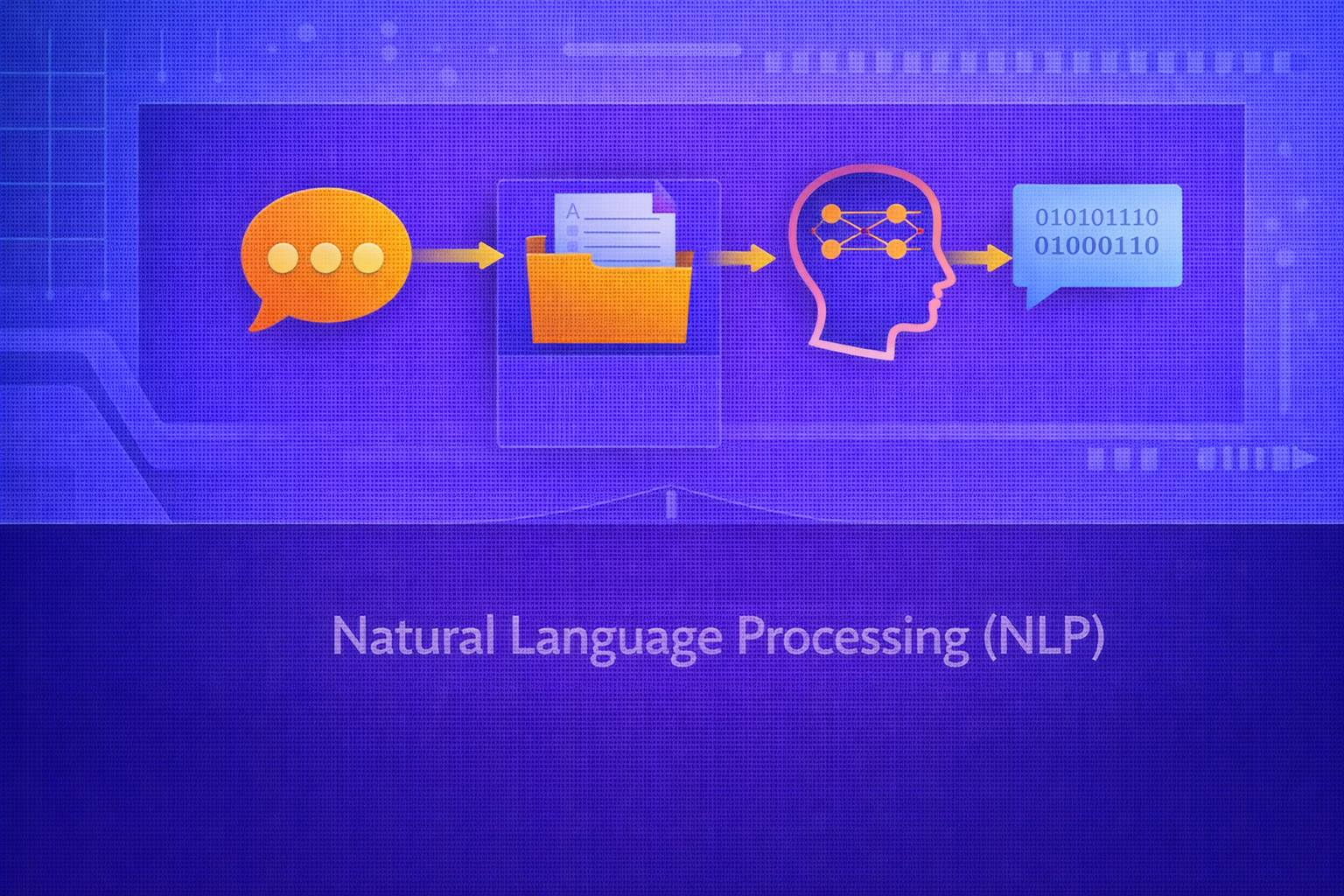

Natural Language Processing (NLP) is the field of artificial intelligence and computational linguistics concerned with enabling machines to process, represent, understand, generate, and interact through human language. It spans rule-based systems, statistical language models, machine learning pipelines, deep neural architectures, and modern foundation models. This whitepaper provides a technical overview of NLP, including its linguistic foundations, representations, probabilistic modeling, neural methods, evaluation metrics, and core application categories.

Abstract

NLP lies at the intersection of computer science, statistics, machine learning, and linguistics. Unlike purely numerical domains, language is discrete, ambiguous, compositional, context-sensitive, and deeply dependent on syntax, semantics, pragmatics, and world knowledge. This paper explains how text is represented computationally, how language models assign probabilities to sequences, how NLP tasks are framed in supervised, unsupervised, and generative settings, and how modern neural architectures transformed the field. It also covers tokenization, vector-space representations, sequence modeling, transformers, pretraining, evaluation metrics, and key challenges such as ambiguity, long-context reasoning, bias, and robustness.

1. Introduction

Human language is a symbolic, structured, and context-dependent medium. NLP attempts to build systems that operate on language data such as text or speech transcripts. Let a text sequence be represented as

$$x = (w_1, w_2, \dots, w_T),$$

where each $w_t$ is a token and $T$ is the sequence length.

The computational challenge is to map such symbolic sequences into representations and algorithms that support tasks such as classification, tagging, translation, question answering, summarization, search, dialogue, and text generation.

2. Why NLP Is Difficult

NLP is difficult because language is not just a string of words. It contains ambiguity at multiple levels:

- Lexical ambiguity: a word may have multiple meanings

- Syntactic ambiguity: a sentence may admit multiple parses

- Semantic ambiguity: meaning depends on composition and context

- Pragmatic ambiguity: intent depends on speaker goals and world knowledge

Additionally, language is sparse, highly variable, multilingual, noisy in real-world data, and dependent on cultural and contextual conventions.

3. Linguistic Levels in NLP

NLP systems often operate across multiple linguistic levels:

- Morphology: internal structure of words

- Syntax: sentence structure and grammatical relations

- Semantics: meaning of words, phrases, and sentences

- Pragmatics: meaning in context and communicative intent

- Discourse: coherence across multiple sentences

Different NLP tasks emphasize different levels of this hierarchy.

4. Text as Data

Unlike images or sensor measurements, text starts as discrete symbols rather than continuous numeric values. Before statistical or neural models can operate on text, it must be transformed into structured numerical representations.

A corpus may be represented as a collection of documents:

$$D = \{d_1, d_2, \dots, d_N\},$$

where each document is a sequence of tokens or subword units.

5. Preprocessing in Classical NLP

Traditional NLP pipelines often use preprocessing steps such as:

- lowercasing

- tokenization

- stopword removal

- stemming

- lemmatization

- sentence segmentation

While modern neural systems often reduce explicit preprocessing, these steps still matter in many settings.
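A minimal Python sketch of such a classical pipeline follows. It covers lowercasing, tokenization, stopword removal, and stemming. The stopword list, the regex tokenizer, and the suffix-stripping rules are hypothetical simplifications standing in for real resources such as curated stopword lists or the Porter stemmer. Lemmatization and sentence segmentation are omitted.

```python
import re

# Hypothetical, deliberately tiny stopword list; real pipelines use curated lists.
STOPWORDS = {"the", "a", "an", "is", "are", "on", "of", "and", "to", "in"}


def crude_stem(token: str) -> str:
    """Strip one common English suffix; a toy stand-in for a real stemmer."""
    for suffix in ("ing", "ed", "s"):
        # Keep at least three characters so short words like "is" survive.
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def preprocess(text: str) -> list[str]:
    text = text.lower()                                  # lowercasing
    tokens = re.findall(r"[a-z]+", text)                 # naive word tokenization (ASCII letters only)
    tokens = [t for t in tokens if t not in STOPWORDS]   # stopword removal
    return [crude_stem(t) for t in tokens]               # stemming


print(preprocess("The cats are sitting on the mats."))  # ['cat', 'sitt', 'mat']
```

The stem "sitt" shows how stemming differs from lemmatization. A suffix stripper can produce non-words, whereas a lemmatizer would map "sitting" to the dictionary form "sit".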

6. Tokenization

Tokenization splits text into units such as words, subwords, or characters. If a sentence is x = "The cat sat", word-level tokenization might yield (The, cat, sat).

Modern NLP frequently uses subword tokenization such as Byte Pair Encoding (BPE), WordPiece, or unigram language models. These methods balance vocabulary size against the ability to represent rare and morphologically complex words.
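BPE training can be sketched as a loop that repeatedly merges the most frequent adjacent pair of symbols. The minimal Python version below follows the merge-learning procedure popularized for NLP by Sennrich et al. Several details are illustrative assumptions: the toy word counts, the `</w>` end-of-word marker, and the tie-breaking rule (the first pair encountered wins). Production tokenizers add further details such as byte-level fallback and pre-tokenization rules. WordPiece and unigram models use different selection criteria altogether.

```python
from collections import Counter


def pair_counts(vocab: dict[tuple[str, ...], int]) -> Counter:
    """Count adjacent symbol pairs, weighted by word frequency."""
    counts = Counter()
    for symbols, freq in vocab.items():
        for pair in zip(symbols, symbols[1:]):
            counts[pair] += freq
    return counts


def merge_pair(pair: tuple[str, str], vocab: dict[tuple[str, ...], int]) -> dict[tuple[str, ...], int]:
    """Rewrite every word so each occurrence of `pair` becomes one merged symbol."""
    merged: dict[tuple[str, ...], int] = {}
    for symbols, freq in vocab.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged


def learn_bpe(word_counts: dict[str, int], num_merges: int) -> list[tuple[str, str]]:
    """Learn up to `num_merges` merge rules from a word-frequency table."""
    # Start from characters plus an end-of-word marker so merges never cross words.
    vocab = {tuple(word) + ("</w>",): count for word, count in word_counts.items()}
    merges = []
    for _ in range(num_merges):
        counts = pair_counts(vocab)
        if not counts:
            break  # every word is already a single symbol
        best = max(counts, key=counts.get)  # ties: first pair encountered wins
        vocab = merge_pair(best, vocab)
        merges.append(best)
    return merges


word_counts = {"low": 5, "lower": 2, "newest": 6, "widest": 3}  # toy corpus
print(learn_bpe(word_counts, 3))  # [('e', 's'), ('es', 't'), ('est', '</w>')]
```

At tokenization time, the learned merges are applied to new words in the same order. A word never seen during training can therefore still be split into known subword units instead of becoming a single unknown token.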

7. Vocabulary and One-Hot Encoding

Suppose the vocabulary has size $V$. A token can be represented as a one-hot vector $\mathbf{x} \in \{0,1\}^V$ with exactly one nonzero position. This representation is simple but sparse and does not capture semantic similarity.

For example, in one-hot space “cat” is exactly as far from “dog” as it is from “quantum”, even though “cat” and “dog” are semantically much closer.
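This is easy to check numerically. The Python sketch below builds one-hot vectors for a hypothetical three-word vocabulary, then compares their Euclidean distances and dot products.

```python
import math


def one_hot(index: int, vocab_size: int) -> list[int]:
    """Length-V vector with a single 1 at `index`."""
    vec = [0] * vocab_size
    vec[index] = 1
    return vec


def euclidean(u: list[int], v: list[int]) -> float:
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def dot(u: list[int], v: list[int]) -> int:
    return sum(a * b for a, b in zip(u, v))


vocab = ["cat", "dog", "quantum"]  # hypothetical vocabulary
vec = {word: one_hot(i, len(vocab)) for i, word in enumerate(vocab)}

print(euclidean(vec["cat"], vec["dog"]), euclidean(vec["cat"], vec["quantum"]))
# 1.4142135623730951 1.4142135623730951
print(dot(vec["cat"], vec["dog"]), dot(vec["cat"], vec["quantum"]))  # 0 0
```

Every pair of distinct one-hot vectors is orthogonal and separated by the same distance. Any notion of word similarity therefore has to come from somewhere else, which motivates the dense embeddings discussed below.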

8. Bag-of-Words Representation

A classical text representation is bag-of-words (BoW), where a document is represented by token counts:

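$$\mathbf{x}_d = [c_1, c_2, \dots, c_V],$$

where $c_i$ is the number of times the $i$-th vocabulary term occurs in document $d$.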

This ignores word order but provides a simple vector-space representation useful for document classification and retrieval.
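A minimal Python sketch of the count vector described above follows. The four-word vocabulary is hypothetical. Silently dropping out-of-vocabulary tokens is an assumption; some pipelines map them to a dedicated unknown-token slot instead.

```python
from collections import Counter


def bag_of_words(tokens: list[str], vocab: list[str]) -> list[int]:
    """Count vector aligned with `vocab`; tokens outside the vocabulary are ignored."""
    counts = Counter(tokens)
    return [counts[term] for term in vocab]


vocab = ["cat", "dog", "sat", "the"]  # hypothetical vocabulary, V = 4
print(bag_of_words("the cat sat".split(), vocab))  # [1, 0, 1, 1]
print(bag_of_words("sat the cat".split(), vocab))  # [1, 0, 1, 1]: word order is lost
```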

9. TF-IDF

Term Frequency–Inverse Document Frequency (TF-IDF) weights terms by both their within-document frequency and their rarity across the corpus.

A common formulation is:

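$$\text{tfidf}(t,d) = \text{tf}(t,d) \cdot \text{idf}(t),$$

where

$$\text{idf}(t) = \log\frac{N}{\text{df}(t)}.$$

Here, $\text{tf}(t,d)$ is the frequency of term $t$ in document $d$, $N$ is the number of documents, and $\text{df}(t)$ is the number of documents containing term $t$. TF-IDF is effective for many classical NLP tasks, especially retrieval and sparse linear classification.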
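The formula translates directly into Python. This sketch assumes raw counts for tf and the natural logarithm for idf. Library implementations often add smoothing and length normalization, so their weights will differ.

```python
import math
from collections import Counter


def tfidf(docs: list[list[str]]) -> list[dict[str, float]]:
    """tf(t,d) * log(N / df(t)) with raw counts as tf and the natural log."""
    n_docs = len(docs)
    df = Counter()
    for doc in docs:
        df.update(set(doc))  # each document contributes at most once per term
    weights = []
    for doc in docs:
        tf = Counter(doc)
        weights.append({t: tf[t] * math.log(n_docs / df[t]) for t in tf})
    return weights


docs = ["the cat sat".split(), "the dog sat".split(), "the cat ran".split()]
for w in tfidf(docs):
    print({t: round(v, 3) for t, v in w.items()})
# {'the': 0.0, 'cat': 0.405, 'sat': 0.405}
# {'the': 0.0, 'dog': 1.099, 'sat': 0.405}
# {'the': 0.0, 'cat': 0.405, 'ran': 1.099}
```

"the" appears in every document, so its weight is zero. "dog" and "ran" each occur in only one document, so they receive the highest weights.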

10. Distributed Word Representations

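Modern NLP moved beyond sparse symbolic vectors toward dense embeddings. A word embedding maps a token to a dense vector:

$$e(w) \in \mathbb{R}^d,$$

where $d$ is the embedding dimension, typically much smaller than the vocabulary size $V$.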

These vectors capture semantic and syntactic regularities because words appearing in similar contexts receive similar embeddings.
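In practice, $e(w)$ can be viewed as a lookup into a learned matrix $E \in \mathbb{R}^{V \times d}$. The embedding is the row of $E$ indexed by $w$, which equals the one-hot vector of $w$ multiplied by $E$. The Python sketch below checks that equivalence and compares words with cosine similarity. The vocabulary and matrix values are hand-picked, hypothetical numbers, so the similarities reflect those choices rather than anything learned from data.

```python
import math

# Hypothetical vocabulary and hand-picked 3-dimensional embeddings (V = 4, d = 3).
VOCAB = {"cat": 0, "dog": 1, "sat": 2, "quantum": 3}
E = [
    [0.8, 0.1, 0.3],   # cat
    [0.7, 0.2, 0.3],   # dog
    [0.0, 0.9, 0.1],   # sat
    [-0.5, 0.0, 0.9],  # quantum
]


def embed(word: str) -> list[float]:
    """e(w): select the row of E for this word."""
    return E[VOCAB[word]]


def embed_via_one_hot(word: str) -> list[float]:
    """Equivalent but wasteful computation: one-hot row vector times E."""
    x = [0] * len(VOCAB)
    x[VOCAB[word]] = 1
    return [sum(x[i] * E[i][j] for i in range(len(E))) for j in range(len(E[0]))]


def cosine(u: list[float], v: list[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


print(embed("cat") == embed_via_one_hot("cat"))  # True
print(cosine(embed("cat"), embed("dog")) > cosine(embed("cat"), embed("quantum")))  # True
```

Frameworks implement the lookup as direct row indexing. Building the one-hot vector and multiplying would cost $O(V)$ extra work per token.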

10.1 Distributional Hypothesis

A foundational principle is the distributional hypothesis: words that occur in similar contexts tend to have similar meanings. This idea underlies many embedding methods.

11. Word2Vec and Embedding Learning

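Word2Vec introduced efficient neural methods for learning word vectors. In the skip-gram formulation, the model predicts surrounding context words given a center word. If the center word is $w$ and a context word is $c$, a simplified objective is to maximize:

$$\log P(c \mid w).$$

With a softmax parameterization:

$$P(c \mid w) = \frac{\exp(v_c^\top u_w)}{\sum_{c'} \exp(v_{c'}^\top u_w)},$$

where $u_w$ is the center-word (input) embedding of $w$, $v_c$ is the context (output) embedding of $c$, and the sum in the denominator runs over every word $c'$ in the vocabulary.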
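The Python sketch below transcribes the skip-gram softmax for one center word. The vectors are small hypothetical values. The key point is that the denominator sums over every context word in the vocabulary. That cost is why practical word2vec training replaces the full softmax with approximations such as negative sampling or hierarchical softmax.

```python
import math


def skipgram_probs(u_w: list[float], context_vectors: list[list[float]]) -> list[float]:
    """P(c | w) for every context word c: softmax over the scores v_c . u_w."""
    scores = [sum(a * b for a, b in zip(v_c, u_w)) for v_c in context_vectors]
    m = max(scores)  # subtract the max score for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical center-word vector u_w and output vectors v_c for a 3-word vocabulary.
u_w = [1.0, 0.0]
V_ctx = [[2.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]

probs = skipgram_probs(u_w, V_ctx)
log_likelihood = math.log(probs[0])  # objective term log P(c | w) for observed context c = 0
print([round(p, 3) for p in probs])  # [0.844, 0.114, 0.042]
```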

12. Contextual Embeddings

Static embeddings assign one vector per word type. This cannot capture polysemy well. For example, the word “bank” has different meanings in “river bank” and “bank loan.”

Contextual embeddings solve this by generating token representations that depend on surrounding context:

$$h_t = f(w_1, \dots, w_T, t).$$

Models such as ELMo, BERT, and transformer-based language models produce such context-sensitive embeddings.

13. Language Modeling

A language model assigns probability to token sequences. By the chain rule:

$$P(w_1, \dots, w_T) = \prod_{t=1}^{T} P(w_t \mid w_1, \dots, w_{t-1}).$$

Language modeling is fundamental because many NLP tasks can be framed as conditional sequence prediction.

13.1 N-gram Models

Classical language models approximate the full history using only the previous $n-1$ tokens:

$$P(w_t \mid w_{1:t-1}) \approx P(w_t \mid w_{t-n+1:t-1}).$$

N-gram models are simple but suffer from data sparsity and limited context.
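The Python sketch below estimates a bigram model ($n = 2$) by maximum likelihood. The `<s>`/`</s>` sentence-boundary markers and the two-sentence corpus are illustrative assumptions. The final line shows the sparsity problem directly: a single unseen bigram drives the whole sentence probability to zero, which smoothing methods are designed to fix.

```python
from collections import Counter


def train_bigram(sentences: list[list[str]]):
    """MLE estimate P(w_t | w_{t-1}) = count(w_{t-1}, w_t) / count(w_{t-1} as a context)."""
    context_counts: Counter = Counter()
    pair_counts: Counter = Counter()
    for sent in sentences:
        tokens = ["<s>"] + sent + ["</s>"]
        for prev, cur in zip(tokens, tokens[1:]):
            context_counts[prev] += 1
            pair_counts[(prev, cur)] += 1

    def prob(prev: str, cur: str) -> float:
        if context_counts[prev] == 0:
            return 0.0  # unseen context: no estimate without smoothing
        return pair_counts[(prev, cur)] / context_counts[prev]

    return prob


def sentence_prob(prob, sent: list[str]) -> float:
    """Chain rule with the bigram approximation: product of P(w_t | w_{t-1})."""
    tokens = ["<s>"] + sent + ["</s>"]
    p = 1.0
    for prev, cur in zip(tokens, tokens[1:]):
        p *= prob(prev, cur)
    return p


corpus = [["the", "cat", "sat"], ["the", "dog", "sat"]]
prob = train_bigram(corpus)
print(sentence_prob(prob, ["the", "cat", "sat"]))  # 0.5
print(sentence_prob(prob, ["the", "cat", "ran"]))  # 0.0 (unseen bigram "cat ran")
```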

14. Sequence Models in NLP

Many NLP problems involve sequence modeling, including:

- part-of-speech tagging

- named entity recognition

- speech transcription

- machine translation

- language modeling

Sequence models such as RNNs, LSTMs, GRUs, and transformers are designed to capture dependencies across tokens.

15. Recurrent Approaches

Recurrent neural networks model token sequences by updating a hidden state:

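$$h_t = \phi(W x_t + U h_{t-1} + b),$$

where $x_t$ is the input vector at step $t$ (typically the embedding of $w_t$), $h_{t-1}$ is the previous hidden state, $W$ and $U$ are weight matrices, $b$ is a bias vector, and $\phi$ is an elementwise nonlinearity such as $\tanh$.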

LSTMs and GRUs improve long-range sequence modeling through gating mechanisms. These architectures were foundational in NLP before transformers became dominant.
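The recurrence can be sketched in plain Python as one update step plus a loop over the sequence. The choice $\phi = \tanh$ and the small weight values are assumptions for illustration. The loop makes the sequential dependency explicit: $h_t$ cannot be computed until $h_{t-1}$ is known, which is the bottleneck that transformers remove.

```python
import math


def matvec(M: list[list[float]], v: list[float]) -> list[float]:
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def rnn_step(x_t, h_prev, W, U, b):
    """h_t = tanh(W x_t + U h_{t-1} + b)."""
    wx, uh = matvec(W, x_t), matvec(U, h_prev)
    return [math.tanh(a + c + d) for a, c, d in zip(wx, uh, b)]


def rnn_forward(xs, h0, W, U, b):
    """Apply the recurrence left to right and collect every hidden state."""
    states, h = [], h0
    for x_t in xs:
        h = rnn_step(x_t, h, W, U, b)
        states.append(h)
    return states


# Hypothetical parameters: input size 2, hidden size 2.
W = [[0.5, -0.3], [0.2, 0.4]]
U = [[0.1, 0.0], [0.0, 0.1]]
b = [0.0, 0.0]
xs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # stand-ins for token embeddings x_1..x_3

states = rnn_forward(xs, [0.0, 0.0], W, U, b)
print(len(states), states[-1])
```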

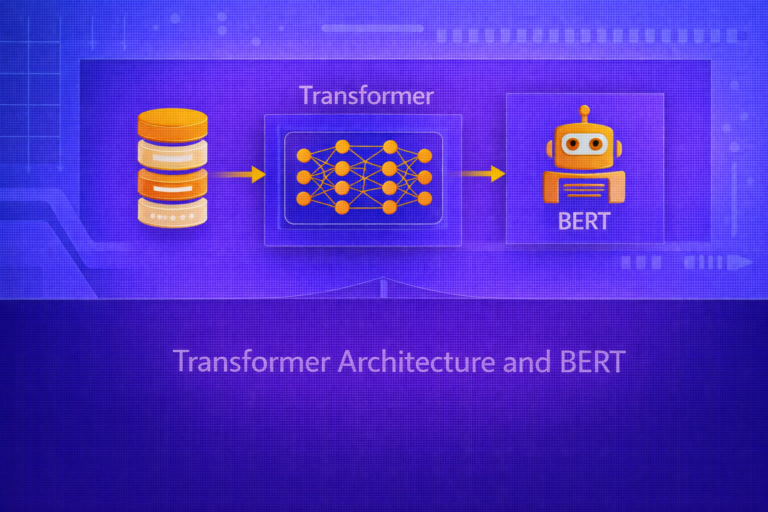

16. The Transformer Architecture

Transformers replaced recurrence with self-attention, enabling better parallelization and stronger long-context modeling. The core attention mechanism computes:

$$\text{Attention}(Q,K,V) = \text{softmax}\left(\frac{QK^\top}{\sqrt{d_k}}\right) V.$$

Here:

- $Q$: queries

- $K$: keys

- $V$: values

- $d_k$: key dimension, used for scaling

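Self-attention allows each token to attend directly to every other token in the sequence, so information can flow between any two positions in a single step regardless of their distance. Combined with parallel computation across positions, this is a major reason transformers displaced recurrent models in NLP.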
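Scaled dot-product attention is short enough to write out directly. This pure-Python sketch treats $Q$, $K$, and $V$ as lists of row vectors. It omits the learned projections, multiple heads, and masking used in full transformer layers. For self-attention, $Q$, $K$, and $V$ would all be computed from the same token representations; the example passes them in unprojected.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    m = max(scores)  # shift by the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(Q, K, V):
    """softmax(Q K^T / sqrt(d_k)) V, with each matrix given as a list of row vectors."""
    d_k = len(K[0])
    outputs = []
    for q in Q:
        # One row of Q K^T: similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        # Output row: weighted average of the value vectors.
        outputs.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return outputs


X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # hypothetical token representations
print(attention(X, X, X))  # self-attention without learned projections
```

Each output row is a convex combination of the rows of $V$, because the softmax weights are non-negative and sum to one.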

17. Positional Information

Because self-attention alone is permutation-equivariant (reordering the input tokens only reorders the outputs), transformers need positional information. This is often added through fixed positional encodings or learned positional embeddings:

$$x'_t = e(w_t) + p_t,$$

where $p_t$ is the vector representing position $t$.
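One common choice for $p_t$ is the fixed sinusoidal encoding from the original Transformer paper: $p_{t,2i} = \sin(t / 10000^{2i/d})$ and $p_{t,2i+1} = \cos(t / 10000^{2i/d})$. The Python sketch below computes it and adds it to token embeddings. A learned scheme would replace `sinusoidal_positions` with a trained lookup table. The embedding values are hypothetical.

```python
import math


def sinusoidal_positions(num_positions: int, d_model: int) -> list[list[float]]:
    """p_t[2i] = sin(t / 10000^(2i/d)), p_t[2i+1] = cos(t / 10000^(2i/d))."""
    table = []
    for t in range(num_positions):
        row = []
        for j in range(d_model):
            two_i = j - j % 2  # even index shared by each sin/cos pair
            angle = t / (10000 ** (two_i / d_model))
            row.append(math.sin(angle) if j % 2 == 0 else math.cos(angle))
        table.append(row)
    return table


def add_positions(token_embeddings: list[list[float]], positions: list[list[float]]) -> list[list[float]]:
    """x'_t = e(w_t) + p_t, elementwise at every position t."""
    return [[e + p for e, p in zip(emb, positions[t])] for t, emb in enumerate(token_embeddings)]


pe = sinusoidal_positions(num_positions=4, d_model=6)
embeddings = [[1.0] * 6 for _ in range(3)]  # hypothetical e(w_t) for a 3-token sentence
print(add_positions(embeddings, pe)[0])  # [1.0, 2.0, 1.0, 2.0, 1.0, 2.0]
```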

18. Pretraining and Fine-Tuning in NLP

Modern NLP often uses large-scale pretraining followed by task-specific fine-tuning. During pretraining, a model learns from massive text corpora using self-supervised objectives such as:

- next-token prediction

- masked language modeling

- sequence denoising

The pretrained model is then adapted to tasks such as classification, QA, summarization, or generation.
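The first two objectives differ mainly in how training targets are built from raw text. The Python sketch below shows both. The masking is simplified: BERT, for example, replaces only 80% of the selected tokens with the mask token and uses a random token or the original token for the rest. The 15% rate, the `[MASK]` string, and the fixed seed are illustrative assumptions. Sequence denoising is not shown.

```python
import random


def next_token_examples(tokens: list[str]) -> tuple[list[str], list[str]]:
    """Next-token prediction: position t sees tokens[:t+1] and must predict tokens[t+1]."""
    return tokens[:-1], tokens[1:]


def mask_tokens(tokens, mask_prob=0.15, mask_token="[MASK]", seed=0):
    """Simplified masked-LM corruption.

    Each position is masked independently with probability `mask_prob`. Targets hold
    the original token at masked positions and None elsewhere (ignored by the loss).
    """
    rng = random.Random(seed)
    inputs, targets = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            inputs.append(mask_token)
            targets.append(tok)
        else:
            inputs.append(tok)
            targets.append(None)
    return inputs, targets


tokens = "the cat sat on the mat".split()
print(next_token_examples(tokens))
print(mask_tokens(tokens, mask_prob=0.3))
```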

19. Common NLP Task Categories

19.1 Text Classification

Assign a label to a sequence or document, such as sentiment, topic, spam, or intent.

19.2 Sequence Labeling

Assign a label to each token, such as POS tagging or named entity recognition.

19.3 Machine Translation

Map a source-language sequence $x$ into a target-language sequence $y$, typically by modeling the conditional distribution:

$$P(y \mid x).$$

19.4 Question Answering

Answer a question given a passage or broader knowledge source, often via span prediction or generation.

19.5 Summarization

Generate a shorter sequence preserving salient content from a longer text.

19.6 Information Retrieval and Search

Rank documents or passages according to their relevance to a query.

19.7 Dialogue and Text Generation

Generate coherent responses or longer-form continuations conditioned on conversation history or prompts.

20. Supervised NLP Objectives

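For $K$-class classification with logits $z$, the softmax outputs are:

$$\hat{y}_k = \frac{e^{z_k}}{\sum_{j=1}^{K} e^{z_j}}.$$

With a one-hot target $y$, the cross-entropy loss is:

$$L = -\sum_{k=1}^{K} y_k \log \hat{y}_k.$$

For token-level tasks, this may be summed over positions:

$$L = -\sum_{t=1}^{T} \sum_{k=1}^{K} y_{t,k} \log \hat{y}_{t,k}.$$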
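In code, the softmax and the cross-entropy are usually fused into a log-softmax, which avoids overflow for large logits. This Python sketch assumes one-hot targets given as class indices, so the sum over $k$ reduces to a single term.

```python
import math


def log_softmax(z: list[float]) -> list[float]:
    """log yhat_k = z_k - log sum_j exp(z_j), computed with the max-shift trick."""
    m = max(z)
    log_norm = m + math.log(sum(math.exp(v - m) for v in z))
    return [v - log_norm for v in z]


def cross_entropy(z: list[float], target: int) -> float:
    """-sum_k y_k log yhat_k; with a one-hot y only the target-class term survives."""
    return -log_softmax(z)[target]


def token_level_loss(logits: list[list[float]], targets: list[int]) -> float:
    """Sum of per-position cross-entropies over t = 1..T."""
    return sum(cross_entropy(z, y) for z, y in zip(logits, targets))


print(cross_entropy([2.0, 1.0, 0.1], target=0))
print(cross_entropy([1000.0, 0.0], target=0))  # about zero; a naive math.exp(1000) would overflow
```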

21. Generative NLP Objectives

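In generative models, training typically maximizes the likelihood of each next token given its prefix. In practice this means minimizing

$$L = -\sum_{t=1}^{T} \log P(w_t \mid w_{<t}).$$

By the chain rule in Section 13, this sum equals $-\log P(w_1, \dots, w_T)$, so minimizing it is exactly minimizing the sequence-level negative log-likelihood.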

22. Evaluation Metrics in NLP

22.1 Classification Metrics

Standard supervised metrics include:

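$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN},$$

$$\text{Precision} = \frac{TP}{TP + FP},$$

$$\text{Recall} = \frac{TP}{TP + FN},$$

$$F_1 = \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}},$$

where $TP$, $TN$, $FP$, and $FN$ are the counts of true positives, true negatives, false positives, and false negatives.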
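These metrics follow directly from the four confusion-matrix counts. The Python sketch below computes them for a binary task. Returning 0.0 when a denominator is zero is one common convention, assumed here. The labels are hypothetical.

```python
def binary_metrics(y_true: list[int], y_pred: list[int], positive: int = 1) -> dict[str, float]:
    """Accuracy, precision, recall, and F1 from confusion-matrix counts."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    # Convention (an assumption): report 0.0 when a denominator is zero.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


y_true = [1, 1, 1, 0, 0, 0, 0, 0]
print(binary_metrics(y_true, [1, 1, 0, 1, 0, 0, 0, 0]))  # TP=2, FP=1, FN=1, TN=4
print(binary_metrics(y_true, [0] * 8))                   # accuracy 0.625, recall 0.0
```

The second call shows why accuracy alone can mislead on imbalanced tasks such as spam or rare-entity detection. Here, a classifier that never predicts the positive class still reaches 62.5% accuracy.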

22.2 Perplexity

For language models, perplexity is a standard metric:

$$\text{Perplexity} = \exp\left(-\frac{1}{T}\sum_{t=1}^{T} \log P(w_t \mid w_{<t})\right).$$

Lower perplexity indicates better predictive fit to the sequence distribution.
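Given the log-probabilities a model assigns to each observed token, perplexity takes one line to compute. The Python sketch below assumes natural-log probabilities. The example probabilities are hypothetical.

```python
import math


def perplexity(token_log_probs: list[float]) -> float:
    """exp of the average negative log-likelihood per token (natural logs)."""
    avg_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(avg_nll)


# A model that spreads probability uniformly over 4 candidates at every step.
uniform = [math.log(0.25)] * 10
print(perplexity(uniform))  # approximately 4.0

# Hypothetical per-token probabilities from a sharper model.
sharper = [math.log(p) for p in (0.9, 0.6, 0.8, 0.7)]
print(perplexity(sharper))
```

A uniform predictor over $k$ choices has perplexity exactly $k$, which is why perplexity is often read as an effective branching factor. The log base inside the sum must match the base of the exponent.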

22.3 BLEU

Machine translation and generation tasks often use BLEU, which measures n-gram overlap with reference outputs. Although widely used historically, BLEU has limitations because surface overlap does not fully capture meaning.
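The Python sketch below implements sentence-level BLEU in its original form. It computes clipped (modified) n-gram precisions for $n = 1, \dots, 4$, takes their uniformly weighted geometric mean, and multiplies by a brevity penalty. It assumes a single reference, pre-tokenized input, and no smoothing. Standard tools such as sacreBLEU compute corpus-level scores with standardized tokenization, so numbers from this sketch are not comparable to published results.

```python
import math
from collections import Counter


def ngram_counts(tokens: list[str], n: int) -> Counter:
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def sentence_bleu(candidate: list[str], reference: list[str], max_n: int = 4) -> float:
    """Unsmoothed single-reference BLEU with uniform weights 1/max_n."""
    log_precision_sum = 0.0
    for n in range(1, max_n + 1):
        cand, ref = ngram_counts(candidate, n), ngram_counts(reference, n)
        total = sum(cand.values())
        # Clipping: a candidate n-gram is credited at most as often as it appears in the reference.
        clipped = sum(min(count, ref[g]) for g, count in cand.items())
        if total == 0 or clipped == 0:
            return 0.0  # one zero precision makes the unsmoothed geometric mean zero
        log_precision_sum += math.log(clipped / total) / max_n
    c, r = len(candidate), len(reference)
    brevity_penalty = 1.0 if c > r else math.exp(1 - r / c)
    return brevity_penalty * math.exp(log_precision_sum)


reference = "the cat sat on the mat".split()
print(sentence_bleu(reference, reference))                           # 1.0
print(sentence_bleu("the the the the".split(), reference, max_n=1))  # 0.5 * exp(-0.5)
```

In the second call, clipping credits "the" only twice (its count in the reference), giving a unigram precision of 0.5. The brevity penalty then discounts the score for the short candidate.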

22.4 ROUGE

Summarization often uses ROUGE, which measures overlap between generated and reference summaries in terms of n-grams or longest common subsequences.
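Both ROUGE variants can be sketched in Python. ROUGE-N recall counts the reference n-grams recovered by the candidate, and ROUGE-L scores the longest common subsequence (LCS). The sketch assumes pre-tokenized input and a single reference. It reports ROUGE-L as a balanced F1, which is one of several weightings in use. Real implementations add options such as stemming and multiple references.

```python
from collections import Counter


def ngram_counts(tokens: list[str], n: int) -> Counter:
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def rouge_n_recall(candidate: list[str], reference: list[str], n: int = 1) -> float:
    """Share of reference n-grams that also occur in the candidate (counts clipped)."""
    cand, ref = ngram_counts(candidate, n), ngram_counts(reference, n)
    total = sum(ref.values())
    if total == 0:
        return 0.0
    return sum(min(count, cand[g]) for g, count in ref.items()) / total


def lcs_length(a, b) -> int:
    """Length of the longest common subsequence via dynamic programming."""
    dp = [[0] * (len(b) + 1) for _ in range(len(a) + 1)]
    for i in range(1, len(a) + 1):
        for j in range(1, len(b) + 1):
            if a[i - 1] == b[j - 1]:
                dp[i][j] = dp[i - 1][j - 1] + 1
            else:
                dp[i][j] = max(dp[i - 1][j], dp[i][j - 1])
    return dp[len(a)][len(b)]


def rouge_l_f1(candidate: list[str], reference: list[str]) -> float:
    """Balanced F-measure of LCS-based precision and recall."""
    lcs = lcs_length(candidate, reference)
    if lcs == 0:
        return 0.0
    precision, recall = lcs / len(candidate), lcs / len(reference)
    return 2 * precision * recall / (precision + recall)


reference = "the cat sat on the mat".split()
candidate = "the cat lay on the mat".split()
print(rouge_n_recall(candidate, reference, n=1), rouge_l_f1(candidate, reference))
```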

22.5 Semantic Metrics

More modern metrics often compare embeddings or semantic similarity rather than raw token overlap, especially for generation tasks.

23. Ambiguity and Context Dependence

NLP systems must handle ambiguity that often cannot be resolved from local token identity alone. Contextual modeling is therefore central. The same word may change meaning depending on nearby words, sentence structure, document topic, or conversational context.

24. Long-Range Dependencies

Some language phenomena depend on information far earlier in the sequence, such as coreference, discourse relations, or topic consistency. Architectures that handle long-range dependencies well are therefore especially valuable in NLP.

25. Multilingual NLP

NLP becomes more complex across languages because languages differ in morphology, syntax, word order, writing systems, tokenization behavior, and data availability. Multilingual models attempt to share representations across languages, often leveraging transfer learning from high-resource languages to low-resource ones.

26. Retrieval-Augmented and Knowledge-Grounded NLP

Some NLP systems do not rely only on parametric model memory. Instead, they retrieve external documents or knowledge and condition generation or prediction on that retrieved context. This helps with factual grounding and domain adaptation.

27. Robustness, Bias, and Safety

NLP systems can inherit biases from training data, fail under distribution shift, hallucinate unsupported facts, or behave unpredictably under adversarial prompts and noisy input. Robustness, fairness, privacy, and safety are therefore central engineering concerns, not afterthoughts.

28. Practical Applications of NLP

- search and document retrieval

- machine translation

- chatbots and conversational AI

- sentiment analysis and customer feedback mining

- summarization and information extraction

- code generation and software assistance

- legal, biomedical, and enterprise text processing

29. Strengths of Modern NLP

- strong contextual understanding from pretrained models

- transfer learning across tasks and domains

- powerful sequence generation capabilities

- ability to operate over unstructured text at scale

30. Limitations of NLP Systems

- language ambiguity remains fundamentally hard

- surface fluency does not guarantee factual correctness

- models can be biased by training data

- long-context reasoning and grounding remain difficult

- evaluation is task-dependent and often imperfect

31. Best Practices

- Choose tokenization and representation methods appropriate to the language and task.

- Use pretrained models when data is limited.

- Match evaluation metrics to the actual business or linguistic goal.

- Validate robustness on noisy, shifted, and edge-case inputs.

- Use grounding or retrieval when factual precision matters.

- Be cautious when interpreting surface fluency as true understanding.

32. Conclusion

Natural Language Processing is the computational study of language as data, structure, and interaction. It combines linguistic insight with probabilistic modeling, machine learning, and deep representation learning to solve tasks ranging from document classification to open-ended generation. Over time, NLP evolved from rule-based systems and sparse vector methods to deep contextual models and transformer-based foundation architectures.

A strong understanding of NLP requires more than familiarity with algorithms. It requires awareness of how language differs from other data types, why representation matters, how context changes meaning, and why evaluation in NLP is inherently nuanced. These foundations are essential for understanding both classical NLP pipelines and modern large language model systems.