AI governance and auditing are the organizational and technical disciplines used to ensure that AI systems are designed, deployed, and operated within defined legal, ethical, operational, and business boundaries. Governance establishes policies, accountability, controls, and decision rights. Auditing evaluates whether those controls are working in practice and whether the AI system’s behavior, documentation, and lifecycle evidence are consistent with stated requirements. This whitepaper explains the foundations, control structures, and technical mechanisms behind AI governance and auditing.

Abstract

As AI systems move from experimentation into high-impact production settings, organizations need more than model performance. They need structured governance over data use, model risk, human oversight, fairness, privacy, security, change management, incident response, and lifecycle accountability. They also need auditable evidence that AI systems were reviewed, validated, monitored, and controlled appropriately. This paper explains AI governance and auditing as complementary disciplines. Governance defines the rules, roles, approval paths, escalation logic, and control objectives for AI systems. Auditing tests those rules and verifies whether system behavior and evidence actually satisfy them. The paper covers AI inventories, risk classification, policy frameworks, model lifecycle controls, documentation requirements, lineage, monitoring, control testing, internal and external audit patterns, and common operating models for enterprise AI governance.

1. Introduction

Let an AI system be represented as:

S = (D, φ, M, C, P, U, O),

where:

- D is data and data governance context

- φ is transformation and feature logic

- M is the model or decision logic

- C is the control framework applied to the system

- P is deployment and operational process

- U is user and usage context

- O is oversight and ownership structure

AI governance defines how S should be controlled. AI auditing examines whether those controls are adequate, implemented, and effective.

2. Why AI Governance Is Necessary

AI systems create risks that are broader than ordinary software risk because they can:

- change behavior when data changes

- produce non-deterministic or probabilistic outputs

- embed hidden biases or proxy effects

- depend on complex third-party models or services

- affect people, rights, safety, money, or public trust

- drift after deployment without code changes

Governance is necessary because these systems need clear ownership, control, evidence, and escalation mechanisms before and after deployment.

3. Governance vs Auditing

Governance and auditing are closely related but not identical.

- Governance defines policy, risk appetite, roles, controls, approval gates, and monitoring expectations.

- Auditing evaluates whether governance is appropriately designed and whether actual practice conforms to it.

Governance asks, “What controls should exist?” Auditing asks, “Did those controls exist, operate, and produce reliable evidence?”

4. Core Governance Objectives

AI governance usually aims to ensure that systems are:

- lawful and policy-compliant

- fit for purpose

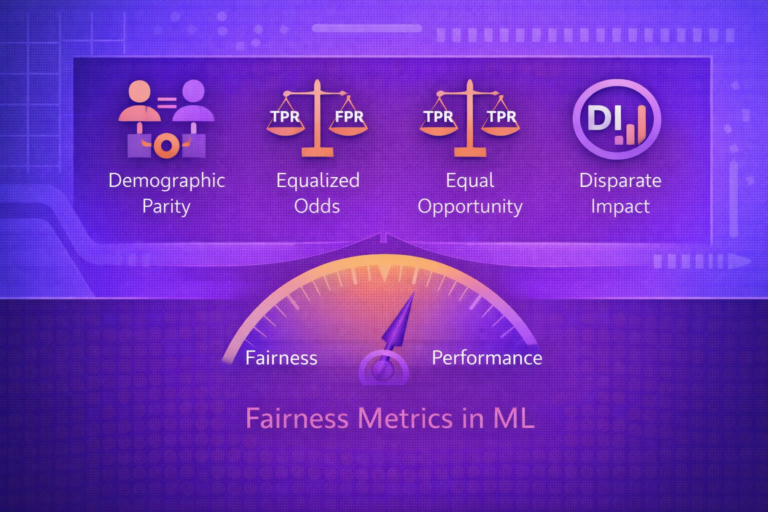

- fair and non-discriminatory where required

- privacy-preserving

- secure and misuse-resistant

- reliable and monitorable

- accountable and auditable

5. Risk-Based Governance

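Not all AI systems require the same level of control, so governance commonly uses a risk-based approach. If system risk is represented as R(S), governance intensity may be scaled so that:

ControlStrength(S) = g(R(S)),

where g is a non-decreasing function: a riskier system should never receive weaker controls than a less risky one.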

Higher-risk systems receive stronger review, documentation, monitoring, and approval requirements.
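The scaling function g can be made concrete as a step function from a risk score to a control profile. The sketch below assumes a risk score normalized to [0, 1]; the tier boundaries (0.3 and 0.7), the reviewer roles, and the monitoring intervals are hypothetical values chosen for illustration, not recommended settings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ControlProfile:
    tier: str
    required_reviews: tuple[str, ...]
    monitoring_interval_days: int  # hypothetical field: how often monitoring is reviewed


# Hypothetical tier boundaries and requirements; a real program sets these in policy.
_PROFILES = [
    (0.3, ControlProfile("low", ("owner",), 90)),
    (0.7, ControlProfile("medium", ("owner", "risk"), 30)),
    (1.0, ControlProfile("high", ("owner", "risk", "legal", "privacy", "board"), 7)),
]


def control_strength(risk_score: float) -> ControlProfile:
    """g(R(S)): map a risk score in [0, 1] to a control profile.

    Boundaries are checked in ascending order, so a higher score never
    yields a weaker profile (g is non-decreasing).
    """
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be in [0, 1]")
    for upper, profile in _PROFILES:
        if risk_score <= upper:
            return profile
    return _PROFILES[-1][1]  # not reached for valid scores; keeps the function total
```

Keeping the thresholds in a single table makes the mapping itself reviewable evidence: an auditor can compare the table against the written policy.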

6. AI Risk Classification

A typical governance program classifies systems by criteria such as:

- impact on individuals or groups

- safety relevance

- use of sensitive data

- regulatory exposure

- degree of automation

- model complexity and opacity

- external user exposure

This allows differentiated governance rather than a single control template for every use case.
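One simple way to turn the criteria above into a tier is a weighted checklist with an override rule. This is a minimal sketch: the field names, the weights (which sum to 1), the tier cut-offs, and the rule that safety relevance alone forces the high tier are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class UseCaseProfile:
    affects_individuals: bool
    safety_relevant: bool
    uses_sensitive_data: bool
    regulated_domain: bool
    fully_automated: bool
    opaque_model: bool
    externally_exposed: bool


# Hypothetical weights, one per criterion listed above; they sum to 1.0.
WEIGHTS = {
    "affects_individuals": 0.20,
    "safety_relevant": 0.25,
    "uses_sensitive_data": 0.15,
    "regulated_domain": 0.15,
    "fully_automated": 0.10,
    "opaque_model": 0.05,
    "externally_exposed": 0.10,
}


def risk_score(p: UseCaseProfile) -> float:
    """Sum the weights of the criteria that apply; the result lies in [0, 1]."""
    return sum(w for name, w in WEIGHTS.items() if getattr(p, name))


def risk_tier(p: UseCaseProfile) -> str:
    # Assumed override rule: safety relevance alone forces the high tier.
    if p.safety_relevant:
        return "high"
    score = risk_score(p)
    if score >= 0.5:
        return "high"
    if score >= 0.2:
        return "medium"
    return "low"
```

The override reflects a common design choice: some criteria are treated as disqualifying for a lower tier regardless of how the other criteria score.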

7. AI Inventory and System Registry

A foundational governance practice is maintaining an inventory of AI systems. An AI registry may track:

- system name and owner

- purpose and domain

- risk classification

- data sources

- model type

- deployment status

- review and approval history

Without inventory, governance becomes fragmented because the organization cannot even reliably identify what must be governed.
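A registry can start as a typed record per system plus a few queries that surface governance gaps. The sketch below mirrors the fields listed above; the class names and the two gap queries (unclassified systems, production systems with no recorded approval) are hypothetical examples, not a standard schema.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class RegistryEntry:
    system_name: str
    owner: str
    purpose: str
    domain: str
    risk_class: Optional[str]  # None until risk classification is done
    data_sources: list[str]
    model_type: str
    deployment_status: str  # e.g. "development", "production", "retired"
    approvals: list[str] = field(default_factory=list)  # review and approval history


class AIRegistry:
    """Hypothetical in-memory inventory keyed by system name."""

    def __init__(self) -> None:
        self._entries: dict[str, RegistryEntry] = {}

    def register(self, entry: RegistryEntry) -> None:
        if entry.system_name in self._entries:
            raise ValueError(f"duplicate system: {entry.system_name}")
        self._entries[entry.system_name] = entry

    def unclassified(self) -> list[str]:
        """Systems present in the inventory that have no risk class yet."""
        return [n for n, e in self._entries.items() if e.risk_class is None]

    def in_production_without_approval(self) -> list[str]:
        """Production systems with an empty approval history."""
        return [
            n
            for n, e in self._entries.items()
            if e.deployment_status == "production" and not e.approvals
        ]
```

In practice the same queries would run against a database or asset-management tool; the point is that gaps become queryable once every system has an entry.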

8. Roles and Accountability

Governance requires clear assignment of responsibilities. Common accountable roles may include:

- system owner

- model developer

- data owner

- risk owner

- security reviewer

- privacy reviewer

- approving authority or governance board

If responsibility is diffuse, auditability and incident handling weaken quickly.

9. Policy Frameworks

Governance depends on written policy. A policy framework may define:

- acceptable and prohibited uses

- documentation requirements

- review triggers

- testing standards

- human oversight rules

- monitoring obligations

- incident escalation paths

A policy becomes operational only when it is translated into enforceable control points.

10. Lifecycle Control Points

AI governance is strongest when mapped to lifecycle stages:

- use-case initiation

- data acquisition and preparation

- training and experimentation

- evaluation and validation

- deployment approval

- monitoring and maintenance

- retirement or decommissioning

At each stage, specific evidence and approvals may be required.
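Stage gates can be expressed as a table of required evidence per stage, where entering a stage requires the evidence of every earlier stage. This is a minimal sketch; the stage enum follows the list above, while the evidence artifact names are hypothetical labels loosely based on the documentation artifacts discussed in this paper.

```python
from enum import Enum


class Stage(Enum):
    INITIATION = 1
    DATA = 2
    TRAINING = 3
    VALIDATION = 4
    DEPLOYMENT_APPROVAL = 5
    MONITORING = 6
    RETIREMENT = 7


# Hypothetical evidence that each stage must produce before the next stage starts.
REQUIRED_EVIDENCE = {
    Stage.INITIATION: {"use_case_description", "risk_assessment"},
    Stage.DATA: {"dataset_description"},
    Stage.TRAINING: {"training_run_log"},
    Stage.VALIDATION: {"evaluation_report"},
    Stage.DEPLOYMENT_APPROVAL: {"model_card", "deployment_approval_record", "monitoring_plan"},
    Stage.MONITORING: {"monitoring_review"},
    Stage.RETIREMENT: {"decommissioning_record"},
}


def missing_evidence(target: Stage, evidence: set[str]) -> set[str]:
    """Evidence still required before a system may enter `target`.

    Entering a stage requires the evidence produced by all earlier stages.
    """
    required: set[str] = set()
    for stage in Stage:  # iterates in definition order
        if stage.value < target.value:
            required |= REQUIRED_EVIDENCE[stage]
    return required - evidence


def can_advance(target: Stage, evidence: set[str]) -> bool:
    return not missing_evidence(target, evidence)
```

Returning the missing set, not just a boolean, gives reviewers an actionable list and gives auditors a record of why a gate was blocked.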

11. Documentation Requirements

AI governance depends heavily on documentation. Typical required artifacts include:

- use-case description

- risk assessment

- dataset description

- model card or system card

- evaluation report

- fairness and safety assessment

- deployment approval record

- monitoring plan

Documentation converts AI development from an opaque activity into an auditable process.

12. Data Lineage and Model Lineage

Governance and auditing require traceability. If a model artifact is produced as

M = Train(D, φ, λ, E, C),

where C here denotes the code version rather than the control framework of Section 1, then lineage should reveal:

- which dataset version D was used

- which feature logic φ was used

- which hyperparameters λ were used

- which environment E and code version C were used

Without lineage, the system cannot be reliably audited or reproduced.
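Each argument of Train(D, φ, λ, E, C) can be captured in a lineage record and reduced to a single fingerprint, so two artifacts can be compared by their inputs. A minimal sketch, assuming that versions are recorded as strings (for example a dataset tag, a container image digest, and a commit hash); the field names are hypothetical.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class LineageRecord:
    dataset_version: str        # D
    feature_logic_version: str  # φ
    hyperparameters: dict       # λ
    environment: str            # E, e.g. a container image digest
    code_version: str           # C, e.g. a commit hash

    def fingerprint(self) -> str:
        """SHA-256 over a canonical JSON serialization of the record.

        sort_keys=True orders keys (including nested hyperparameter keys),
        so records with equal content always produce the same fingerprint.
        """
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()
```

Storing the fingerprint alongside the deployed model lets an auditor check that the artifact in production matches the lineage that was validated and approved.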

13. Validation Governance

Governance should define what counts as acceptable validation. Depending on the use case, validation may include:

- performance thresholds

- subgroup fairness review

- robustness testing

- privacy and security review

- human factors and usability checks

- calibration and uncertainty assessment

A deployment gate may be framed as:

Deploy(S) only if RiskResidual(S) ≤ τ,

where τ is the acceptable residual risk threshold.
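The deployment gate needs some model of residual risk. The sketch below assumes a deliberately simple, hypothetical model in which each control removes a fraction e of whatever risk remains, so RiskResidual = inherent × Π(1 − e). Real programs often use qualitative scales instead; the gate logic is the same either way.

```python
def residual_risk(inherent: float, control_effectiveness: list[float]) -> float:
    """Hypothetical model: each control removes a fraction e of the remaining risk."""
    r = inherent
    for e in control_effectiveness:
        if not 0.0 <= e <= 1.0:
            raise ValueError("effectiveness must be in [0, 1]")
        r *= 1.0 - e
    return r


def deploy_allowed(inherent: float, control_effectiveness: list[float], tau: float) -> bool:
    """Deploy(S) only if RiskResidual(S) <= tau."""
    return residual_risk(inherent, control_effectiveness) <= tau
```

For example, an inherent risk of 0.8 with two controls each rated 50% effective leaves a residual of 0.2, which passes a threshold of 0.25 but fails a threshold of 0.1.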

14. Approval and Sign-Off Workflow

High-impact AI systems should not be deployed without formal approval. Governance may require staged sign-offs from technical, risk, legal, privacy, and business stakeholders depending on risk level.

A mature organization distinguishes between development readiness and production approval.

15. Human Oversight Controls

Governance should specify whether a system can operate autonomously or requires human review. Typical controls include:

- manual approval for high-risk outputs

- override capability

- appeal or correction mechanisms

- review queues for uncertain cases

- restricted autonomy in sensitive contexts

Auditors later examine whether these controls existed and were actually used.
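Two of the controls above, manual approval for high-risk outputs and review queues for uncertain cases, reduce to a routing rule. A minimal sketch, assuming the model exposes a confidence score in [0, 1] and that high-risk outputs are flagged upstream; the 0.9 confidence floor is a hypothetical default.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    case_id: str
    prediction: str
    confidence: float  # model's probability for `prediction`, in [0, 1]
    high_risk: bool    # e.g. an adverse decision about a person


def route(d: Decision, confidence_floor: float = 0.9) -> str:
    """Return 'manual_approval', 'review_queue', or 'auto'.

    High-risk outputs always go to a human, regardless of confidence;
    low-confidence outputs go to a review queue; the rest are automated.
    """
    if d.high_risk:
        return "manual_approval"
    if d.confidence < confidence_floor:
        return "review_queue"
    return "auto"
```

For an auditor, the useful evidence is the log of routing outcomes and the human decisions taken on routed cases, which shows whether the oversight path was actually exercised.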

16. Monitoring Governance

Governance does not end at launch. It should define what must be monitored in production, such as:

- service reliability

- data drift

- performance degradation

- fairness drift

- safety incidents

- security misuse patterns

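If a monitored metric at time t is M(t) (a metric, distinct from the model M), governance should define action thresholds whose direction matches the metric. For a metric where higher values are worse, such as a drift score:

if M(t) > τ then escalate

For a metric where lower values are worse, such as accuracy:

if M(t) < τ then pause or review.

Each monitored metric carries its own threshold τ.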
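Directional thresholds can be declared as data and evaluated against each monitoring snapshot. A minimal sketch, assuming metrics arrive as a name-to-value mapping; the metric names, thresholds, and action labels in the usage note are hypothetical, and treating a missing metric as a finding is a design assumption.

```python
from dataclasses import dataclass
from typing import Literal


@dataclass(frozen=True)
class MetricRule:
    name: str
    threshold: float
    direction: Literal["upper", "lower"]  # "upper": breach when value > threshold
    action: str


def evaluate(rules: list[MetricRule], observed: dict[str, float]) -> list[tuple[str, str]]:
    """Return (metric, action) for every rule breached by the observed values M(t)."""
    actions = []
    for rule in rules:
        value = observed.get(rule.name)
        if value is None:
            # A metric that should be monitored but is absent is itself a finding.
            actions.append((rule.name, "missing_metric"))
            continue
        if rule.direction == "upper":
            breached = value > rule.threshold
        else:
            breached = value < rule.threshold
        if breached:
            actions.append((rule.name, rule.action))
    return actions
```

For instance, with a rule that escalates when a drift score such as the population stability index exceeds 0.2 and a rule that pauses for review when accuracy falls below 0.85, a snapshot of drift 0.31 and accuracy 0.90 yields only the escalation.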

17. Change Management

AI systems can change due to:

- model retraining

- data source changes

- prompt or instruction changes

- feature engineering modifications

- vendor model updates

- threshold changes

Governance should define which changes are minor and which require revalidation or renewed approval.
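The minor-versus-revalidation decision can be encoded as a policy table over change types, taking the strictest action when several changes ship together. This is a sketch under stated assumptions: the table entries, including the "documentation_fix" example of a minor change, the default of strictest treatment for unknown change types, and the rule that high-risk systems always need renewed approval, are hypothetical policy choices.

```python
# Hypothetical policy table mapping change types to the required action.
CHANGE_POLICY = {
    "threshold_change": "revalidate",
    "prompt_change": "revalidate",
    "feature_engineering": "revalidate",
    "model_retraining": "revalidate",
    "data_source_change": "revalidate_and_reapprove",
    "vendor_model_update": "revalidate_and_reapprove",
    "documentation_fix": "minor",
}
RANK = {"minor": 0, "revalidate": 1, "revalidate_and_reapprove": 2}


def required_action(change_types: list[str], risk_tier: str) -> str:
    """Strictest action required by any change in the release."""
    # Assumption: unrecognized change types get the strictest treatment.
    actions = [CHANGE_POLICY.get(c, "revalidate_and_reapprove") for c in change_types]
    action = max(actions, key=RANK.__getitem__, default="minor")
    # Assumption: for high-risk systems, any revalidation also needs renewed approval.
    if risk_tier == "high" and action == "revalidate":
        action = "revalidate_and_reapprove"
    return action
```

Defaulting unknown changes to the strictest path means a gap in the policy table fails closed rather than silently letting a change through.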

18. Third-Party and Vendor Governance

Many organizations use external models, APIs, or pretrained systems. Governance must therefore address third-party risk, including:

- vendor documentation quality

- data handling by providers

- model update transparency

- security and privacy posture

- contractual and regulatory obligations

A system can be high-risk even if built mostly from vendor components.

19. Internal Audit Objectives

Internal AI audits commonly ask:

- Was the system registered and risk-classified?

- Were required approvals obtained?

- Was validation evidence complete and credible?

- Were monitoring controls implemented and reviewed?

- Were incidents handled according to policy?

- Is documentation sufficient to reproduce and explain the system?

20. Audit Evidence

AI auditing depends on evidence, not declarations. Examples of auditable evidence include:

- system inventory entries

- approval records

- dataset versions and lineage logs

- test and validation reports

- deployment manifests

- monitoring dashboards and alerts

- incident tickets and remediation records

21. Design Effectiveness vs Operating Effectiveness

Auditing usually distinguishes between:

- design effectiveness: whether the control is well-designed to address the risk

- operating effectiveness: whether the control actually operated as intended over time

A policy can look good on paper yet fail operationally if teams bypass it or evidence is incomplete.
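Operating effectiveness is tested against records rather than documents. As one example, an auditor might check that every sampled deployment has approval evidence dated before the deployment itself. A minimal sketch, assuming deployment and approval timestamps have already been extracted from change and approval systems; the record type is hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class DeploymentRecord:
    system: str
    deployed_at: datetime
    approved_at: Optional[datetime]  # None means no approval evidence was found


def operating_exceptions(records: list[DeploymentRecord]) -> list[tuple[str, str]]:
    """Deployments where the approval control did not operate as designed."""
    exceptions = []
    for r in records:
        if r.approved_at is None:
            exceptions.append((r.system, "no approval evidence"))
        elif r.approved_at > r.deployed_at:
            exceptions.append((r.system, "approved after deployment"))
    return exceptions
```

A well-designed approval policy with a non-empty exception list is exactly the case described above: sound on paper, but not operating as intended.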

22. Sampling and Testing in Audits

Auditors often test a sample of AI systems, changes, or incidents. If the total number of governed systems is N and the audit sample size is n, the audit assesses a subset of n out of N systems while trying to infer control reliability across the broader population.

For high-risk systems, full review rather than sampling may be appropriate.
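Full review for high-risk systems and sampling for the rest can be combined in one selection step. A minimal sketch, assuming each system is tagged with a risk tier and using simple random sampling without replacement; recording the seed is a suggested practice so the selection can be reproduced as audit evidence.

```python
import random


def select_audit_population(systems: dict[str, str], n: int, seed: int) -> list[str]:
    """Select systems for audit testing.

    `systems` maps system name -> risk tier. All high-risk systems are
    included (full review); up to n further systems are drawn without
    replacement from the remainder by simple random sampling.
    """
    high = sorted(name for name, tier in systems.items() if tier == "high")
    rest = sorted(name for name, tier in systems.items() if tier != "high")
    rng = random.Random(seed)  # fixed seed makes the selection reproducible
    sampled = rng.sample(rest, min(n, len(rest)))
    return high + sorted(sampled)
```

Sorting the remainder before sampling makes the result depend only on the set of systems and the seed, not on dictionary insertion order.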

23. Quantitative Audit Signals

Some governance programs use measurable control indicators such as:

- percentage of AI systems registered

- percentage with completed risk assessment

- percentage with subgroup evaluation

- mean remediation time for incidents

- monitoring coverage ratio

For example, a control completion ratio may be:

CCR = (# compliant systems) / (# in-scope systems).
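The ratio is straightforward to compute from inventory records. A minimal sketch, assuming each system is a dictionary with an optional "in_scope" flag (defaulting to in scope) and one boolean per control; the key names are hypothetical.

```python
def control_completion_ratio(systems: list[dict], control: str) -> float:
    """CCR = (# compliant systems) / (# in-scope systems) for one control."""
    in_scope = [s for s in systems if s.get("in_scope", True)]
    if not in_scope:
        raise ValueError("no in-scope systems")
    # A system without a recorded value for the control counts as non-compliant.
    compliant = sum(1 for s in in_scope if s.get(control, False))
    return compliant / len(in_scope)
```

As an illustrative calculation, 45 compliant systems out of 60 in scope give CCR = 45 / 60 = 0.75. Treating missing values as non-compliant keeps the ratio from overstating coverage when evidence is absent.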

24. Auditability of Generative AI

Generative AI adds governance and audit challenges because systems may be:

- non-deterministic

- prompt-dependent

- tool-augmented

- updated by vendors without transparent weights access

Auditing such systems often requires prompt libraries, red-team evidence, response logging, tool-call traceability, and control over prompt or policy changes.
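Response logging and tool-call traceability can be built into every generative AI call as a structured log line. The sketch below is an assumption-laden example: the field names are hypothetical, and it stores SHA-256 hashes of prompts, responses, and tool arguments instead of full text, which is one possible privacy choice rather than a requirement.

```python
import hashlib
import json
from datetime import datetime, timezone


def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def audit_log_entry(
    model_id: str,
    prompt_version: str,
    prompt: str,
    response: str,
    tool_calls: list[dict],
) -> str:
    """Build one JSON log line for a generative AI interaction.

    Each tool call is expected as {"tool": name, "args": {...}}. Content is
    stored as hashes here; whether to keep full text is a policy decision.
    """
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_id": model_id,              # vendor model name/version as reported
        "prompt_version": prompt_version,  # version of the governed prompt template
        "prompt_sha256": _sha256(prompt),
        "response_sha256": _sha256(response),
        "tool_calls": [
            {"tool": c["tool"], "args_sha256": _sha256(json.dumps(c["args"], sort_keys=True))}
            for c in tool_calls
        ],
    }
    return json.dumps(entry, sort_keys=True)
```

Logging the prompt template version next to the reported model identifier lets an auditor attribute a behavior change either to an internal prompt change or to a vendor model update.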

25. Governance Boards and Review Committees

Many enterprises establish an AI governance board or review committee that handles:

- high-risk use-case approval

- policy interpretation

- exception handling

- incident escalation

- cross-functional coordination

These structures help convert abstract policy into real decision-making.

26. Regulatory and Policy Alignment

Governance programs often need to map internal controls to external requirements such as privacy law, sector rules, procurement obligations, or emerging AI regulation. Auditing then checks whether internal evidence supports those mappings in practice.

A useful operating pattern is to translate external obligations into explicit internal controls rather than relying on vague alignment claims.

27. Incident Response and Escalation

Governance should specify how AI incidents are classified, escalated, investigated, and closed. Common incident types include:

- unsafe outputs

- bias findings

- privacy breaches

- model drift causing harmful decisions

- security compromise or misuse

Auditors later test whether incident handling matched policy and whether remediation was effective.

28. Common Failure Modes in AI Governance

- no complete AI inventory

- risk classification performed inconsistently

- approval steps bypassed for speed

- monitoring defined but not implemented

- third-party AI adopted without proper review

- documentation too weak for reproducibility or audit

- ownership unclear during incidents

29. Strengths of Mature AI Governance

- improves accountability

- reduces uncontrolled deployment risk

- supports regulatory and policy readiness

- improves traceability and incident response

- creates decision clarity for high-risk systems

30. Limitations and Trade-Offs

- governance can become performative if evidence is weak

- overly heavy controls can slow innovation without improving outcomes

- audits can verify process better than they verify moral adequacy

- third-party and foundation-model dependencies complicate control boundaries

- policy quality matters as much as policy existence

31. Best Practices

- Maintain a living inventory of all in-scope AI systems.

- Apply governance proportionally using risk-based classification.

- Translate principles into explicit lifecycle controls and evidence requirements.

- Require lineage, validation evidence, and documented ownership before deployment.

- Audit both design effectiveness and operating effectiveness of controls.

- Include third-party and generative AI systems explicitly in governance scope.

- Connect monitoring, incident response, and change management into one continuous control loop.

32. Conclusion

AI governance and auditing are essential because AI systems create risks that cannot be managed by model metrics alone. Governance provides the control architecture: policies, roles, approval gates, monitoring requirements, and escalation mechanisms. Auditing provides the verification layer: evidence-based testing of whether those controls are well-designed and actually operating.

The central idea is that trustworthy AI requires both discipline and proof. It is not enough to claim that a system is fair, safe, or compliant; organizations must be able to show how it was governed, how it was tested, what evidence exists, what changed over time, and how issues are handled when things go wrong. Mature AI governance and auditing therefore turn AI from an opaque experimental capability into a controlled, reviewable, and accountable enterprise system.